In this question you will implement a Naive Bayes classifier for a text classification problem. You...

Question:

Transcribed Image Text:

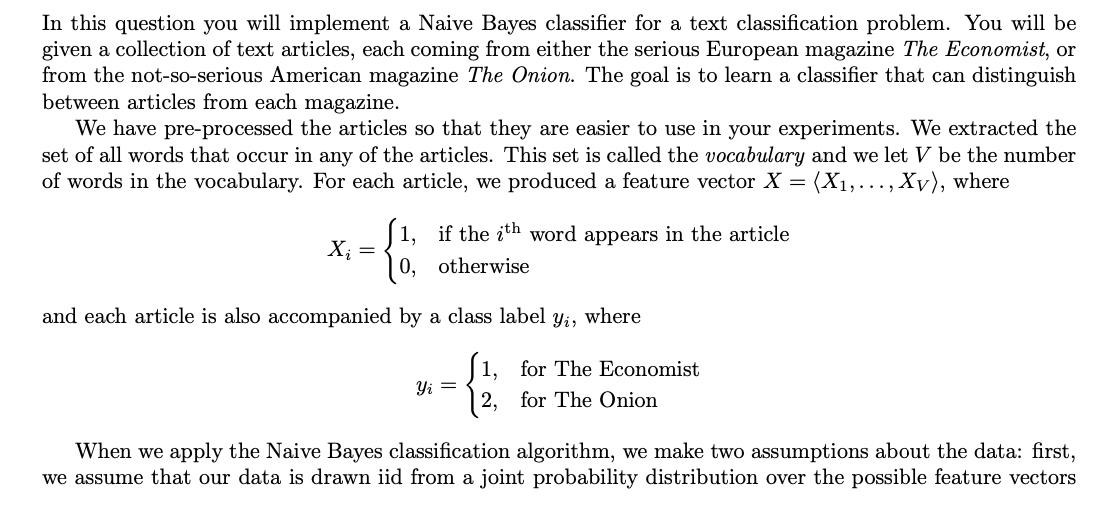

In this question you will implement a Naive Bayes classifier for a text classification problem. You will be given a collection of text articles, each coming from either the serious European magazine The Economist, or from the not-so-serious American magazine The Onion. The goal is to learn a classifier that can distinguish between articles from each magazine.

We have pre-processed the articles so that they are easier to use in your experiments. We extracted the set of all words that occur in any of the articles. This set is called the vocabulary, and we let $V$ be the number of words in the vocabulary. For each article, we produced a feature vector $X = (X_1, \dots, X_V)$, where $X_i = 1$ if the $i$th word appears in the article and $X_i = 0$ otherwise. Each article is also accompanied by a class label $Y_i$, where

$$
Y_i = \begin{cases} 1, & \text{for The Economist} \\ 2, & \text{for The Onion} \end{cases}
$$

When we apply the Naive Bayes classification algorithm, we make two assumptions about the data: first, we assume that our data is drawn iid from a joint probability distribution over the possible feature vectors $X$ and the corresponding class labels $Y$; second, we assume for each pair of features $X_i$ and $X_j$ with $i \neq j$ that $X_i$ is conditionally independent of $X_j$ given the class label $Y$ (this is the Naive Bayes assumption). Under these assumptions, a natural classification rule is as follows: given a new input $X$, predict the most probable class label $\hat{Y}$ given $X$. Formally,

$$
\hat{Y} = \arg\max_y P(Y = y \mid X).
$$

Using Bayes Rule and the Naive Bayes assumption, we can rewrite this classification rule as follows:

$$
\begin{aligned}
\hat{Y} &= \arg\max_y \frac{P(X \mid Y = y)\,P(Y = y)}{P(X)} && \text{(Bayes Rule)} \\
&= \arg\max_y P(X \mid Y = y)\,P(Y = y) && \text{(Denominator does not depend on } y\text{)} \\
&= \arg\max_y P(X_1, \dots, X_V \mid Y = y)\,P(Y = y) \\
&= \arg\max_y \left( \prod_{w=1}^{V} P(X_w \mid Y = y) \right) P(Y = y) && \text{(Conditional independence)}
\end{aligned}
$$

Of course, since we don't know the true joint distribution over feature vectors $X$ and class labels $Y$, we need to estimate the probabilities $P(X \mid Y = y)$ and $P(Y = y)$ from the training data.

For each word index $w \in \{1, \dots, V\}$ and class label $y \in \{1, 2\}$, the distribution of $X_w$ given $Y = y$ is a Bernoulli distribution with parameter $\theta_{yw}$. In other words, there is some unknown number $\theta_{yw}$ such that

$$
P(X_w = 1 \mid Y = y) = \theta_{yw}, \qquad P(X_w = 0 \mid Y = y) = 1 - \theta_{yw}.
$$

We believe that there is a non-zero (but maybe very small) probability that any word in the vocabulary can appear in an article from either The Onion or The Economist. To make sure that our estimated probabilities are always non-zero, we will impose a Beta(2,1) prior on $\theta_{yw}$ and compute the MAP estimate from the training data.

Similarly, the distribution of $Y$ (when we consider it alone) is a Bernoulli distribution with parameter $p$. In other words, there is some unknown number $p$ such that

$$
P(Y = 1) = p, \qquad P(Y = 2) = 1 - p.
$$

In this case, since we have many examples of articles from both The Economist and The Onion, there is no risk of having zero-probability estimates, so we will instead use the MLE for the distribution of $Y$.

Questions

1. What would be the MAP estimate of $\theta_{yw} = P(X_w = 1 \mid Y = y)$ with a Beta(2,1) prior distribution? [14 points]
2. What would be the MLE estimate for the prior, $p = P(Y = 1)$? [8 points]
3. Given $\theta_{yw}$, what would be the value of $P(X_w \mid Y = y)$? [4 points]
4. How would you classify a test example? Write the classification rule equation for a new test sample $X_{\text{test}}$ using $\theta_{yw}$ and $p$. [8 points]
5. How do you think the train and test error would compare? Explain any significant differences. Hint: You can try to implement Naive Bayes for this question using Python libraries and by finding a similar dataset. [4 points]
6. If we have less training data for the same problem, does the prior have more or less impact on our classifier? Explain any possible difference between the train and test error in this question and when we used the whole data. [6 points]
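The estimation and classification steps described in the question can be sketched in plain Python, in the spirit of the hint in question 5. This is a minimal illustration under stated assumptions, not a reference solution: the function names `fit_bernoulli_nb`, `predict`, and `safe_log` and the four-article toy dataset are made up for this example, and the MAP formula used is the standard Beta-Bernoulli mode, (count + a - 1) / (n + a + b - 2), with a = 2 and b = 1.

```python
import math


def safe_log(v):
    """Return log(v), or -inf when v is exactly 0 (math.log(0) would raise)."""
    return math.log(v) if v > 0 else float("-inf")


def fit_bernoulli_nb(X, y):
    """Estimate p by MLE and theta[c][w] by MAP under a Beta(2,1) prior.

    X is a list of binary feature vectors, y a list of labels in {1, 2}.
    """
    V = len(X[0])
    n = {1: 0, 2: 0}                      # number of articles per class
    count = {1: [0] * V, 2: [0] * V}      # class-c articles containing word w
    for x, label in zip(X, y):
        n[label] += 1
        for w, x_w in enumerate(x):
            count[label][w] += x_w
    p = n[1] / len(y)                     # MLE of P(Y = 1)
    # Mode of the Beta posterior with a = 2, b = 1: (count + 1) / (n + 1).
    theta = {c: [(count[c][w] + 1) / (n[c] + 1) for w in range(V)]
             for c in (1, 2)}
    return p, theta


def predict(x, p, theta):
    """Return the class maximizing log P(Y = c) + sum_w log P(X_w = x_w | Y = c)."""
    prior = {1: p, 2: 1 - p}
    scores = {}
    for c in (1, 2):
        score = safe_log(prior[c])
        for w, x_w in enumerate(x):
            score += safe_log(theta[c][w] if x_w == 1 else 1 - theta[c][w])
        scores[c] = score
    return max(scores, key=scores.get)


# Hypothetical toy data: 3-word vocabulary, two articles per class.
X_train = [[1, 1, 0], [1, 0, 0], [0, 1, 1], [0, 0, 1]]
y_train = [1, 1, 2, 2]
p, theta = fit_bernoulli_nb(X_train, y_train)
print(p, theta)
print(predict([1, 0, 0], p, theta), predict([0, 1, 1], p, theta))
```

Working in log space avoids underflow when $V$ is large. Note that a Beta(2,1) prior keeps $\theta_{yw}$ away from 0 but not from 1: a word that appears in every training article of a class gets $\theta_{yw} = 1$, so $1 - \theta_{yw} = 0$, which is why the sketch guards the logarithm.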

Expert Answer:

1. The MAP estimate of $P(X = 1 \mid Y = y)$ with a Beta(2,1) prior distribution would be $\hat{p}(X = 1 \mid Y = y) = \dots$
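For reference, the estimates asked for in questions 1 and 2 follow from the standard Beta-Bernoulli result. The derivation below is a sketch and is not taken from the truncated answer; the symbols $n_{yw}$ (number of class-$y$ training articles that contain word $w$) and $N_y$ (number of class-$y$ training articles) are notation introduced here.

$$
\begin{aligned}
P(\theta_{yw} \mid \text{data}) &\propto \underbrace{\theta_{yw}^{\,n_{yw}} (1 - \theta_{yw})^{N_y - n_{yw}}}_{\text{likelihood}} \cdot \underbrace{\theta_{yw}^{\,2 - 1} (1 - \theta_{yw})^{1 - 1}}_{\text{Beta}(2,1)\text{ prior}}
= \theta_{yw}^{\,n_{yw} + 1} (1 - \theta_{yw})^{N_y - n_{yw}} \\
0 &= \frac{\partial}{\partial \theta_{yw}} \log P(\theta_{yw} \mid \text{data})
= \frac{n_{yw} + 1}{\theta_{yw}} - \frac{N_y - n_{yw}}{1 - \theta_{yw}} \\
\hat{\theta}_{yw}^{\text{MAP}} &= \frac{n_{yw} + 1}{N_y + 1}, \qquad
\hat{p}^{\text{MLE}} = \frac{N_1}{N_1 + N_2}
\end{aligned}
$$

Because $n_{yw} + 1 \geq 1$, the MAP estimate is never zero, which is the purpose of the prior stated in the question. It can still equal 1 when $n_{yw} = N_y$, in which case the posterior is increasing in $\theta_{yw}$ and its maximum sits at the boundary.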
