Problem 3: Proving Gaussian NB is equivalent to logistic regression


Question:

Transcribed Image Text:

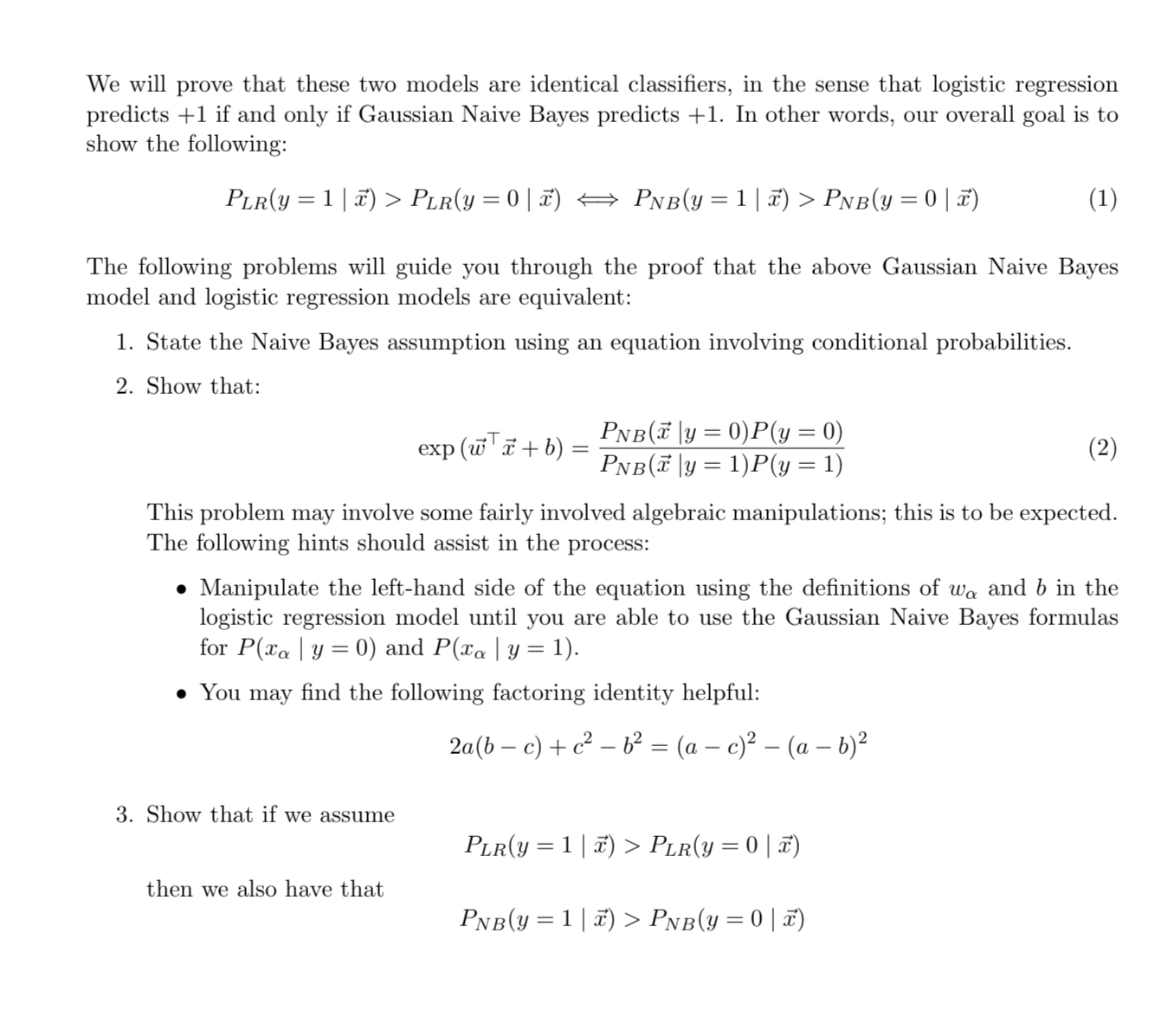

Problem 3: Proving Gaussian NB is equivalent to logistic regression

The lecture notes give a proof that multinomial Naive Bayes is equivalent to a linear classifier. In this problem, we will show that Gaussian Naive Bayes (GNB) produces the same model as logistic regression when the Naive Bayes assumptions hold.

We will consider the following binary classification problem: each input data point $\vec{x}$ has $d$ features, denoted $x_1, \ldots, x_d$, which are each real numbers. Each data point is in one of two classes, represented by $y = 0$ and $y = 1$. The Gaussian Naive Bayes and logistic regression models both attempt to predict the class label $y$ given the features $\vec{x}$.

Consider a Gaussian Naive Bayes model as follows. The Gaussian Naive Bayes classifier predicts the class label for each data point by computing $P_{NB}(y = 0 \mid \vec{x})$ and $P_{NB}(y = 1 \mid \vec{x})$ and choosing the more likely label $y$ given the features $\vec{x}$. For notational convenience, we define the following:

$$\pi = P(y = 1), \qquad 1 - \pi = P(y = 0)$$

For each feature $x_a$ and class $c$, there is a conditional distribution $P(x_a \mid y = c) = \mathcal{N}(\mu_{ac}, \sigma_a^2)$. Equivalently,

$$P(x_a \mid y = c) = \frac{1}{\sigma_a\sqrt{2\pi}} \exp\left(-\frac{(x_a - \mu_{ac})^2}{2\sigma_a^2}\right)$$

Note that each feature $x_a$ has its own variance $\sigma_a^2$. However, for a given feature, $\sigma_a^2$ is the same regardless of which class the data point is in.

Consider a logistic regression model as follows. The logistic regression model has parameters $\vec{w}$ and $b$, and the classifier selects the label corresponding to the greater of the following two probabilities:

$$P_{LR}(y = 1 \mid \vec{x}) = \frac{1}{1 + \exp(\vec{w}^\top \vec{x} + b)}, \qquad P_{LR}(y = 0 \mid \vec{x}) = \frac{\exp(\vec{w}^\top \vec{x} + b)}{1 + \exp(\vec{w}^\top \vec{x} + b)}$$

The values of the logistic regression parameters $\vec{w}$ and $b$ are defined as follows in terms of the parameters of the Gaussian Naive Bayes model:

$$w_a = \frac{\mu_{a0} - \mu_{a1}}{\sigma_a^2} \quad \forall a \in \{1, \ldots, d\}, \qquad b = \ln\frac{1 - \pi}{\pi} + \sum_{a=1}^{d} \frac{\mu_{a1}^2 - \mu_{a0}^2}{2\sigma_a^2}$$

We will prove that these two models are identical classifiers, in the sense that logistic regression predicts $y = 1$ if and only if Gaussian Naive Bayes predicts $y = 1$.
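The parameter mapping above can be checked numerically before attempting the proof. The following is a minimal Python sketch, assuming the class-1 prior is passed as `pi` and the per-feature means and standard deviations as plain lists; the helper names (`gnb_to_lr`, `p_lr_y1`, `gaussian_pdf`, `p_nb_y1`) and the parameter values are hypothetical, chosen only for illustration. It computes $\vec{w}$ and $b$ from the GNB parameters, then evaluates $P_{LR}(y = 1 \mid \vec{x})$ and the GNB posterior $P_{NB}(y = 1 \mid \vec{x})$ obtained from Bayes' rule under the Naive Bayes factorization.

```python
import math
from typing import List, Sequence, Tuple


def gnb_to_lr(pi: float, mu0: Sequence[float], mu1: Sequence[float],
              sigma: Sequence[float]) -> Tuple[List[float], float]:
    """Map GNB parameters to logistic regression parameters (w, b)."""
    # w_a = (mu_a0 - mu_a1) / sigma_a^2
    w = [(m0 - m1) / s ** 2 for m0, m1, s in zip(mu0, mu1, sigma)]
    # b = ln((1 - pi) / pi) + sum_a (mu_a1^2 - mu_a0^2) / (2 sigma_a^2)
    b = math.log((1 - pi) / pi) + sum(
        (m1 ** 2 - m0 ** 2) / (2 * s ** 2) for m0, m1, s in zip(mu0, mu1, sigma)
    )
    return w, b


def p_lr_y1(x: Sequence[float], w: Sequence[float], b: float) -> float:
    """P_LR(y = 1 | x) = 1 / (1 + exp(w.x + b))."""
    z = sum(wa * xa for wa, xa in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(z))


def gaussian_pdf(x: float, mu: float, sigma: float) -> float:
    """Density of N(mu, sigma^2) evaluated at x."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))


def p_nb_y1(x: Sequence[float], pi: float, mu0: Sequence[float],
            mu1: Sequence[float], sigma: Sequence[float]) -> float:
    """P_NB(y = 1 | x) via Bayes' rule and the Naive Bayes factorization."""
    joint1, joint0 = pi, 1 - pi  # start from the priors P(y = 1), P(y = 0)
    for xa, m0, m1, s in zip(x, mu0, mu1, sigma):
        # Naive Bayes: P(x | y) = prod_a P(x_a | y)
        joint1 *= gaussian_pdf(xa, m1, s)
        joint0 *= gaussian_pdf(xa, m0, s)
    return joint1 / (joint0 + joint1)


if __name__ == "__main__":
    # Arbitrary illustrative parameters, not taken from the problem.
    pi, mu0, mu1, sigma = 0.3, [0.0, 1.0], [1.5, -0.5], [1.0, 2.0]
    w, b = gnb_to_lr(pi, mu0, mu1, sigma)
    x = [0.8, 0.2]
    print(p_lr_y1(x, w, b), p_nb_y1(x, pi, mu0, mu1, sigma))
```

For these parameters the two printed probabilities should agree up to floating-point rounding. Under this mapping the two models assign the same posterior, not merely the same predicted label, which is stronger than what (1) requires.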
In other words, our overall goal is to show the following:

$$P_{LR}(y = 1 \mid \vec{x}) > P_{LR}(y = 0 \mid \vec{x}) \iff P_{NB}(y = 1 \mid \vec{x}) > P_{NB}(y = 0 \mid \vec{x}) \tag{1}$$

The following problems will guide you through the proof that the above Gaussian Naive Bayes and logistic regression models are equivalent:

1. State the Naive Bayes assumption using an equation involving conditional probabilities.
2. Show that:
   $$\exp(\vec{w}^\top \vec{x} + b) = \frac{P(y = 0)\prod_{a=1}^{d} P(x_a \mid y = 0)}{P(y = 1)\prod_{a=1}^{d} P(x_a \mid y = 1)} = \frac{P_{NB}(\vec{x} \mid y = 0)\,P(y = 0)}{P_{NB}(\vec{x} \mid y = 1)\,P(y = 1)}$$
   This problem may involve some fairly involved algebraic manipulations; this is to be expected. The following hints should assist in the process: manipulate the left-hand side of the equation using the definitions of $\vec{w}$ and $b$ in the logistic regression model until you are able to use the Gaussian Naive Bayes formulas for $P(x_a \mid y = 0)$ and $P(x_a \mid y = 1)$. You may find the following factoring identity helpful:
   $$2a(b - c) + c^2 - b^2 = (a - c)^2 - (a - b)^2 \tag{2}$$
3. Show that if we assume
   $$\exp(\vec{w}^\top \vec{x} + b) = \frac{P_{NB}(\vec{x} \mid y = 0)\,P(y = 0)}{P_{NB}(\vec{x} \mid y = 1)\,P(y = 1)}$$
   then we also have that
   $$P_{LR}(y = 1 \mid \vec{x}) > P_{LR}(y = 0 \mid \vec{x}) \iff P_{NB}(y = 1 \mid \vec{x}) > P_{NB}(y = 0 \mid \vec{x})$$
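Identity (2) and the claims in steps 2 and 3 can be sanity-checked numerically on random inputs. The Python sketch below is an illustration under stated assumptions, not a proof: the helper names (`identity_2`, `log_gaussian`, `step2_log_sides`) are hypothetical, and the comparison is done in log space (comparing $\vec{w}^\top \vec{x} + b$ with the log of the Naive Bayes ratio) to avoid overflow in the exponential. Note that $a, b, c$ in (2) are generic symbols; with $a = x_a$, $b = \mu_{a0}$, $c = \mu_{a1}$, the $a$-th term of $\vec{w}^\top \vec{x} + b$ becomes $\frac{(x_a - \mu_{a1})^2 - (x_a - \mu_{a0})^2}{2\sigma_a^2}$, which the code compares against $\log P(x_a \mid y = 0) - \log P(x_a \mid y = 1)$.

```python
import math
import random
from typing import Sequence, Tuple


def identity_2(a: float, b: float, c: float) -> Tuple[float, float]:
    """Both sides of identity (2): 2a(b - c) + c^2 - b^2 = (a - c)^2 - (a - b)^2."""
    return 2 * a * (b - c) + c ** 2 - b ** 2, (a - c) ** 2 - (a - b) ** 2


def log_gaussian(x: float, mu: float, sigma: float) -> float:
    """log N(x; mu, sigma^2)."""
    return -((x - mu) ** 2) / (2 * sigma ** 2) - math.log(sigma * math.sqrt(2 * math.pi))


def step2_log_sides(x: Sequence[float], pi: float, mu0: Sequence[float],
                    mu1: Sequence[float], sigma: Sequence[float]) -> Tuple[float, float]:
    """Return (w.x + b, log of the Naive Bayes ratio) for the step 2 comparison."""
    lhs = math.log((1 - pi) / pi)          # the ln((1 - pi) / pi) part of b
    rhs = math.log(1 - pi) - math.log(pi)  # log P(y = 0) - log P(y = 1)
    for xa, m0, m1, s in zip(x, mu0, mu1, sigma):
        # w_a * x_a plus this feature's share of the sum in b
        lhs += (m0 - m1) / s ** 2 * xa + (m1 ** 2 - m0 ** 2) / (2 * s ** 2)
        # log P(x_a | y = 0) - log P(x_a | y = 1); normalizers cancel
        rhs += log_gaussian(xa, m0, s) - log_gaussian(xa, m1, s)
    return lhs, rhs


if __name__ == "__main__":
    rng = random.Random(0)
    d = 3
    pi = rng.uniform(0.05, 0.95)
    mu0 = [rng.uniform(-3, 3) for _ in range(d)]
    mu1 = [rng.uniform(-3, 3) for _ in range(d)]
    sigma = [rng.uniform(0.5, 2.0) for _ in range(d)]
    x = [rng.uniform(-5, 5) for _ in range(d)]
    lhs, rhs = step2_log_sides(x, pi, mu0, mu1, sigma)
    # Step 3: LR predicts y = 1 iff w.x + b < 0 (i.e. exp(w.x + b) < 1);
    # NB predicts y = 1 iff the log ratio < 0. Equal sides give equal decisions.
    print(lhs, rhs, lhs < 0, rhs < 0)
```

The decision rule in the final comment follows from the definitions: $P_{LR}(y = 1 \mid \vec{x}) > P_{LR}(y = 0 \mid \vec{x})$ exactly when $\exp(\vec{w}^\top \vec{x} + b) < 1$, and dividing both joint probabilities by $P(\vec{x})$ leaves the Naive Bayes comparison unchanged.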

Expert Answer:


To show that Gaussian Naive Bayes (GNB) produces the same model as logistic regression when the Naive Bayes assumptions hold, we will compare the express...

Related Book For

Artificial Intelligence A Modern Approach

ISBN: 9780134610993

4th Edition

Authors: Stuart Russell, Peter Norvig

