Question:

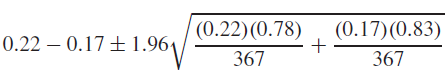

In a certain year, there were 80 days with measurable snowfall in Denver, and 63 days with measurable snowfall in Chicago. A meteorologist computes (80 + 1)/(365 + 2) = 0.22, (63 + 1)/(365 + 2) = 0.17, and proposes to compute a 95% confidence interval for the difference between the proportions of snowy days in the two cities as follows:

Is this a valid confidence interval? Explain.
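
The arithmetic in the question can be checked with a short script. This is only a sketch: the interval formula itself is not reproduced in the question text above, so the code assumes the standard adjusted interval for a difference of two proportions, which adds 1 to each count and 2 to each sample size and uses z = 1.96 for 95% confidence. The function names are illustrative. That formula presumes two independent samples of independent trials, and whether daily snowfall records meet that condition is what the question asks you to judge.

```python
import math

def adjusted_proportion(successes, trials):
    # Adjusted estimate used in the question: (x + 1) / (n + 2)
    return (successes + 1) / (trials + 2)

def adjusted_diff_ci(x1, n1, x2, n2, z=1.96):
    # Assumed formula (not shown in the question text):
    # (p1 - p2) +/- z * sqrt(p1(1-p1)/(n1+2) + p2(1-p2)/(n2+2))
    p1 = adjusted_proportion(x1, n1)
    p2 = adjusted_proportion(x2, n2)
    se = math.sqrt(p1 * (1 - p1) / (n1 + 2) + p2 * (1 - p2) / (n2 + 2))
    diff = p1 - p2
    return p1, p2, diff - z * se, diff + z * se

# Denver: 80 snowy days out of 365; Chicago: 63 out of 365
p_denver, p_chicago, low, high = adjusted_diff_ci(80, 365, 63, 365)
print(round(p_denver, 2), round(p_chicago, 2))  # 0.22 0.17
print(round(low, 3), round(high, 3))
```

The first printed line reproduces the meteorologist's rounded values. The second gives the endpoints that the assumed formula would produce, which says nothing about whether the assumptions behind it hold.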

