Question: 1.2 (17 points) Derive Gradient Given a training dataset Straining = [(x,y),i = 1...... we wish to optimize the negative log-likelihood loss Ciw.b) of the

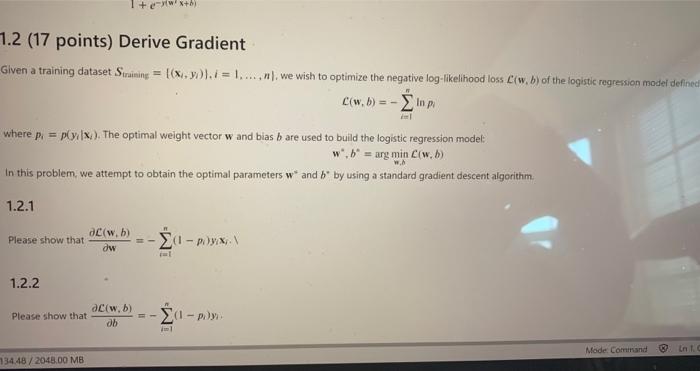

1.2 (17 points) Derive Gradient Given a training dataset Straining = [(x,y),i = 1...... we wish to optimize the negative log-likelihood loss Ciw.b) of the logistic regression model defined C(w, 6) =- where p = pylx). The optimal weight vector w and bias b are used to build the logistic regression model w , b = arg min C(w.) In this problem we attempt to obtain the optimal parameters wand b* by using a standard gradient descent algorithm 1.2.1 ac(w.b) Please show that w 1 - )yx, . 1.2.2 Please show that aciw.b) db 1 - )y). Mode Comirand int 134.48 / 2048.00 MB 1.2 (17 points) Derive Gradient Given a training dataset Straining = [(x,y),i = 1...... we wish to optimize the negative log-likelihood loss Ciw.b) of the logistic regression model defined C(w, 6) =- where p = pylx). The optimal weight vector w and bias b are used to build the logistic regression model w , b = arg min C(w.) In this problem we attempt to obtain the optimal parameters wand b* by using a standard gradient descent algorithm 1.2.1 ac(w.b) Please show that w 1 - )yx, . 1.2.2 Please show that aciw.b) db 1 - )y). Mode Comirand int 134.48 / 2048.00 MB

Step by Step Solution

There are 3 Steps involved in it

Get step-by-step solutions from verified subject matter experts