2. (35 points) Effect of constraints on optimal solutions. A key result that optimal classifiers pick...

Fantastic news! We've Found the answer you've been seeking!

Question:

Transcribed Image Text:

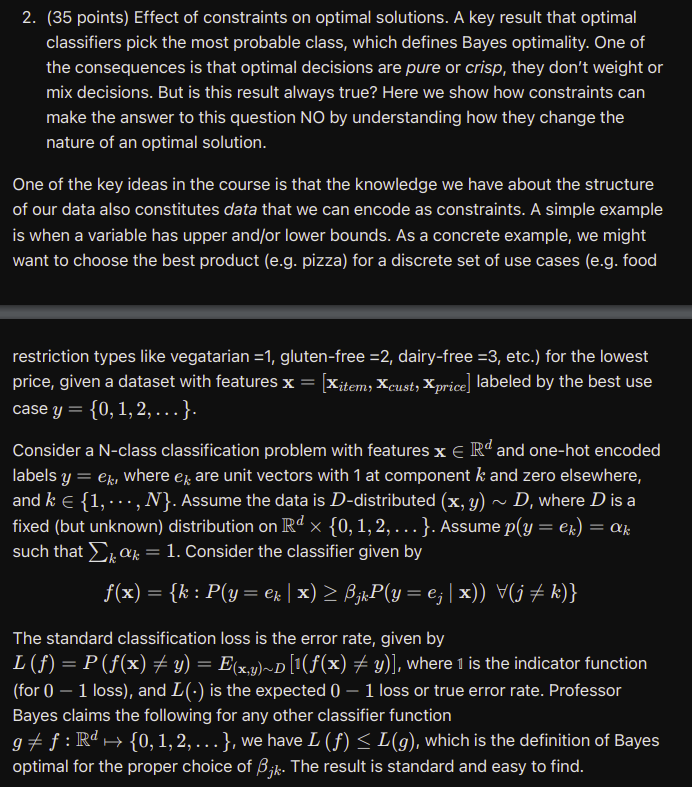

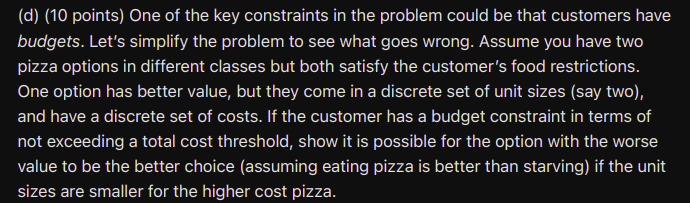

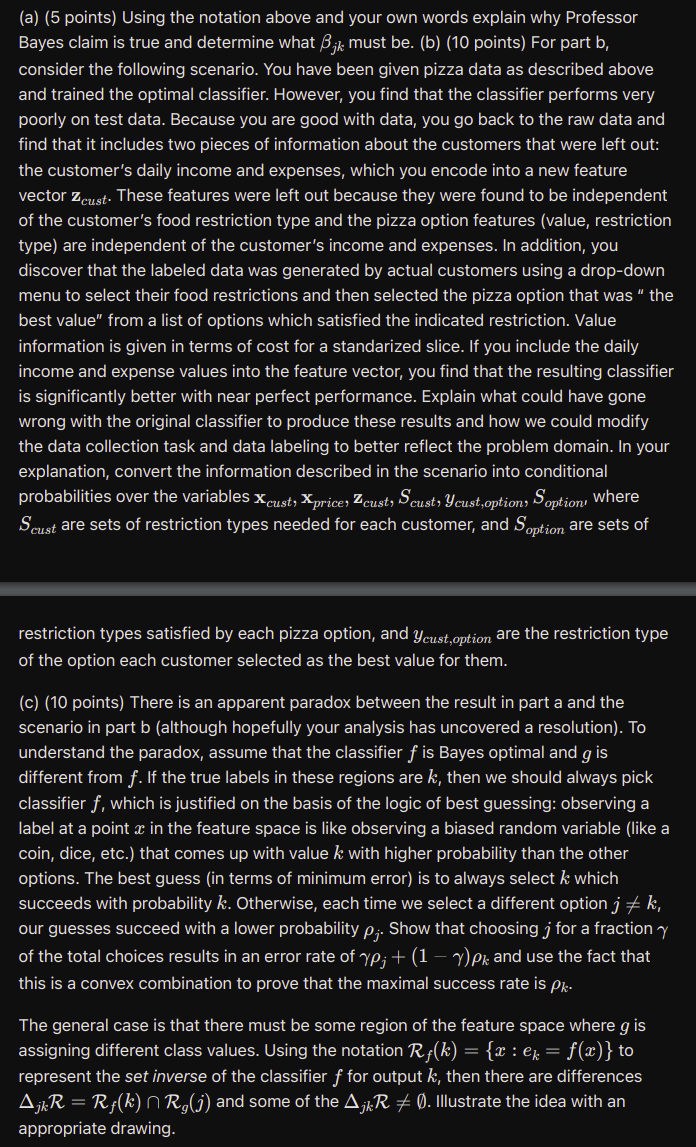

2. (35 points) Effect of constraints on optimal solutions. A key result that optimal classifiers pick the most probable class, which defines Bayes optimality. One of the consequences is that optimal decisions are pure or crisp, they don't weight or mix decisions. But is this result always true? Here we show how constraints can make the answer to this question NO by understanding how they change the nature of an optimal solution. One of the key ideas in the course is that the knowledge we have about the structure of our data also constitutes data that we can encode as constraints. A simple example is when a variable has upper and/or lower bounds. As a concrete example, we might want to choose the best product (e.g. pizza) for a discrete set of use cases (e.g. food restriction types like vegatarian =1, gluten-free =2, dairy-free =3, etc.) for the lowest price, given a dataset with features x = [Xitem, Xcust, Xprice] labeled by the best use case y = {0, 1, 2, . }. Consider a N-class classification problem with features x Rd and one-hot encoded labels y = ek, where ex are unit vectors with 1 at component k and zero elsewhere, and k = {1, ..., N}. Assume the data is D-distributed (x, y) ~ D, where D is a fixed (but unknown) distribution on Rd {0, 1, 2, }. Assume p(y = ek) = k such that kak = 1. Consider the classifier given by f(x) = {k : P(y = k | x) jkP(y = ej | x)) V(j k)} The standard classification loss is the error rate, given by L () = P (f(x) y) = E(x,y)~D [1(f(x) y)], where 1 is the indicator function (for 0 - 1 loss), and L(.) is the expected 0 - 1 loss or true error rate. Professor Bayes claims the following for any other classifier function g: Rd {0, 1, 2, ... }, we have L () L (g), which is the definition of Bayes optimal for the proper choice of Bk. The result is standard and easy to find. (d) (10 points) One of the key constraints in the problem could be that customers have budgets. Let's simplify the problem to see what goes wrong. Assume you have two pizza options in different classes but both satisfy the customer's food restrictions. One option has better value, but they come in a discrete set of unit sizes (say two), and have a discrete set of costs. If the customer has a budget constraint in terms of not exceeding a total cost threshold, show it is possible for the option with the worse value to be the better choice (assuming eating pizza is better than starving) if the unit sizes are smaller for the higher cost pizza. (a) (5 points) Using the notation above and your own words explain why Professor Bayes claim is true and determine what 3jk must be. (b) (10 points) For part b, consider the following scenario. You have been given pizza data as described above and trained the optimal classifier. However, you find that the classifier performs very poorly on test data. Because you are good with data, you go back to the raw data and find that it includes two pieces of information about the customers that were left out: the customer's daily income and expenses, which you encode into a new feature vector Zcust. These features were left out because they were found to be independent of the customer's food restriction type and the pizza option features (value, restriction type) are independent of the customer's income and expenses. In addition, you discover that the labeled data was generated by actual customers using a drop-down menu to select their food restrictions and then selected the pizza option that was "the best value" from a list of options which satisfied the indicated restriction. Value information is given in terms of cost for a standarized slice. If you include the daily income and expense values into the feature vector, you find that the resulting classifier is significantly better with near perfect performance. Explain what could have gone wrong with the original classifier to produce these results and how we could modify the data collection task and data labeling to better reflect the problem domain. In your explanation, convert the information described in the scenario into conditional probabilities over the variables Xcust, Xprice, Zeust, Scust, Ycust,option, Soption, where Scust are sets of restriction types needed for each customer, and Soption are sets of restriction types satisfied by each pizza option, and Ycust,option are the restriction type of the option each customer selected as the best value for them. (c) (10 points) There is an apparent paradox between the result in part a and the scenario in part b (although hopefully your analysis has uncovered a resolution). To understand the paradox, assume that the classifier f is Bayes optimal and g is different from f. If the true labels in these regions are k, then we should always pick classifier f, which is justified on the basis of the logic of best guessing: observing a label at a point x in the feature space is like observing a biased random variable (like a coin, dice, etc.) that comes up with value k with higher probability than the other options. The best guess (in terms of minimum error) is to always select k which succeeds with probability k. Otherwise, each time we select a different option j k, our guesses succeed with a lower probability p;. Show that choosing j for a fraction y of the total choices results in an error rate of yp; + (1 -y)P and use the fact that this is a convex combination to prove that the maximal success rate is Pk. The general case is that there must be some region of the feature space where g is assigning different class values. Using the notation R(k) = {x : k = f(x)} to represent the set inverse of the classifier f for output k, then there are differences AjkR = R(k) | R,(j) and some of the A/R 0. Illustrate the idea with an appropriate drawing. 2. (35 points) Effect of constraints on optimal solutions. A key result that optimal classifiers pick the most probable class, which defines Bayes optimality. One of the consequences is that optimal decisions are pure or crisp, they don't weight or mix decisions. But is this result always true? Here we show how constraints can make the answer to this question NO by understanding how they change the nature of an optimal solution. One of the key ideas in the course is that the knowledge we have about the structure of our data also constitutes data that we can encode as constraints. A simple example is when a variable has upper and/or lower bounds. As a concrete example, we might want to choose the best product (e.g. pizza) for a discrete set of use cases (e.g. food restriction types like vegatarian =1, gluten-free =2, dairy-free =3, etc.) for the lowest price, given a dataset with features x = [Xitem, Xcust, Xprice] labeled by the best use case y = {0, 1, 2, . }. Consider a N-class classification problem with features x Rd and one-hot encoded labels y = ek, where ex are unit vectors with 1 at component k and zero elsewhere, and k = {1, ..., N}. Assume the data is D-distributed (x, y) ~ D, where D is a fixed (but unknown) distribution on Rd {0, 1, 2, }. Assume p(y = ek) = k such that kak = 1. Consider the classifier given by f(x) = {k : P(y = k | x) jkP(y = ej | x)) V(j k)} The standard classification loss is the error rate, given by L () = P (f(x) y) = E(x,y)~D [1(f(x) y)], where 1 is the indicator function (for 0 - 1 loss), and L(.) is the expected 0 - 1 loss or true error rate. Professor Bayes claims the following for any other classifier function g: Rd {0, 1, 2, ... }, we have L () L (g), which is the definition of Bayes optimal for the proper choice of Bk. The result is standard and easy to find. (d) (10 points) One of the key constraints in the problem could be that customers have budgets. Let's simplify the problem to see what goes wrong. Assume you have two pizza options in different classes but both satisfy the customer's food restrictions. One option has better value, but they come in a discrete set of unit sizes (say two), and have a discrete set of costs. If the customer has a budget constraint in terms of not exceeding a total cost threshold, show it is possible for the option with the worse value to be the better choice (assuming eating pizza is better than starving) if the unit sizes are smaller for the higher cost pizza. (a) (5 points) Using the notation above and your own words explain why Professor Bayes claim is true and determine what 3jk must be. (b) (10 points) For part b, consider the following scenario. You have been given pizza data as described above and trained the optimal classifier. However, you find that the classifier performs very poorly on test data. Because you are good with data, you go back to the raw data and find that it includes two pieces of information about the customers that were left out: the customer's daily income and expenses, which you encode into a new feature vector Zcust. These features were left out because they were found to be independent of the customer's food restriction type and the pizza option features (value, restriction type) are independent of the customer's income and expenses. In addition, you discover that the labeled data was generated by actual customers using a drop-down menu to select their food restrictions and then selected the pizza option that was "the best value" from a list of options which satisfied the indicated restriction. Value information is given in terms of cost for a standarized slice. If you include the daily income and expense values into the feature vector, you find that the resulting classifier is significantly better with near perfect performance. Explain what could have gone wrong with the original classifier to produce these results and how we could modify the data collection task and data labeling to better reflect the problem domain. In your explanation, convert the information described in the scenario into conditional probabilities over the variables Xcust, Xprice, Zeust, Scust, Ycust,option, Soption, where Scust are sets of restriction types needed for each customer, and Soption are sets of restriction types satisfied by each pizza option, and Ycust,option are the restriction type of the option each customer selected as the best value for them. (c) (10 points) There is an apparent paradox between the result in part a and the scenario in part b (although hopefully your analysis has uncovered a resolution). To understand the paradox, assume that the classifier f is Bayes optimal and g is different from f. If the true labels in these regions are k, then we should always pick classifier f, which is justified on the basis of the logic of best guessing: observing a label at a point x in the feature space is like observing a biased random variable (like a coin, dice, etc.) that comes up with value k with higher probability than the other options. The best guess (in terms of minimum error) is to always select k which succeeds with probability k. Otherwise, each time we select a different option j k, our guesses succeed with a lower probability p;. Show that choosing j for a fraction y of the total choices results in an error rate of yp; + (1 -y)P and use the fact that this is a convex combination to prove that the maximal success rate is Pk. The general case is that there must be some region of the feature space where g is assigning different class values. Using the notation R(k) = {x : k = f(x)} to represent the set inverse of the classifier f for output k, then there are differences AjkR = R(k) | R,(j) and some of the A/R 0. Illustrate the idea with an appropriate drawing.

Expert Answer:

Related Book For

Posted Date:

Students also viewed these algorithms questions

-

Planning is one of the most important management functions in any business. A front office managers first step in planning should involve determine the departments goals. Planning also includes...

-

QUIZ... Let D be a poset and let f : D D be a monotone function. (i) Give the definition of the least pre-fixed point, fix (f), of f. Show that fix (f) is a fixed point of f. [5 marks] (ii) Show that...

-

A billboard at the top of a building is being illuminated by a projection light (L) that is 103 feet from the base of the building as shown in the figure. Determine how tall the billboard if its...

-

Jefferson Animal Rescue is a private not-for-profit clinic and shelter for abandoned domesticated animals, chiefly dogs and cats. At the end of 2016, the organization had the following account...

-

John Smith and Jane Brody are assistant portfolio managers. The senior portfolio manager has asked them to consider the acquisition of one of two option-free bond issues with the following...

-

Why is bacterial decomposition important?

-

Homeport uses flexible budgets that are based on the following data: Prepare a flexible selling and administrative expenses budget for March 2013 for sales volumes of $400,000, $600,000, and$800,000....

-

The cost of debt for firm XYZ is 6%.It's tax rate is 40%. The cost of retained earnings is 12% and the cost of external common equity is 14%. Retained earnings is $5000. The target capital structure...

-

H ALL 1 2 3 2. Simple Matrix Summation Given an n x m matrix, a, where each a(i, j) is the value of the cell at the intersection of row i and column j, create another n x m matrix, b, using the...

-

The basket of goods representing the CPI was valued at 30,000 in the reference year of 2020. In 2021 the price of this basket increased to 3200. If the CPI was set at 100 in 2020 what was the value...

-

Explain, using relevant legislation and caselaw, contracting with a company. Detail what precautions you would advise anyone dealing with contracting a company to take to protect themselves

-

Fewer workers are in traditional, full-time stable jobs while a growing number are in so-called contingent jobs, such as temporary or on-call positions, or are treated as independent...

-

Under fixed-interval lot sizing, order sizes for component parts are determined based on which one of the following? Gross requirements - net requirements for a predetermined number of periods Net...

-

Should technical violations of probation and parole be used as a cause to re-incarcerate someone? Please justify your position and defend your answers with facts/data/research.

-

When starting a business there are various business structures that can be used. Identify the key legal features of a corporation and describe the advantages and disadvantages of a corporation for...

-

If a disease is said to be sex-linked, what pair of chromosomes must contain the gene responsible for the disease? 46th pair 21st pair 23rd pair 22nd pair

-

The following items were displayed in the statement of affairs for Lubbock Company: Fully secured liabilities ......... $90,000 Partially secured liabilities ....... 12,000 Unsecured liabilities...

-

Consider Problem 1, Set 3.6a. (a) Determine the optimality condition for CA/CB that will keep the optimum unchanged. (b) Determine the optimality ranges for CA and CB, assuming that the other...

-

Consider the following LP model: Maximize z = 5x1 + 2x2 + 3x3 Subject to X1 + 5x2 + 2x3 b1 X1 - 5x2 - 6x3 b2 X1, x2, x3 0 The following optimal tableau corresponds to specific values of b1 and b2:...

-

The Burroughs Garment Company manufactures men's shirts and women's blouses for Walmark Discount Stores. Walmark will accept all the production supplied by Burroughs. The production process includes...

-

How can environmental agents that do not cause gene mutations contribute to cancer?

-

An individual has the genotype Aa Bb Cc and makes an abnormal gamete with the genotype AaBc. Does this gamete violate the law of independent assortment or the law of segregation (or both)? Explain...

-

Marfan syndrome is a rare inherited human disorder characterized by unusually long limbs and digits plus defects in the heart (especially the aorta) and the eyes, among other symptoms. Following is a...

Study smarter with the SolutionInn App