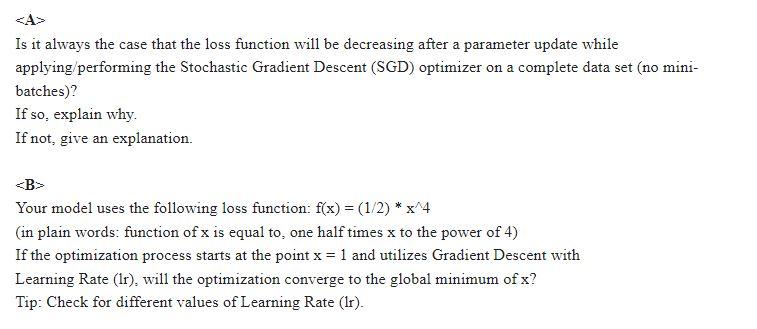

Question: Is it always the case that the loss function decreases after a parameter update when applying the Stochastic Gradient Descent (SGD) optimizer?

A. Is it always the case that the loss function decreases after a parameter update when applying the Stochastic Gradient Descent (SGD) optimizer on the complete data set (no minibatches)? If so, explain why. If not, give an explanation.

B. Your model uses the following loss function: f(x) = (1/2)x^4 (in plain words: the function of x equals one half times x to the power of 4). If the optimization process starts at the point x = 1 and uses Gradient Descent with learning rate lr, will the optimization converge to the global minimum of f?

Tip: Check different values of the learning rate (lr).
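One way to follow the tip is to simulate the update numerically. The sketch below is only an illustration, not a posted solution: it assumes plain gradient descent with the update x ← x − lr·f'(x), where f'(x) = 2x^3, and the function name `gradient_descent`, the step count, and the `blowup` cutoff are hypothetical choices made for this example.

```python
def gradient_descent(lr, x0=1.0, steps=50, blowup=1e6):
    """Run plain gradient descent on f(x) = 0.5 * x**4 and return all iterates.

    The blowup cutoff is an illustrative safeguard (an assumption of this
    sketch): iteration stops once |x| exceeds it, so diverging runs end early.
    """
    xs = [x0]
    x = x0
    for _ in range(steps):
        grad = 2.0 * x * x * x  # f'(x) = 2x^3
        x = x - lr * grad       # gradient descent update
        xs.append(x)
        if abs(x) > blowup:
            break
    return xs


if __name__ == "__main__":
    # Learning rates chosen to bracket the behaviours worth comparing.
    for lr in (0.1, 0.5, 0.9, 1.0, 1.1):
        xs = gradient_descent(lr)
        print(f"lr={lr}: steps taken={len(xs) - 1}, final x={xs[-1]:.6g}")
```

Under this update rule the first step from x = 1 lands on 1 − 2·lr. For 0 < lr < 1 the iterates shrink toward the minimum at x = 0, for lr = 1 they bounce between 1 and −1 forever, and for lr > 1 they grow without bound. The last two cases also bear on part A: even with the full gradient, a step that is too large can leave the loss unchanged or increase it.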
