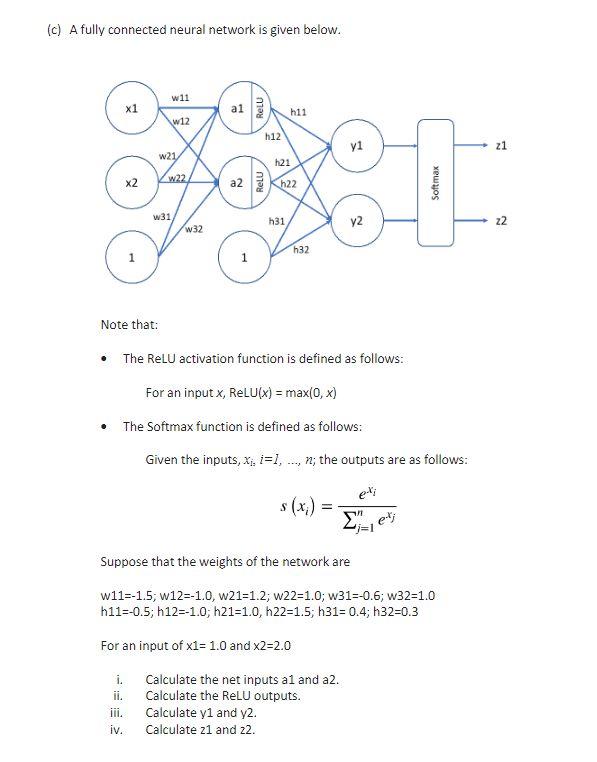

(c) A fully connected neural network is given below. Note that:

- The ReLU activation function is defined as follows: for an input $x$, $\mathrm{ReLU}(x) = \max(0, x)$.
- The Softmax function is defined as follows: given the inputs $x_i$, $i = 1, \dots, n$, the outputs are $s(x_i) = \dfrac{e^{x_i}}{\sum_{j=1}^{n} e^{x_j}}$.

Suppose that the weights of the network are:

- $w_{11} = 1.5$, $w_{12} = 1.0$, $w_{21} = 1.2$, $w_{22} = 1.0$, $w_{31} = 0.6$, $w_{32} = 1.0$
- $h_{11} = 0.5$, $h_{12} = 1.0$, $h_{21} = 1.0$, $h_{22} = 1.5$, $h_{31} = 0.4$, $h_{32} = 0.3$

The input values are 1.0 for input 1 and 2.0 for input 2.
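A minimal Python sketch of how the two definitions could be applied to these numbers. The network diagram is not reproduced in the text, so the layout used here is an assumption, not something the question confirms: two inputs, three hidden units with ReLU where the w weight with indices (i, j) connects input j to hidden unit i, and two Softmax outputs where the h weight with indices (i, j) connects hidden unit i to output j, with no bias terms. The names `W`, `H`, `hidden`, `logits`, and `probs` are illustrative only.

```python
import math

def relu(x):
    """ReLU(x) = max(0, x)."""
    return max(0.0, x)

def softmax(xs):
    """s(x_i) = exp(x_i) / sum_j exp(x_j)."""
    m = max(xs)  # subtracting the max leaves the result unchanged but avoids overflow
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

# ASSUMPTION: W[i][j] is the weight from input j+1 to hidden unit i+1.
W = [[1.5, 1.0],
     [1.2, 1.0],
     [0.6, 1.0]]

# ASSUMPTION: H[i][j] is the weight from hidden unit i+1 to output j+1.
H = [[0.5, 1.0],
     [1.0, 1.5],
     [0.4, 0.3]]

x = [1.0, 2.0]  # input 1 and input 2 from the question

# Hidden layer: weighted sum of the inputs, then ReLU (no bias, by assumption).
hidden = [relu(sum(w * xi for w, xi in zip(row, x))) for row in W]

# Output layer: weighted sum of the hidden activations, then Softmax.
logits = [sum(H[i][j] * hidden[i] for i in range(len(hidden)))
          for j in range(len(H[0]))]
probs = softmax(logits)

print("hidden:", hidden)
print("logits:", logits)
print("probs: ", probs)
```

If the figure wires the layers differently (for example, with the index order swapped or with bias terms), only `W`, `H`, and the two weighted sums need to change; `relu` and `softmax` follow the definitions in the question directly.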
