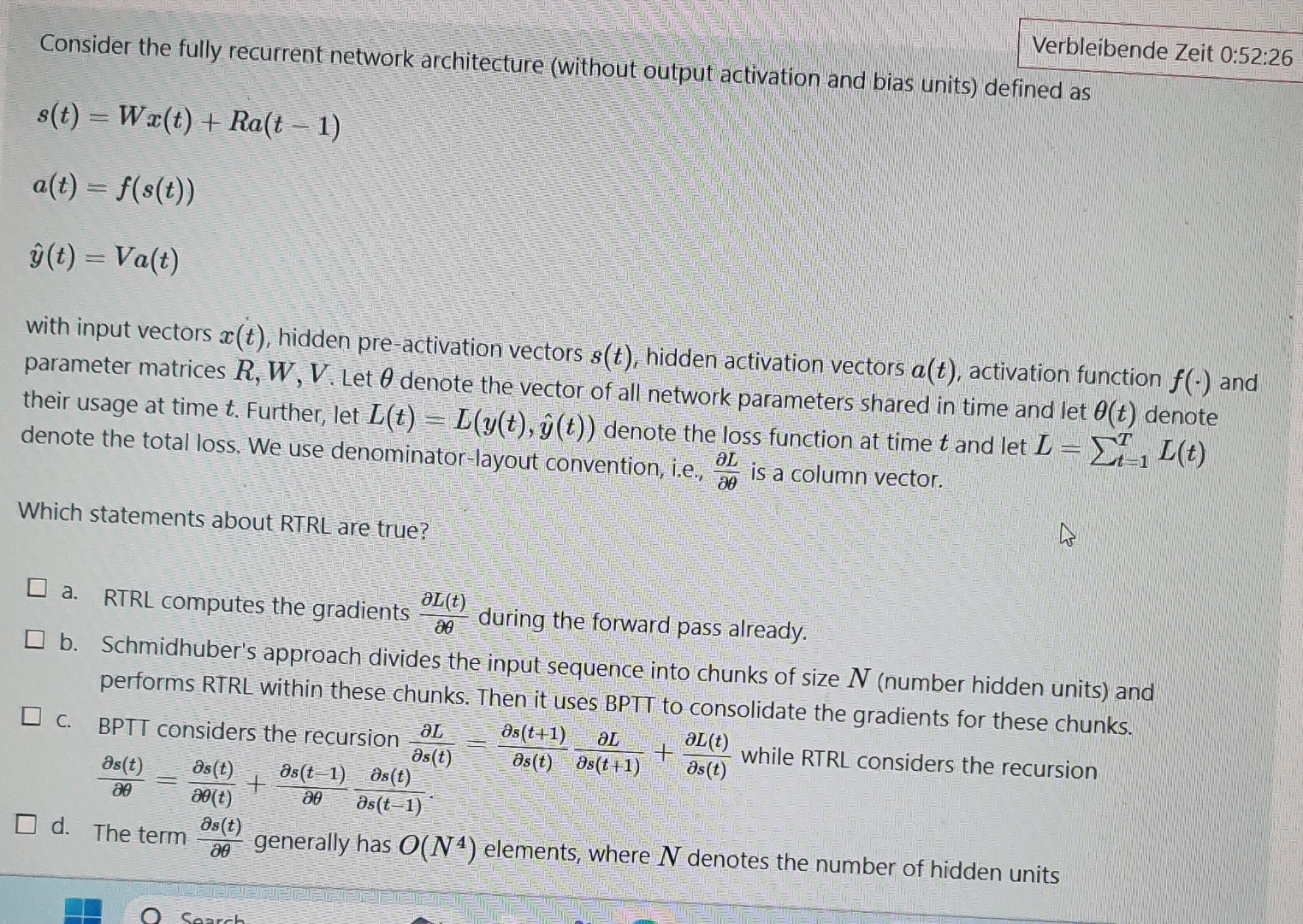

Question: Consider the fully recurrent network architecture ( without output activation and bias units ) defined as s ( t ) = W x ( t

Consider the fully recurrent network architecture without output activation and bias units defined as

hat

with input vectors hidden preactivation vectors hidden activation vectors activation function and parameter matrices Let denote the vector of all network parameters shared in time and let denote their usage at time Further, let hat denote the loss function at time and let denote the total loss. We use denominatorlayout convention, ie is a column vector.

Which statements about RTRL are true?

a RTRL computes the gradients during the forward pass already.

b Schmidhuber's approach divides the input sequence into chunks of size number hidden units and performs RTRL within these chunks. Then it uses BPTT to consolidate the gradients for these chunks.

c BPTT considers the recursion while RTRL considers the recursion

d The term generally has elements, where denotes the number of hidden units

Step by Step Solution

There are 3 Steps involved in it

1 Expert Approved Answer

Step: 1 Unlock

Question Has Been Solved by an Expert!

Get step-by-step solutions from verified subject matter experts

Step: 2 Unlock

Step: 3 Unlock