Consider the reinforcement learning problem posed by the gridworld example shown in Figure 5, and assume...


Question:

Transcribed Image Text:

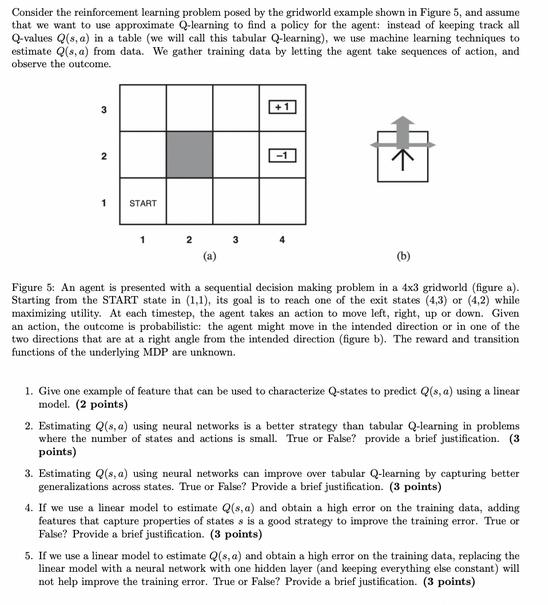

Consider the reinforcement learning problem posed by the gridworld example shown in Figure 5, and assume that we want to use approximate Q-learning to find a policy for the agent: instead of keeping track of all Q-values Q(s, a) in a table (we will call this tabular Q-learning), we use machine learning techniques to estimate Q(s, a) from data. We gather training data by letting the agent take sequences of actions and observing the outcomes.

Figure 5: An agent is presented with a sequential decision-making problem in a 4x3 gridworld (figure a). Starting from the START state in (1,1), its goal is to reach one of the exit states (4,3) or (4,2) while maximizing utility. At each timestep, the agent takes an action to move left, right, up or down. Given an action, the outcome is probabilistic: the agent might move in the intended direction or in one of the two directions that are at a right angle from the intended direction (figure b). The reward and transition functions of the underlying MDP are unknown.

1. Give one example of a feature that can be used to characterize Q-states to predict Q(s, a) using a linear model. (2 points)
2. Estimating Q(s, a) using neural networks is a better strategy than tabular Q-learning in problems where the number of states and actions is small. True or False? Provide a brief justification. (3 points)
3. Estimating Q(s, a) using neural networks can improve over tabular Q-learning by capturing better generalizations across states. True or False? Provide a brief justification. (3 points)
4. If we use a linear model to estimate Q(s, a) and obtain a high error on the training data, adding features that capture properties of states s is a good strategy to improve the training error. True or False? Provide a brief justification. (3 points)
5. If we use a linear model to estimate Q(s, a) and obtain a high error on the training data, replacing the linear model with a neural network with one hidden layer (and keeping everything else constant) will not help improve the training error. True or False? Provide a brief justification. (3 points)
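To make the setting concrete, here is a minimal sketch of approximate Q-learning with a linear model, Q(s, a) = w · f(s, a). Everything beyond the grid size and the exit states is an assumption for illustration: the feature vector (a bias term plus the scaled Manhattan distance from the intended next cell to the nearest exit), the function names, and the learning rate and discount values are hypothetical, and any walls or obstacles in the figure are ignored.

```python
import numpy as np

# Grid geometry taken from the problem statement: 4 columns, 3 rows.
ACTIONS = {"up": (0, 1), "down": (0, -1), "left": (-1, 0), "right": (1, 0)}
EXITS = [(4, 3), (4, 2)]


def features(state, action):
    """Hypothetical feature vector f(s, a) for a Q-state (assumption, not from the source)."""
    x, y = state
    dx, dy = ACTIONS[action]
    # Intended next cell, clipped to the 4x3 grid (obstacles are ignored in this sketch).
    nx = min(max(x + dx, 1), 4)
    ny = min(max(y + dy, 1), 3)
    # Manhattan distance to the nearest exit; it is at most 4 on this grid.
    dist = min(abs(nx - ex) + abs(ny - ey) for ex, ey in EXITS)
    return np.array([1.0, dist / 4.0])  # bias term + scaled distance


def q_value(w, state, action):
    """Linear estimate Q(s, a) = w . f(s, a)."""
    return float(w @ features(state, action))


def update(w, state, action, reward, next_state, terminal, alpha=0.5, gamma=0.9):
    """One approximate Q-learning step on an observed transition (s, a, r, s')."""
    if terminal:
        target = reward
    else:
        target = reward + gamma * max(q_value(w, next_state, a) for a in ACTIONS)
    difference = target - q_value(w, state, action)
    # Each weight moves in proportion to its feature's activation.
    return w + alpha * difference * features(state, action)


# Usage: one observed transition into an exit with an assumed reward of 1.
w = np.zeros(2)
w = update(w, (3, 3), "right", reward=1.0, next_state=(4, 3), terminal=True)
```

Because the weights are shared across all Q-states, a single observed transition changes the estimate for every state-action pair with a nonzero feature value, which is the kind of generalization a table cannot provide.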

Expert Answer:


The image presents a reinforcement learning scenario involving an agent in a 4x3 gridworld. The agent's goal is to reach one of the exit states, which carry rewards of +1 or -1, starting from the st...
