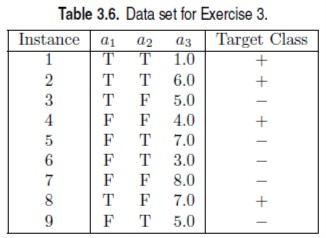


Consider the training examples shown in Table 3.6 for a binary classification problem.


1. What is the entropy of this collection of training examples with respect to the class attribute?
2. What are the information gains of a1 and a2 relative to these training examples?
3. For a3, which is a continuous attribute, compute the information gain for every possible split.
4. What is the best split (among a1, a2, and a3) according to the information gain?
5. What is the best split (between a1 and a2) according to the misclassification error rate?
6. What is the best split (between a1 and a2) according to the Gini index?
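The impurity measures named in the question can be sketched in Python as below. This is a minimal sketch assuming the standard definitions (entropy in bits, Gini index as 1 minus the sum of squared class probabilities, misclassification error as 1 minus the largest class probability). The function names and the toy labels are illustrative assumptions, not part of the exercise.

```python
from collections import Counter
from math import log2


def class_probs(labels):
    """Relative frequency of each class that occurs in `labels`."""
    n = len(labels)
    return [count / n for count in Counter(labels).values()]


def entropy(labels):
    """Entropy in bits: -sum(p * log2(p)) over the classes present."""
    return -sum(p * log2(p) for p in class_probs(labels))


def gini(labels):
    """Gini index: 1 - sum(p^2)."""
    return 1.0 - sum(p * p for p in class_probs(labels))


def misclassification_error(labels):
    """Misclassification error: 1 - max(p)."""
    return 1.0 - max(class_probs(labels))


def weighted_impurity(groups, impurity):
    """Average impurity of the child groups, weighted by group size."""
    total = sum(len(g) for g in groups)
    return sum(len(g) / total * impurity(g) for g in groups if g)


def gain(parent, groups, impurity=entropy):
    """Impurity of the parent minus the weighted impurity of its children.

    With impurity=entropy this is the information gain.
    """
    return impurity(parent) - weighted_impurity(groups, impurity)


# Hypothetical toy labels, NOT the labels of Table 3.6.
toy_parent = ["+", "+", "-", "-"]
toy_children = [["+", "+"], ["-", "-"]]

info_gain = gain(toy_parent, toy_children)                # entropy-based gain
gini_gain = gain(toy_parent, toy_children, impurity=gini)
error_gain = gain(toy_parent, toy_children, impurity=misclassification_error)
```

To compare a1 and a2, one would group the class labels by attribute value (T or F) and pass the two groups to `gain` with the chosen impurity function. The split with the larger gain is preferred under that measure.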

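Table 3.6. Data set for Exercise 3.

| Instance | a1 | a2 | a3 | Target Class |
|---|---|---|---|---|
| 1 | T | T | 1.0 | (missing) |
| 2 | T | T | 6.0 | (missing) |
| 3 | T | F | 5.0 | (missing) |
| 4 | F | F | 4.0 | (missing) |
| 5 | F | T | 7.0 | (missing) |
| 6 | F | T | 3.0 | (missing) |
| 7 | F | F | 8.0 | (missing) |
| 8 | T | F | 7.0 | (missing) |
| 9 | F | T | 5.0 | (missing) |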
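For the continuous attribute a3, "every possible split" is commonly taken to mean one threshold between each pair of adjacent distinct values. The sketch below assumes that midpoint convention and reads the first a3 entry as 1.0. The helper names are hypothetical, and instance numbers stand in for the class labels.

```python
# a3 values for instances 1..9, in table order (first entry read as 1.0).
a3 = [1.0, 6.0, 5.0, 4.0, 7.0, 3.0, 8.0, 7.0, 5.0]


def candidate_thresholds(values):
    """Midpoints between adjacent distinct sorted values (assumed convention)."""
    distinct = sorted(set(values))
    return [(lo + hi) / 2 for lo, hi in zip(distinct, distinct[1:])]


def split_by_threshold(values, items, threshold):
    """Partition `items` by whether the paired value is <= threshold."""
    left = [item for v, item in zip(values, items) if v <= threshold]
    right = [item for v, item in zip(values, items) if v > threshold]
    return left, right


thresholds = candidate_thresholds(a3)

# Instance numbers are used as stand-ins; with the real class labels in
# place of `instances`, each (left, right) pair can be scored for gain.
instances = list(range(1, 10))
splits = {t: split_by_threshold(a3, instances, t) for t in thresholds}
```

Each threshold yields two groups. Substituting the class labels for the instance numbers gives the child groups whose weighted entropy is subtracted from the parent entropy to obtain the information gain of that split.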
