Question: Exercise 4 ( 8 points ) Training a large language model depends on various architectural choices. However, recent papers such as Kaplan et al .

Exercise points

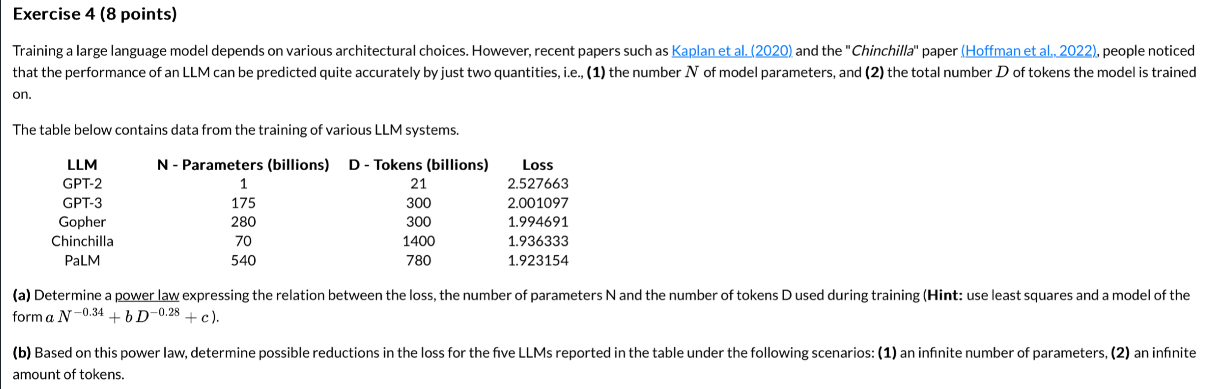

Training a large language model depends on various architectural choices. However, recent papers such as Kaplan et al and the "Chinchilla" paper Hoffman et al people noticed that the performance of an LLM can be predicted quite accurately by just two quantities, ie the number of model parameters, and the total number of tokens the model is trained on

The table below contains data from the training of various LLM systems.

tableLLM Parameters billions Tokens billionsLossGPTGPTGopherChinchillaPaLM

a Determine a power law expressing the relation between the loss, the number of parameters N and the number of tokens D used during training Hint: use least squares and a model of the form :

b Based on this power law, determine possible reductions in the loss for the five LLMs reported in the table under the following scenarios: an infinite number of parameters, an infinite amount of tokens.

Step by Step Solution

There are 3 Steps involved in it

1 Expert Approved Answer

Step: 1 Unlock

Question Has Been Solved by an Expert!

Get step-by-step solutions from verified subject matter experts

Step: 2 Unlock

Step: 3 Unlock