Question:

10. HMM, simple substitution distance (SSD) [126], and opcode graph similarity (OGS) [118] scores for each of 40 malware samples and 40 benign samples can be found in the file malwareBenignScores.txt at the textbook website. For each part of this problem, train an SVM using the first 20 malware samples and the first 20 benign samples in this dataset. Then test each SVM using the next 20 samples in the malware dataset and the next 20 samples in the benign dataset. In each case, determine the accuracy.
a) Let C = 1 and, for the kernel function, select the polynomial learning machine in equation (5.29) with p = 2.
b) Let C = 3 and, for the kernel function, select the polynomial learning machine in equation (5.29) with p = 2.
c) Let C = 1 and, for the kernel function, select the polynomial learning machine in equation (5.29) with p = 4.
d) Let C = 3 and, for the kernel function, select the polynomial learning machine in equation (5.29) with p = 4.

11. The Gaussian radial basis function (RBF) in equation (5.30) includes a shape parameter σ. For this problem, we want to consider the interaction between the RBF shape parameter σ and the SVM regularization parameter C. Using the same data as in Problem 10 and the RBF kernel in equation (5.30), perform a grid search over each combination of C ∈ {1, 2, 3, 4} and σ ∈ {2, 3, 4, 5}. That is, for each of these 16 test cases, train an SVM using the first 20 malware samples and the first 20 benign samples, then test the resulting SVM using the next 20 samples in each dataset. Determine the accuracy in each case and clearly indicate the best result obtained over this grid search.

12. For the sake of efficiency, we generally want to use fewer features when scoring, but we also do not want to significantly reduce the effectiveness of our classification technique. A method known as recursive feature elimination (RFE) [58] is one approach for reducing the number of features while minimizing any reduction in the accuracy of the resulting classifier.²¹
a) Train a linear SVM using the first 20 malware and the first 20 benign samples, based on the HMM, SSD, and OGS scores given in the dataset in Problem 10. Determine the accuracy based on the next 20 samples in each set, and list the SVM weights corresponding to each feature, namely, the HMM, SSD, and OGS scores.
b) Perform RFE based on the data in Problem 10, and at each step, list the SVM weights and give the accuracy for the reduced model. Hint: Eliminate the feature with the lowest weight from part a), and recompute a linear SVM using the two remaining scores. Then, eliminate the feature with the lowest weight from this SVM and train a linear SVM using the one remaining feature.

13. Suppose that we have determined the parameters λ_i that appear in the SVM training problem. How do we then determine the parameters w and b that appear in (5.33)?

14. At each iteration of the SSMO algorithm, as discussed in Section 5.5, we select an ordered pair of Lagrange multipliers (λ_i, λ_j) and update this pair, while keeping all other λ_k fixed. We use these updated values to modify b, thus updating the scoring function f(X). In this process, the intermediate values b_1 in equation (5.38) and b_2 in equation (5.39) are computed. Then b is updated according to (5.40). Note that in these equations, λ_i and λ_j are the updated values, while λ'_i and λ'_j are the old values for these Lagrange multipliers, i.e., the values of λ_i and λ_j before they were updated in the current step of the algorithm.
a) In the text it is claimed that whenever the updated pair (λ_i, λ_j) satisfies both 0 …

²⁰ In Section 7.6 of Chapter 7, we discuss various connections between SVM and PCA.
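The train-and-test procedure in Problems 10 to 12, and the quantities asked about in Problems 13 and 14, can be illustrated with a small pure-Python sketch. It assumes that (5.29) is the polynomial kernel K(x, y) = (x·y + 1)^p, that (5.30) is K(x, y) = exp(−‖x − y‖²/(2σ²)), that labels are +1 for malware and −1 for benign, and that the Problem 11 grid is C ∈ {1, 2, 3, 4} by σ ∈ {2, 3, 4, 5}. The trainer follows the simplified SMO outline commonly taught in course notes (for example, Stanford CS229), with f(X) = Σ λ_k z_k K(X_k, X) + b and error E_i = f(X_i) − z_i; the textbook's SSMO and its equations (5.38) to (5.40) may use different sign conventions. All function names (train_ssmo, rbf_grid, rfe, and so on) are hypothetical. For Problem 13 with a linear kernel, w = Σ λ_i z_i X_i, and b can be recovered from any sample with 0 < λ_j < C as b = z_j − w·X_j; the sketch simply keeps the b that SMO maintains. "Lowest weight" in RFE is interpreted here as smallest |w|.

```python
import math
import random
from functools import partial


def linear_kernel(x, y):
    return sum(a * b for a, b in zip(x, y))


def poly_kernel(x, y, p=2):
    # Assumed form of (5.29), the polynomial learning machine: (x . y + 1)^p
    return (linear_kernel(x, y) + 1.0) ** p


def rbf_kernel(x, y, sigma=2.0):
    # Assumed form of (5.30), the Gaussian RBF: exp(-||x - y||^2 / (2 sigma^2))
    d2 = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-d2 / (2.0 * sigma ** 2))


def train_ssmo(X, z, C, kernel, tol=1e-3, max_passes=10, max_iter=10000, seed=0):
    """Simplified SMO for labels z[i] in {+1, -1}; returns (lambdas, b)."""
    rng = random.Random(seed)
    n = len(X)
    K = [[kernel(X[i], X[j]) for j in range(n)] for i in range(n)]
    lam, b = [0.0] * n, 0.0

    def f(i):  # current score f(X_i) = sum_k lam_k z_k K(X_k, X_i) + b
        return sum(lam[k] * z[k] * K[k][i] for k in range(n)) + b

    passes = 0
    for _ in range(max_iter):  # hard cap so the loop always terminates
        if passes >= max_passes:
            break
        changed = 0
        for i in range(n):
            Ei = f(i) - z[i]
            if not ((z[i] * Ei < -tol and lam[i] < C) or (z[i] * Ei > tol and lam[i] > 0)):
                continue  # lam[i] already satisfies the KKT conditions within tol
            j = rng.randrange(n - 1)
            if j >= i:
                j += 1  # random partner j != i
            Ej = f(j) - z[j]
            li_old, lj_old = lam[i], lam[j]
            if z[i] != z[j]:
                L, H = max(0.0, lj_old - li_old), min(C, C + lj_old - li_old)
            else:
                L, H = max(0.0, li_old + lj_old - C), min(C, li_old + lj_old)
            eta = 2 * K[i][j] - K[i][i] - K[j][j]
            if L == H or eta >= 0:
                continue
            lj_new = min(H, max(L, lj_old - z[j] * (Ei - Ej) / eta))
            if abs(lj_new - lj_old) < 1e-5:
                continue
            lam[j], lam[i] = lj_new, li_old + z[i] * z[j] * (lj_old - lj_new)
            di, dj = lam[i] - li_old, lam[j] - lj_old
            # Two candidate thresholds (the roles of b_1 and b_2 in Problem 14)
            b1 = b - Ei - z[i] * di * K[i][i] - z[j] * dj * K[i][j]
            b2 = b - Ej - z[i] * di * K[i][j] - z[j] * dj * K[j][j]
            b = b1 if 0 < lam[i] < C else b2 if 0 < lam[j] < C else (b1 + b2) / 2
            changed += 1
        passes = passes + 1 if changed == 0 else 0
    return lam, b


def accuracy(X, z, lam, b, kernel, X_test, z_test):
    def score(x):
        return sum(lm * zi * kernel(xi, x) for lm, zi, xi in zip(lam, z, X)) + b
    hits = sum((1 if score(x) >= 0 else -1) == t for x, t in zip(X_test, z_test))
    return hits / len(z_test)


def linear_weights(X, z, lam):
    # Problem 13, linear kernel only: w = sum_i lam_i z_i X_i (one weight per feature)
    return [sum(lm * zi * xi[d] for lm, zi, xi in zip(lam, z, X)) for d in range(len(X[0]))]


def rbf_grid(Xtr, ztr, Xte, zte, Cs=(1, 2, 3, 4), sigmas=(2, 3, 4, 5)):
    acc = {}
    for C in Cs:
        for s in sigmas:
            k = partial(rbf_kernel, sigma=s)
            lam, b = train_ssmo(Xtr, ztr, C, k)
            acc[(C, s)] = accuracy(Xtr, ztr, lam, b, k, Xte, zte)
    return acc, max(acc, key=acc.get)  # all accuracies, best (C, sigma)


def rfe(Xtr, ztr, Xte, zte, names, C=1.0):
    cols, steps = list(range(len(names))), []
    while cols:
        def keep(rows):
            return [[r[c] for c in cols] for r in rows]
        lam, b = train_ssmo(keep(Xtr), ztr, C, linear_kernel)
        w = linear_weights(keep(Xtr), ztr, lam)
        acc = accuracy(keep(Xtr), ztr, lam, b, linear_kernel, keep(Xte), zte)
        steps.append(({names[c]: wc for c, wc in zip(cols, w)}, acc))
        cols.pop(min(range(len(cols)), key=lambda m: abs(w[m])))  # drop smallest |w|
    return steps
```

To apply this to malwareBenignScores.txt, read the HMM, SSD, and OGS scores of each sample into a list of three floats, use samples 0 to 19 of each class for training and 20 to 39 for testing, and call, for example, train_ssmo(X_train, z_train, 1, poly_kernel) for Problem 10(a) or train_ssmo(X_train, z_train, 1, partial(poly_kernel, p=4)) for 10(c). The file layout is not described in the problem, so parsing is left out. Because the partner j is chosen at random, results can shift slightly with the seed.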

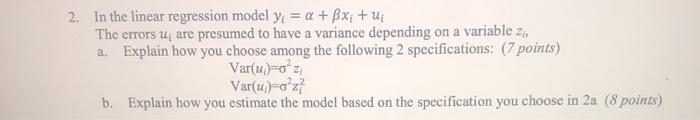

2. In the linear regression model y_i = α + βx_i + u_i, the errors u_i are presumed to have a variance depending on a variable z_i.
a. Explain how you choose between the following two specifications (7 points): Var(u_i) = σ²z_i or Var(u_i) = σ²z_i².
b. Explain how you estimate the model based on the specification you choose in 2a (8 points).
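For part b, one standard approach is weighted least squares: under Var(u_i) = σ²z_i, dividing the equation by √z_i gives homoskedastic errors, which is equivalent to minimizing Σ (y_i − α − βx_i)²/z_i; under Var(u_i) = σ²z_i², divide by z_i instead (weights 1/z_i²). For part a, one common diagnostic (not necessarily the one the course expects) is a Park-type regression of log ê_i² on log z_i using the OLS residuals ê_i: a slope near 1 points to the first specification and a slope near 2 to the second. The pure-Python sketch below illustrates both; the function names are hypothetical, and it assumes z_i > 0 and no zero residuals.

```python
import math


def wls_line(x, y, w):
    """Weighted least squares for y = alpha + beta*x, minimising sum w_i (y_i - alpha - beta x_i)^2."""
    sw = sum(w)
    xb = sum(wi * xi for wi, xi in zip(w, x)) / sw  # weighted mean of x
    yb = sum(wi * yi for wi, yi in zip(w, y)) / sw  # weighted mean of y
    sxy = sum(wi * (xi - xb) * (yi - yb) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - xb) ** 2 for wi, xi in zip(w, x))
    beta = sxy / sxx
    return yb - beta * xb, beta


def park_slope(x, y, z):
    """OLS of log(e_i^2) on log(z_i): about 1 favours sigma^2 z_i, about 2 favours sigma^2 z_i^2."""
    a, b = wls_line(x, y, [1.0] * len(x))  # equal weights = plain OLS
    e2 = [(yi - a - b * xi) ** 2 for xi, yi in zip(x, y)]
    _, slope = wls_line([math.log(zi) for zi in z], [math.log(v) for v in e2], [1.0] * len(z))
    return slope


def wls_fit(x, y, z, power):
    # Weights proportional to 1/Var(u_i): power=1 -> 1/z_i, power=2 -> 1/z_i^2
    return wls_line(x, y, [1.0 / zi ** power for zi in z])
```

In small samples the estimated slope is noisy, so it is best read alongside a plot of the squared residuals against z_i or a formal heteroskedasticity test such as Breusch-Pagan.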
