Question: How do dropout layers in neural networks help prevent overfitting?

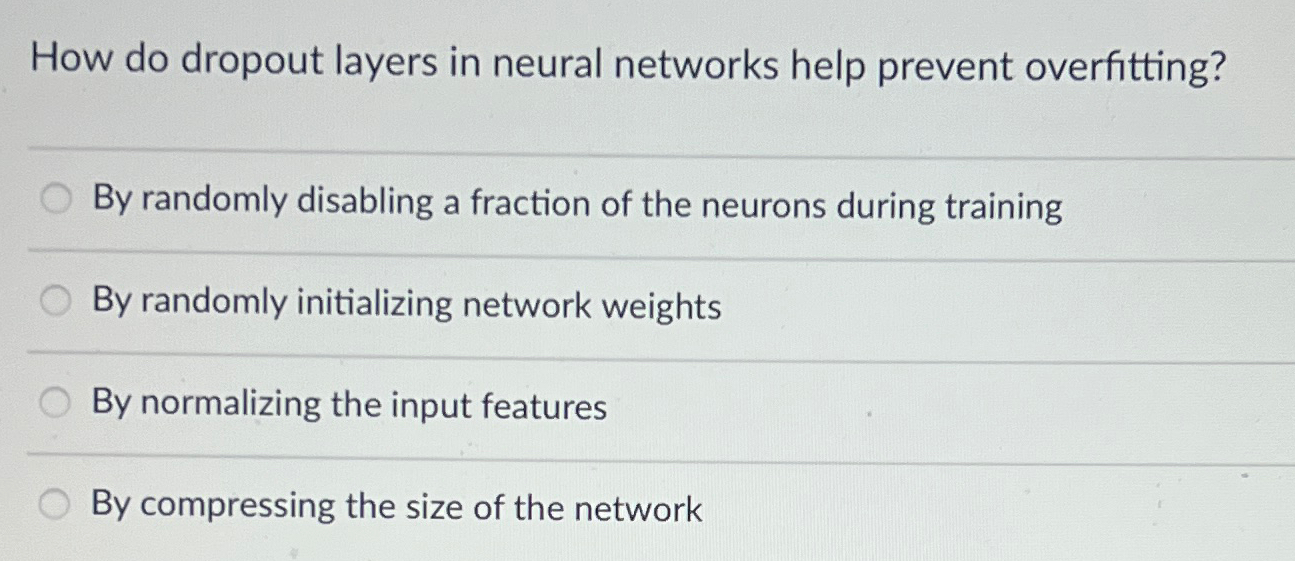


By randomly disabling a fraction of the neurons during training

By randomly initializing network weights

By normalizing the input features

By compressing the size of the network
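The first option describes the mechanism: during training, dropout zeroes a random fraction of activations on each forward pass, so the network cannot rely on any single neuron and has to learn redundant features. The sketch below shows the common "inverted dropout" variant in plain Python. The function name `dropout` and its parameters are hypothetical helpers for illustration, not a library API, and real frameworks apply the same idea to tensors rather than lists.

```python
import random


def dropout(activations, rate, training, rng=None):
    """Illustrative inverted dropout over a list of floats (assumed helper)."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    # At inference time (or with rate 0) the layer passes values through unchanged.
    if not training or rate == 0.0:
        return list(activations)
    rng = rng or random.Random()
    keep_prob = 1.0 - rate
    # Each unit is kept with probability keep_prob and otherwise zeroed.
    # Kept units are scaled by 1 / keep_prob so the expected value of each
    # activation matches what the next layer sees at inference time.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


if __name__ == "__main__":
    hidden = [0.5, 1.2, -0.3, 0.8, 2.0, -1.1]
    print("train:", dropout(hidden, rate=0.5, training=True))
    print("eval: ", dropout(hidden, rate=0.5, training=False))
```

Because a different random subset of units is dropped on every training step, each step effectively trains a different thinned sub-network, which is why dropout acts as a regularizer. The other options describe weight initialization, input normalization, and model compression, which are separate techniques.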
