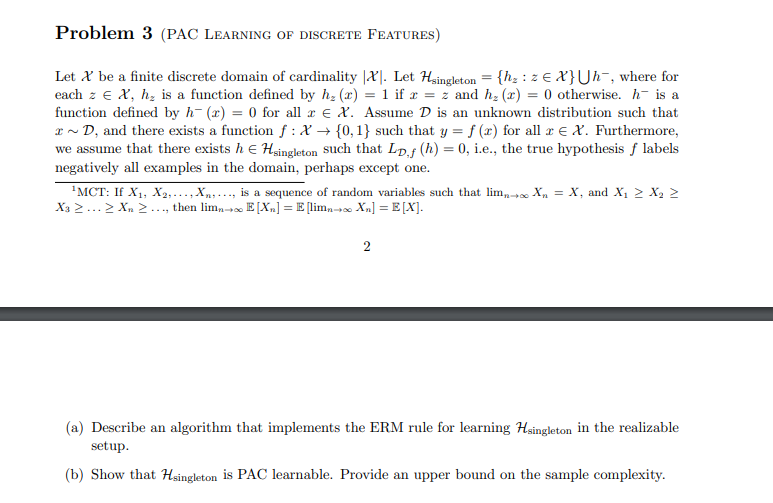

Question: Problem 3 (PAC LEARNING OF DISCRETE FEATURES)

Let $\mathcal{X}$ be a finite discrete domain of cardinality $|\mathcal{X}|$. Let $\mathcal{H}_{\text{singleton}} = \{h_z : z \in \mathcal{X}\} \cup \{h^-\}$, where for each $z \in \mathcal{X}$, $h_z$ is the function defined by $h_z(x) = 1$ if $x = z$ and $h_z(x) = 0$ otherwise, and $h^-$ is the function defined by $h^-(x) = 0$ for all $x \in \mathcal{X}$.

Assume $\mathcal{D}$ is an unknown distribution such that $x \sim \mathcal{D}$, and there exists a function $f : \mathcal{X} \to \{0,1\}$ such that $y = f(x)$ for all $x \in \mathcal{X}$. Furthermore, we assume that there exists $h \in \mathcal{H}_{\text{singleton}}$ such that $L_{\mathcal{D},f}(h) = 0$, i.e., the true hypothesis $f$ labels negatively all examples in the domain, perhaps except one.

MCT: If $X_1, X_2, \ldots, X_n, \ldots$ is a sequence of random variables such that $\lim_{n \to \infty} X_n = X$, and $X_1 \ge X_2 \ge X_3 \ge \cdots \ge X_n \ge \cdots$, then $\lim_{n \to \infty} E[X_n] = E[\lim_{n \to \infty} X_n] = E[X]$.

(a) Describe an algorithm that implements the ERM rule for learning $\mathcal{H}_{\text{singleton}}$ in the realizable setup.

(b) Show that $\mathcal{H}_{\text{singleton}}$ is PAC learnable. Provide an upper bound on the sample complexity.
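One possible way to approach part (a) is sketched below; it is an illustration under stated assumptions, not the official solution. The sample is assumed to be a list of `(x, y)` pairs with labels in `{0, 1}`, and the names `erm_singleton`, `predict`, and `sample_size_bound` are hypothetical. The rule returns $h_z$ when some example $(z, 1)$ appears in the sample and $h^-$ otherwise, which has zero empirical error in the realizable setup. With this choice, the output can have error above $\epsilon$ only if the single positive point has probability mass above $\epsilon$ and never appears among $m$ samples, which happens with probability at most $(1-\epsilon)^m \le e^{-\epsilon m}$. That suggests the bound $m \ge \ln(1/\delta)/\epsilon$ used in the helper.

```python
import math
from typing import Hashable, Iterable, Optional, Tuple


def erm_singleton(sample: Iterable[Tuple[Hashable, int]]) -> Optional[Hashable]:
    """Sketch of an ERM rule for H_singleton (realizable case assumed).

    Returns the point z if the rule selects h_z, or None to stand for
    the all-negative hypothesis h^-.
    """
    for x, y in sample:
        if y == 1:
            # Realizability means at most one point is labeled 1,
            # so the first positive example identifies z.
            return x
    # No positive example seen: h^- has zero empirical error.
    return None


def predict(z: Optional[Hashable], x: Hashable) -> int:
    """Evaluate the selected hypothesis (h_z, or h^- when z is None) at x."""
    return 1 if z is not None and x == z else 0


def sample_size_bound(epsilon: float, delta: float) -> int:
    """Smallest integer m with m >= ln(1/delta) / epsilon (assumed bound)."""
    return math.ceil(math.log(1.0 / delta) / epsilon)


if __name__ == "__main__":
    training = [("a", 0), ("b", 1), ("c", 0)]
    z = erm_singleton(training)
    print(z, predict(z, "b"), predict(z, "a"))
    print(sample_size_bound(0.1, 0.05))
```

Note that the bound in this sketch does not depend on $|\mathcal{X}|$, because the rule defaults to $h^-$ whenever no positive example is observed.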
