Question: Rewrite Listing 12.18, WebCrawler.java, to improve the performance by using appropriate new data structures for listOfPendingURLs and listOfTraversedURLs.

Suppose you have Java source files under the directories `chapter1`, `chapter2`, . . . , `chapter34`. Write a program to insert the statement `package chapteri;` as the first line for each Java source file under the directory `chapteri`. Suppose `chapter1`, `chapter2`, . . . , `chapter34` are under the root directory `srcRootDirectory`. The root directory and `chapteri` directory may contain other folders and files. Use the following command to run the program:

java Exercise12_18 srcRootDirectory
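A minimal sketch of `Exercise12_18`, under stated assumptions: only `.java` files directly inside each `chapteri` folder are changed (a file in a nested folder would normally belong to a different package, so it is skipped), sources are read as UTF-8, and a file whose first line already is the package statement is left alone so the program can be rerun safely. The helper name `addPackageStatement` is hypothetical, not part of the exercise.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

class Exercise12_18 {
  public static void main(String[] args) throws IOException {
    if (args.length != 1) {
      System.out.println("Usage: java Exercise12_18 srcRootDirectory");
      System.exit(1);
    }
    Path root = Paths.get(args[0]);
    for (int i = 1; i <= 34; i++) {
      Path chapterDir = root.resolve("chapter" + i);
      if (Files.isDirectory(chapterDir)) { // skip chapters that do not exist
        addPackageStatement(chapterDir, "chapter" + i);
      }
    }
  }

  // Hypothetical helper: prepends "package <packageName>;" to every .java file
  // directly inside dir. Subfolders and non-Java files are left untouched.
  static void addPackageStatement(Path dir, String packageName) throws IOException {
    String statement = "package " + packageName + ";";
    try (DirectoryStream<Path> files = Files.newDirectoryStream(dir, "*.java")) {
      for (Path file : files) {
        if (!Files.isRegularFile(file)) continue; // e.g. a folder named X.java
        // Assumes UTF-8 sources; copied into an ArrayList so the list is modifiable
        List<String> lines = new ArrayList<>(Files.readAllLines(file, StandardCharsets.UTF_8));
        // Already has the statement (e.g. on a rerun): leave the file unchanged
        if (!lines.isEmpty() && lines.get(0).trim().equals(statement)) continue;
        lines.add(0, statement);
        // Rewrites the file; each line is terminated with the platform line separator
        Files.write(file, lines, StandardCharsets.UTF_8);
      }
    }
  }
}
```

Collecting one chapter folder at a time keeps the mapping from folder name to package name explicit; switching to `Files.walk` would also reach nested folders, which would then need their own package names.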

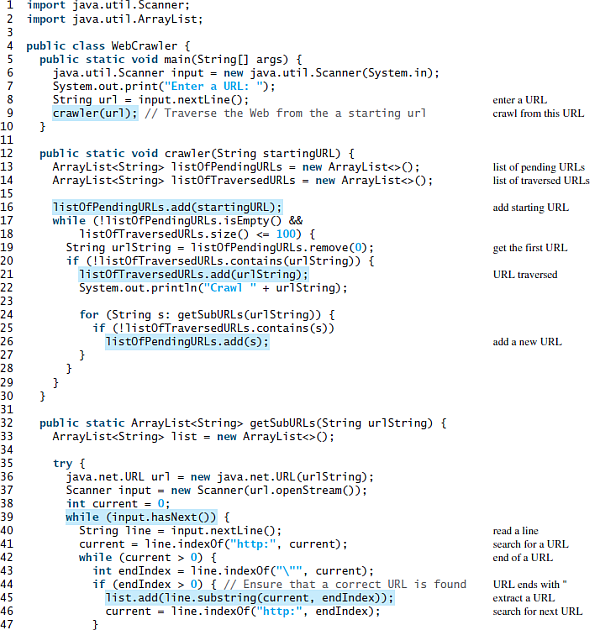

Listing 12.18 WebCrawler.java

![Listing 12.18 WebCrawler.java](https://dsd5zvtm8ll6.cloudfront.net/si.experts.images/questions/2022/11/636a73b8b1ba0_824636a73b8a19ad.jpg)

```java
import java.util.Scanner;
import java.util.ArrayList;

public class WebCrawler {
  public static void main(String[] args) {
    Scanner input = new Scanner(System.in);
    System.out.print("Enter a URL: ");
    String url = input.nextLine(); // enter a URL
    crawler(url); // Traverse the Web from the starting URL
  }

  public static void crawler(String startingURL) {
    ArrayList<String> listOfPendingURLs = new ArrayList<>();   // list of pending URLs
    ArrayList<String> listOfTraversedURLs = new ArrayList<>(); // list of traversed URLs

    listOfPendingURLs.add(startingURL);
    while (!listOfPendingURLs.isEmpty() &&
        listOfTraversedURLs.size() <= 100) {
      String urlString = listOfPendingURLs.remove(0);
      if (!listOfTraversedURLs.contains(urlString)) {
        listOfTraversedURLs.add(urlString);
        System.out.println("Crawl " + urlString);

        for (String s : getSubURLs(urlString)) {
          if (!listOfTraversedURLs.contains(s))
            listOfPendingURLs.add(s);
        }
      }
    }
  }

  public static ArrayList<String> getSubURLs(String urlString) {
    ArrayList<String> list = new ArrayList<>();

    try (Scanner input = new Scanner(new java.net.URL(urlString).openStream())) {
      while (input.hasNextLine()) {
        String line = input.nextLine();      // read a line
        int current = line.indexOf("http:"); // search for a URL (>= 0 also finds one at index 0)
        while (current >= 0) {
          int endIndex = line.indexOf("\"", current); // end of a URL
          if (endIndex > 0) { // Ensure that a correct URL is found (URL ends with ")
            list.add(line.substring(current, endIndex)); // extract a URL
            current = line.indexOf("http:", endIndex);   // search for next URL
          }
          else
            current = -1;
        }
      }
    }
    catch (Exception ex) {
      System.out.println("Error: " + ex.getMessage());
    }

    return list; // return URLs
  }
}
```

Step by Step Solution

Program Plan: Create a class WebCrawlerRev, which is the revised version of the WebCrawler class in Listing 12.18. This class uses data structures like LinkedList and HashSet to improve the performance of t...
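A minimal sketch of a revision along the lines of this plan (not the full hidden answer): `listOfPendingURLs` becomes a `Queue` backed by `LinkedList`, so taking the next URL is O(1) instead of the O(n) element shift caused by `ArrayList.remove(0)`, and `listOfTraversedURLs` becomes a `HashSet`, so the "already traversed?" check is O(1) on average instead of a linear scan. The `getLinks` and `limit` parameters are hypothetical additions so the crawl can be exercised offline; `getSubURLs` keeps the listing's "http: up to the next quote" extraction rule.

```java
import java.net.URI;
import java.util.ArrayList;
import java.util.HashSet;
import java.util.LinkedList;
import java.util.List;
import java.util.Queue;
import java.util.Scanner;
import java.util.Set;
import java.util.function.Function;

class WebCrawlerRev {
  public static void main(String[] args) {
    Scanner input = new Scanner(System.in);
    System.out.print("Enter a URL: ");
    String url = input.nextLine();
    crawler(url, WebCrawlerRev::getSubURLs, 100);
  }

  // Breadth-first crawl; returns the URLs in the order they were traversed.
  // getLinks and limit are hypothetical parameters (not in Listing 12.18).
  static List<String> crawler(String startingURL,
      Function<String, List<String>> getLinks, int limit) {
    Queue<String> listOfPendingURLs = new LinkedList<>(); // O(1) offer at tail, poll at head
    Set<String> listOfTraversedURLs = new HashSet<>();    // O(1) average add/contains
    List<String> order = new ArrayList<>();               // traversal order, for the caller

    listOfPendingURLs.offer(startingURL);
    // Same stopping rule as the listing: keep going while size() <= limit
    while (!listOfPendingURLs.isEmpty() && listOfTraversedURLs.size() <= limit) {
      String urlString = listOfPendingURLs.poll();
      // Set.add returns false when urlString was already traversed
      if (listOfTraversedURLs.add(urlString)) {
        order.add(urlString);
        System.out.println("Crawl " + urlString);
        for (String s : getLinks.apply(urlString)) {
          if (!listOfTraversedURLs.contains(s))
            listOfPendingURLs.offer(s);
        }
      }
    }
    return order;
  }

  // Same extraction rule as getSubURLs in Listing 12.18: "http:" up to the next quote.
  static List<String> getSubURLs(String urlString) {
    List<String> list = new ArrayList<>();
    try (Scanner input = new Scanner(URI.create(urlString).toURL().openStream())) {
      while (input.hasNextLine()) {
        String line = input.nextLine();
        int current = line.indexOf("http:");
        while (current >= 0) {
          int endIndex = line.indexOf("\"", current);
          if (endIndex < 0) break; // no closing quote on this line
          list.add(line.substring(current, endIndex));
          current = line.indexOf("http:", endIndex);
        }
      }
    } catch (Exception ex) {
      System.out.println("Error: " + ex.getMessage());
    }
    return list;
  }
}
```

Because the loop checks `size() <= limit` before each step (as the listing does), a limit of 100 can still traverse 101 URLs. An `ArrayDeque` would serve equally well as the queue; `LinkedList` is used here only to match the plan.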