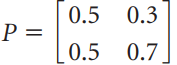

Question: Let be the transition matrix for a Markov chain with two states. Let be the initial state vector for the population. Compute x 1 and

be the transition matrix for a Markov chain with two states.

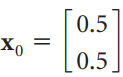

Let

be the initial state vector for the population.

Compute x1 and x2.

0.5 0.3 P = 0.5 0.7. 0.5 Xo 0.5

Step by Step Solution



$$\mathbf{x}_1 = P\mathbf{x}_0 = \begin{bmatrix} 0.5 & 0.3 \\ 0.5 & 0.7 \end{bmatrix}\begin{bmatrix} 0.5 \\ 0.5 \end{bmatrix}$$ ...
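The posted solution is cut off, so the following is only a minimal sketch of how the two state vectors could be checked numerically. It assumes the column-stochastic convention (each column of P sums to 1, so the update is x_{k+1} = P x_k), which matches the numbers in the question. The helper name `step` is hypothetical and not part of the original answer.

```python
# Transition matrix and initial state vector from the question.
# Assumption: columns of P sum to 1, so the state is a column vector
# and the chain advances as x_{k+1} = P x_k.
P = [[0.5, 0.3],
     [0.5, 0.7]]
x0 = [0.5, 0.5]


def step(P, x):
    """Return the matrix-vector product P x (hypothetical helper)."""
    return [sum(p_ij * x_j for p_ij, x_j in zip(row, x)) for row in P]


x1 = step(P, x0)  # state after one transition
x2 = step(P, x1)  # state after two transitions

# Round to hide floating-point noise in the printed values.
print([round(v, 4) for v in x1])  # [0.4, 0.6]
print([round(v, 4) for v in x2])  # [0.38, 0.62]
```

By hand, the same arithmetic is 0.5(0.5) + 0.3(0.5) = 0.4 and 0.5(0.5) + 0.7(0.5) = 0.6 for the first step, then 0.5(0.4) + 0.3(0.6) = 0.38 and 0.5(0.4) + 0.7(0.6) = 0.62 for the second. Each resulting vector sums to 1, as a state vector should.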
