
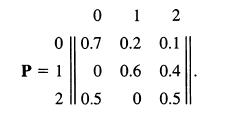

1.2. A Markov chain $X_0, X_1, X_2, \ldots$ has the transition probability matrix

$$
P = \begin{array}{c|ccc}
  & 0   & 1   & 2   \\ \hline
0 & 0.7 & 0.2 & 0.1 \\
1 & 0   & 0.6 & 0.4 \\
2 & 0.5 & 0   & 0.5
\end{array}
$$

Determine the conditional probabilities $\Pr\{X_2 = 1, X_3 = 1 \mid X_1 = 0\}$ and $\Pr\{X_1 = 1, X_2 = 1 \mid X_0 = 0\}$.

Step by Step Solution
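
The following is a minimal sketch of one way to work the problem, not the page's own solution. It assumes the two requested quantities are $\Pr\{X_2 = 1, X_3 = 1 \mid X_1 = 0\}$ and $\Pr\{X_1 = 1, X_2 = 1 \mid X_0 = 0\}$, and that the chain is time-homogeneous with rows of $P$ indexed by the current state. Under those assumptions the Markov property gives $\Pr\{X_{n+1} = j, X_{n+2} = k \mid X_n = i\} = P_{ij} P_{jk}$, so both quantities reduce to $P_{01} P_{11}$. The helper name `two_step_path_probability` is hypothetical and introduced only for illustration.

```python
# Transition matrix from the problem statement.
# Rows are the current state, columns are the next state (states 0, 1, 2).
P = [
    [0.7, 0.2, 0.1],
    [0.0, 0.6, 0.4],
    [0.5, 0.0, 0.5],
]


def two_step_path_probability(P, i, j, k):
    """Return Pr{X_{n+1} = j, X_{n+2} = k | X_n = i}.

    Hypothetical helper: assumes a time-homogeneous Markov chain, so the
    result does not depend on n and equals P[i][j] * P[j][k].
    """
    return P[i][j] * P[j][k]


# Both requested probabilities start in state 0 and then visit state 1 twice,
# so under the stated assumptions they share the same value P[0][1] * P[1][1].
p = two_step_path_probability(P, 0, 1, 1)
print(round(p, 2))  # 0.12
```

Because the starting time does not matter for a time-homogeneous chain, the same call answers both parts: $0.2 \times 0.6 = 0.12$.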
