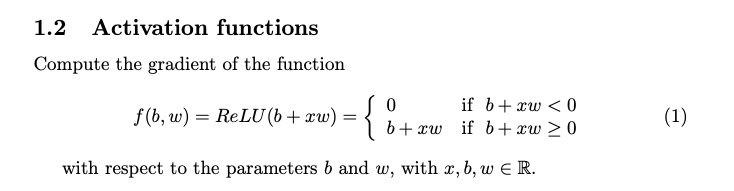

Question: 1.2 Activation functions

Compute the gradient of the function $f(b, w) = \mathrm{ReLU}(b + xw)$ with respect to the parameters $b$ and $w$.

Step by Step Solution
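
The page does not show the worked steps, so the following is a sketch of the standard chain-rule derivation rather than the expert's answer. It assumes $x$ is a fixed scalar input and introduces the intermediate symbol $z$ (not in the original question) for the pre-activation. ReLU is not differentiable at $z = 0$; the derivation holds for $z \neq 0$, and a common convention sets the derivative to 0 at that point.

$$
\begin{aligned}
z &= b + xw, \qquad f(b, w) = \mathrm{ReLU}(z) = \max(0, z), \\
\frac{\partial f}{\partial z} &= \mathbb{1}[z > 0], \qquad \frac{\partial z}{\partial b} = 1, \qquad \frac{\partial z}{\partial w} = x, \\
\frac{\partial f}{\partial b} &= \mathbb{1}[b + xw > 0], \qquad \frac{\partial f}{\partial w} = x \, \mathbb{1}[b + xw > 0], \\
\nabla f(b, w) &= \mathbb{1}[b + xw > 0] \begin{pmatrix} 1 \\ x \end{pmatrix}.
\end{aligned}
$$

If $x$ and $w$ are instead vectors combined as $x^\top w$, the same argument would give $\partial f / \partial w = \mathbb{1}[b + x^\top w > 0] \, x$.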

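The result $\partial f/\partial b = \mathbb{1}[b + xw > 0]$ and $\partial f/\partial w = x\,\mathbb{1}[b + xw > 0]$ can be checked numerically. The snippet below is a minimal sketch that assumes scalar `b`, `w`, and `x` and takes the derivative of ReLU at 0 to be 0; the function names `grad_f` and `numeric_grad` are illustrative and not part of the question.

```python
def relu(z):
    """ReLU(z) = max(0, z)."""
    return max(0.0, z)


def f(b, w, x):
    """The function from the question: f(b, w) = ReLU(b + x * w)."""
    return relu(b + x * w)


def grad_f(b, w, x):
    """Analytic gradient (df/db, df/dw).

    Assumption: the derivative of ReLU at exactly 0 is taken to be 0.
    """
    active = 1.0 if (b + x * w) > 0 else 0.0
    return active, active * x


def numeric_grad(b, w, x, eps=1e-6):
    """Central finite-difference approximation of (df/db, df/dw)."""
    db = (f(b + eps, w, x) - f(b - eps, w, x)) / (2 * eps)
    dw = (f(b, w + eps, x) - f(b, w - eps, x)) / (2 * eps)
    return db, dw


if __name__ == "__main__":
    # One point in the active region (b + x*w > 0) and one in the inactive region.
    for b, w, x in [(0.5, 2.0, 3.0), (-10.0, 2.0, 3.0)]:
        print("analytic:", grad_f(b, w, x), "numeric:", numeric_grad(b, w, x))
```

Away from the kink at $b + xw = 0$ the two gradients should agree up to finite-difference error; very close to the kink the numerical estimate is unreliable because the function is not differentiable there.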