Question:

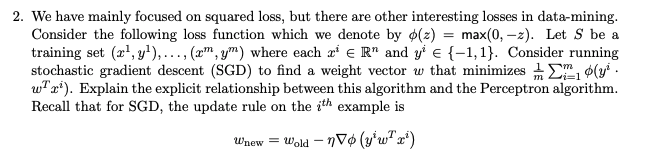

2. We have mainly focused on squared loss, but there are other interesting losses in data-mining. Consider the following loss function, which we denote by $\phi(z) = \max(0, -z)$. Let $S$ be a training set $(x^1, y^1), \ldots, (x^m, y^m)$ where each $x^i \in \mathbb{R}^n$ and $y^i \in \{-1, 1\}$. Consider running stochastic gradient descent (SGD) to find a weight vector $w$ that minimizes $\frac{1}{m} \sum_{i=1}^{m} \phi(y^i \cdot w^T x^i)$. Explain the explicit relationship between this algorithm and the Perceptron algorithm. Recall that for SGD, the update rule on the $i$th example is $w_{new} = w_{old} - \eta \nabla_w \phi(y^i w^T x^i)$.
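One way to see the relationship is to write out a (sub)gradient of the loss. For the loss max(0, -z) evaluated at z = y (w . x), the derivative with respect to w is -y x when z < 0 and 0 when z > 0. At z = 0 the loss is not differentiable, and any value between -y x and 0 is a valid subgradient. If we assume the tie is broken toward -y x, the SGD step becomes "add eta * y * x to w whenever y (w . x) <= 0, otherwise leave w unchanged", which is the Perceptron update when eta = 1. The sketch below checks this numerically. The function names, the synthetic data, and the tie-breaking rule at z = 0 are illustrative assumptions, not part of the original problem.

```python
import numpy as np

def sgd_step(w, x, y, eta=1.0):
    """One SGD step on phi(y * w.x), where phi(z) = max(0, -z)."""
    z = y * np.dot(w, x)
    # Subgradient of phi at z: -1 if z <= 0, else 0.
    # Picking -1 at z == 0 is an assumed tie-break (any value in [-1, 0] is valid).
    dphi = -1.0 if z <= 0 else 0.0
    grad = dphi * y * x  # chain rule: d/dw phi(y * w.x)
    return w - eta * grad

def perceptron_step(w, x, y):
    """Classic Perceptron step: update only on a mistake (y * w.x <= 0)."""
    if y * np.dot(w, x) <= 0:
        return w + y * x
    return w

def run(step, X, Y, order):
    """Apply `step` to the examples in the given order, starting from w = 0."""
    w = np.zeros(X.shape[1])
    for i in order:
        w = step(w, X[i], Y[i])
    return w

# Hypothetical linearly separable toy data.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 3))
Y = np.where(X @ np.array([1.0, -2.0, 0.5]) >= 0, 1.0, -1.0)
order = rng.integers(0, len(X), size=200)  # same random example order for both runs

w_sgd = run(sgd_step, X, Y, order)
w_perc = run(perceptron_step, X, Y, order)
same = np.allclose(w_sgd, w_perc)  # the two weight vectors should coincide
```

With a step size other than 1, the SGD iterates started from w = 0 are just the Perceptron iterates scaled by eta, so the sign of w . x, and therefore every prediction and every mistake, is unchanged.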
