Question: 4 Classification. Suppose we have a classification problem with classes labeled $1, \dots, c$ and an additional doubt category labeled $c + 1$. Let $r : \mathbb{R}^d \to$ …

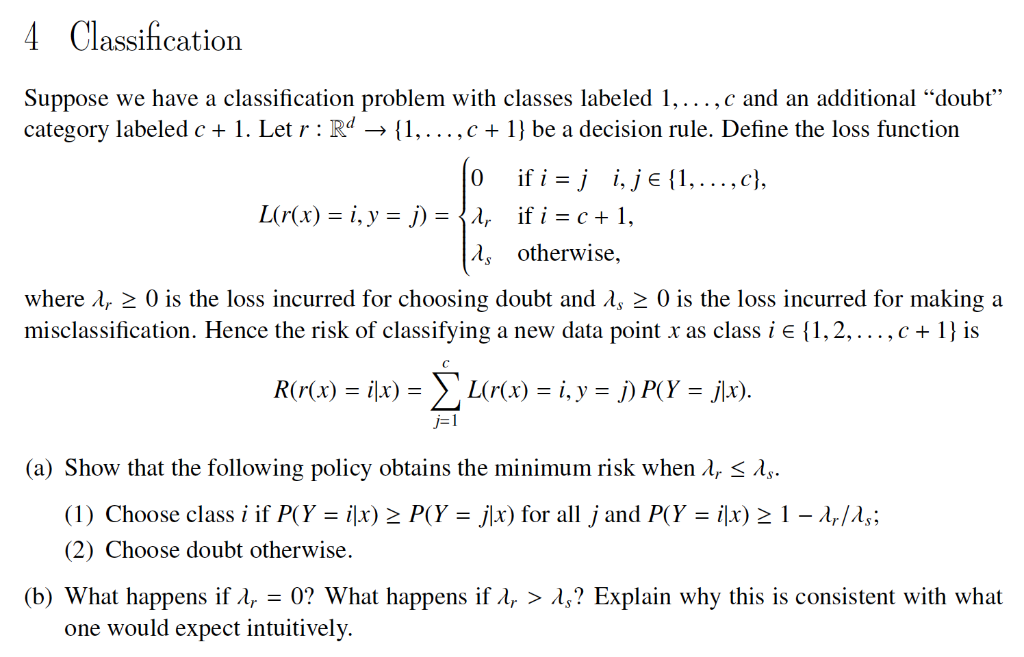

4 Classification

Suppose we have a classification problem with classes labeled $1, \dots, c$ and an additional "doubt" category labeled $c + 1$. Let $r : \mathbb{R}^d \to \{1, \dots, c + 1\}$ be a decision rule. Define the loss function

$$L(r(x) = i, y = j) = \begin{cases} 0 & \text{if } i = j, \quad i, j \in \{1, \dots, c\}, \\ \lambda_r & \text{if } i = c + 1, \\ \lambda_s & \text{otherwise,} \end{cases}$$

where $\lambda_r \ge 0$ is the loss incurred for choosing doubt and $\lambda_s \ge 0$ is the loss incurred for making a misclassification. Hence the risk of classifying a new data point $x$ as class $i \in \{1, 2, \dots, c + 1\}$ is

$$R(r(x) = i \mid x) = \sum_{j=1}^{c} L(r(x) = i, y = j)\, P(Y = j \mid x).$$

(a) Show that the following policy obtains the minimum risk when $\lambda_r \le \lambda_s$:
(1) Choose class $i$ if $P(Y = i \mid x) \ge P(Y = j \mid x)$ for all $j$ and $P(Y = i \mid x) \ge 1 - \lambda_r / \lambda_s$.
(2) Choose doubt otherwise.

(b) What happens if $\lambda_r = 0$? What happens if $\lambda_r > \lambda_s$? Explain why this is consistent with what one would expect intuitively.
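The policy in part (a) can be checked numerically before attempting a proof. The sketch below is illustrative only and is not part of the original problem. It assumes the loss is 0 for a correct class, $\lambda_r$ for doubt, and $\lambda_s$ for any other choice, so that the risk of choosing class $i$ is $\lambda_s (1 - P(Y = i \mid x))$ and the risk of doubt is $\lambda_r$. The function names `risks` and `policy`, and the 0-based indexing with doubt at index `c`, are hypothetical choices made for this example.

```python
import numpy as np

def risks(posterior, lam_r, lam_s):
    """Risk of each action given P(Y = j | x) for j = 1..c.

    Entries 0..c-1 are the risks of choosing each class; entry c is doubt.
    Assumes the 0 / lam_r / lam_s loss described in the lead-in.
    """
    posterior = np.asarray(posterior, dtype=float)
    class_risks = lam_s * (1.0 - posterior)  # lam_s times the mass on the other classes
    return np.append(class_risks, lam_r)     # doubt costs lam_r whatever Y is

def policy(posterior, lam_r, lam_s):
    """Rule from part (a): most probable class if confident enough, else doubt.

    Returns a 0-based class index, or c (the number of classes) for doubt.
    """
    posterior = np.asarray(posterior, dtype=float)
    i = int(np.argmax(posterior))
    # Same test as P(Y = i | x) >= 1 - lam_r / lam_s when lam_s > 0,
    # written without a division so that lam_s = 0 does not raise an error.
    if lam_s * (1.0 - posterior[i]) <= lam_r:
        return i
    return len(posterior)

p = [0.7, 0.2, 0.1]
policy(p, lam_r=0.2, lam_s=1.0)  # doubt (index 3): 0.7 < 1 - 0.2/1.0 = 0.8
policy(p, lam_r=0.5, lam_s=1.0)  # class 0: 0.7 >= 1 - 0.5/1.0 = 0.5
policy(p, lam_r=0.0, lam_s=1.0)  # doubt: doubting is free, so it is chosen unless the posterior is certain
policy(p, lam_r=2.0, lam_s=1.0)  # class 0: doubt costs more than any mistake, so it is never chosen
```

Comparing `policy(p, lam_r, lam_s)` against `np.argmin(risks(p, lam_r, lam_s))` on random posteriors is a quick sanity check of part (a), and the last two calls mirror the two cases asked about in part (b).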
