
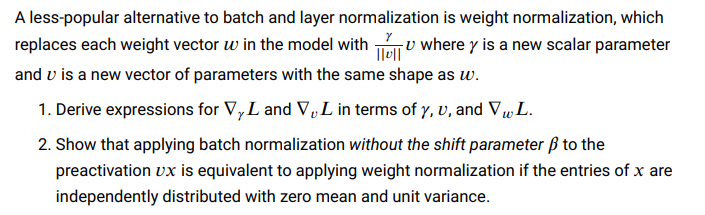

A less-popular alternative to batch and layer normalization is weight normalization, which replaces each weight vector $\mathbf{w}$ in the model with $g\,\mathbf{v}/\lVert\mathbf{v}\rVert$, where $g$ is a new scalar parameter and $\mathbf{v}$ is a new vector of parameters with the same shape as $\mathbf{w}$.

1. Derive expressions for $\nabla_g L$ and $\nabla_{\mathbf{v}} L$ in terms of $g$, $\mathbf{v}$, and $\nabla_{\mathbf{w}} L$.
2. Show that applying batch normalization without the shift parameter to the pre-activation $\mathbf{v}^\top\mathbf{x}$ is equivalent to applying weight normalization if the entries of $\mathbf{x}$ are independently distributed with zero mean and unit variance.

Step by Step Solution
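
One standard chain-rule derivation for part 1, offered as a sketch (not necessarily the route the original answer takes). Write $\mathbf{w} = g\,\mathbf{v}/\lVert\mathbf{v}\rVert$ componentwise, use $\partial\lVert\mathbf{v}\rVert/\partial v_j = v_j/\lVert\mathbf{v}\rVert$, and sum each partial derivative against $\partial L/\partial w_i$:

$$
\begin{aligned}
\frac{\partial w_i}{\partial g} &= \frac{v_i}{\lVert\mathbf{v}\rVert},
\qquad
\frac{\partial w_i}{\partial v_j} = g\left(\frac{\delta_{ij}}{\lVert\mathbf{v}\rVert} - \frac{v_i v_j}{\lVert\mathbf{v}\rVert^{3}}\right),\\[4pt]
\nabla_g L &= \sum_i \frac{\partial L}{\partial w_i}\,\frac{\partial w_i}{\partial g}
= \frac{\mathbf{v}^\top \nabla_{\mathbf{w}} L}{\lVert\mathbf{v}\rVert},\\[4pt]
\nabla_{\mathbf{v}} L &= \frac{g}{\lVert\mathbf{v}\rVert}\,\nabla_{\mathbf{w}} L
- \frac{g\,(\mathbf{v}^\top \nabla_{\mathbf{w}} L)}{\lVert\mathbf{v}\rVert^{3}}\,\mathbf{v}
= \frac{g}{\lVert\mathbf{v}\rVert}\,\nabla_{\mathbf{w}} L - \frac{g\,\nabla_g L}{\lVert\mathbf{v}\rVert^{2}}\,\mathbf{v}.
\end{aligned}
$$

Equivalently, $\nabla_{\mathbf{v}} L = \frac{g}{\lVert\mathbf{v}\rVert}\bigl(\mathbf{I} - \mathbf{v}\mathbf{v}^\top/\lVert\mathbf{v}\rVert^{2}\bigr)\nabla_{\mathbf{w}} L$: a rescaled projection of $\nabla_{\mathbf{w}} L$ onto the subspace orthogonal to $\mathbf{v}$, so $\mathbf{v}^\top\nabla_{\mathbf{v}} L = 0$.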

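As a sanity check on the part 1 formulas, they can be compared against central finite differences. The sketch below is plain Python (no NumPy) and assumes a hypothetical least-squares loss $L(\mathbf{w}) = \tfrac12\lVert X\mathbf{w}-\mathbf{y}\rVert^2$ on random data; the loss, the data, and helper names such as `analytic_grads` and `numeric_grads` are illustrative assumptions, not part of the question.

```python
import math
import random

random.seed(0)

# Hypothetical toy loss L(w) = 0.5 * ||X w - y||^2 (least squares), chosen
# only because its gradient w.r.t. w is easy to write by hand.
N, D = 20, 5
X = [[random.gauss(0, 1) for _ in range(D)] for _ in range(N)]
y = [random.gauss(0, 1) for _ in range(N)]


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def norm(a):
    return math.sqrt(dot(a, a))


def loss_w(w):
    return 0.5 * sum((dot(row, w) - yi) ** 2 for row, yi in zip(X, y))


def grad_w(w):
    # dL/dw = X^T (X w - y)
    r = [dot(row, w) - yi for row, yi in zip(X, y)]
    return [sum(X[n][j] * r[n] for n in range(N)) for j in range(D)]


def weight_norm(g, v):
    # Weight-normalization reparameterization: w = g * v / ||v||
    scale = g / norm(v)
    return [scale * vi for vi in v]


def analytic_grads(g, v):
    # The derived expressions:
    #   dL/dg = (v . grad_w L) / ||v||
    #   dL/dv = (g / ||v||) grad_w L - (g * dL/dg / ||v||^2) v
    nv = norm(v)
    gw = grad_w(weight_norm(g, v))
    dg = dot(v, gw) / nv
    dv = [(g / nv) * gwi - (g * dg / nv**2) * vi for gwi, vi in zip(gw, v)]
    return dg, dv


def numeric_grads(g, v, eps=1e-6):
    # Central finite differences of (g, v) -> loss_w(weight_norm(g, v))
    def f(g_, v_):
        return loss_w(weight_norm(g_, v_))

    dg = (f(g + eps, v) - f(g - eps, v)) / (2 * eps)
    dv = []
    for j in range(len(v)):
        v_plus, v_minus = list(v), list(v)
        v_plus[j] += eps
        v_minus[j] -= eps
        dv.append((f(g, v_plus) - f(g, v_minus)) / (2 * eps))
    return dg, dv


g0 = 1.7
v0 = [random.gauss(0, 1) for _ in range(D)]
ana_g, ana_v = analytic_grads(g0, v0)
num_g, num_v = numeric_grads(g0, v0)

err_g = abs(ana_g - num_g)
err_v = max(abs(a - b) for a, b in zip(ana_v, num_v))
print(f"|dL/dg error| = {err_g:.1e}, max |dL/dv error| = {err_v:.1e}")
# Side check: the v-gradient is orthogonal to v.
print(f"v . dL/dv = {dot(v0, ana_v):.1e}")
```

Both errors should be on the order of finite-difference noise, and $\mathbf{v}^\top\nabla_{\mathbf{v}}L$ should vanish up to floating-point rounding.
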
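
A sketch of part 2, treating the batch mean and variance as their population (large-batch) values and ignoring the small $\epsilon$ usually added to the variance. Batch normalization without the shift parameter maps the pre-activation $a = \mathbf{v}^\top\mathbf{x}$ to $\gamma\,(a - \mu)/\sigma$, where $\gamma$ is the learned scale. With independent, zero-mean, unit-variance entries of $\mathbf{x}$:

$$
\begin{aligned}
\mu &= \mathbb{E}[\mathbf{v}^\top\mathbf{x}] = \sum_i v_i\,\mathbb{E}[x_i] = 0,\\[4pt]
\sigma^2 &= \operatorname{Var}\Bigl(\sum_i v_i x_i\Bigr) = \sum_i v_i^2 \operatorname{Var}(x_i) = \lVert\mathbf{v}\rVert^2
\quad\text{(cross-covariances vanish by independence)},\\[4pt]
\mathrm{BN}(\mathbf{v}^\top\mathbf{x}) &= \gamma\,\frac{\mathbf{v}^\top\mathbf{x} - 0}{\lVert\mathbf{v}\rVert}
= \Bigl(\gamma\,\frac{\mathbf{v}}{\lVert\mathbf{v}\rVert}\Bigr)^{\!\top}\mathbf{x}.
\end{aligned}
$$

The last expression is exactly a weight-normalized layer $\mathbf{w}^\top\mathbf{x}$ with $\mathbf{w} = g\,\mathbf{v}/\lVert\mathbf{v}\rVert$ and $g = \gamma$.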

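A quick Monte Carlo check of part 2, again as a plain-Python sketch. It draws a large batch whose entries are independent and uniform on $[-\sqrt3,\sqrt3]$ (zero mean, unit variance, deliberately non-Gaussian), applies shift-free batch normalization with batch statistics to $\mathbf{v}^\top\mathbf{x}$, and compares the result with a weight-normalized layer using $g = \gamma$. The batch size, the value of `gamma`, the `eps` default, and the helper `batch_norm_no_shift` are assumptions for illustration.

```python
import math
import random

random.seed(1)

D, B = 5, 100_000  # input dimension, batch size (illustrative values)
gamma = 2.5        # BN scale parameter (hypothetical value)
v = [random.gauss(0, 1) for _ in range(D)]
norm_v = math.sqrt(sum(vi * vi for vi in v))

# Inputs with independent entries, zero mean, unit variance:
# uniform on [-sqrt(3), sqrt(3)] has variance 3 / 3 = 1.
r = math.sqrt(3.0)
batch = [[random.uniform(-r, r) for _ in range(D)] for _ in range(B)]

# Pre-activations a = v^T x for every example in the batch.
a = [sum(vi * xi for vi, xi in zip(v, x)) for x in batch]


def batch_norm_no_shift(vals, gamma, eps=1e-5):
    # BN without the shift (beta) term: gamma * (a - mean) / sqrt(var + eps),
    # using the batch mean and the biased (divide-by-B) batch variance.
    mu = sum(vals) / len(vals)
    var = sum((t - mu) ** 2 for t in vals) / len(vals)
    out = [gamma * (t - mu) / math.sqrt(var + eps) for t in vals]
    return out, mu, var


bn_out, mu, var = batch_norm_no_shift(a, gamma)

# Weight normalization with g = gamma: (g * v / ||v||)^T x = gamma * a / ||v||
wn_out = [gamma * t / norm_v for t in a]

print(f"batch mean {mu:.4f} (theory 0), "
      f"batch var {var:.4f} (theory {norm_v**2:.4f})")
max_diff = max(abs(p - q) for p, q in zip(bn_out, wn_out))
print(f"max |BN - WN| over the batch: {max_diff:.4f}")
```

With a finite batch the two outputs agree only approximately, because the batch mean and variance fluctuate around $0$ and $\lVert\mathbf{v}\rVert^2$ by roughly $O(1/\sqrt{B})$; the gap shrinks as the batch grows.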