Question:

Create a tokenizer

Write a function tokenize that takes a string and returns a list of tokens.

def tokenize(doc):
    return
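The question does not say which tokenization rule is expected, so the following is only a minimal sketch of one way to fill in the stub. It assumes tokens are lowercase runs of letters, digits, and apostrophes; the regular expression is an illustrative choice, not part of the assignment.

```python
import re

def tokenize(doc):
    """Lowercase the text and return runs of letters, digits, and apostrophes."""
    # Assumption: punctuation and whitespace only separate tokens.
    return re.findall(r"[a-z0-9']+", doc.lower())

print(tokenize("FREE entry! Text WIN to 80086 now"))
# ['free', 'entry', 'text', 'win', 'to', '80086', 'now']
```

Lowercasing merges "FREE" and "free" into one token, which keeps the vocabulary small but discards capitalization as a possible SPAM signal.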

Calculate token scores

Calculate scores for every token in the corpus, using the method discussed in class. Store these scores in a dictionary called token_scores.

token_scores = {}
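The "method discussed in class" is not given in the question, so the sketch below substitutes a common choice: for each token, the log of its add-one-smoothed frequency in SPAM messages divided by its smoothed frequency in HAM messages. The helper `compute_token_scores`, the regex tokenizer, and the four-row `toy_corpus` are all hypothetical stand-ins; with the real data, `sms_corpus` would take the place of `toy_corpus`.

```python
import math
import re
from collections import Counter

def tokenize(doc):
    # Assumed tokenizer: lowercase runs of letters, digits, and apostrophes.
    return re.findall(r"[a-z0-9']+", doc.lower())

# Invented [label, text] rows with the same shape as sms_corpus.
toy_corpus = [
    ["spam", "WIN a free prize now"],
    ["spam", "free entry text WIN"],
    ["ham", "are you free for lunch"],
    ["ham", "see you at home now"],
]

def compute_token_scores(corpus):
    """Return {token: log P(token|spam) - log P(token|ham)} with add-one smoothing."""
    spam_counts, ham_counts = Counter(), Counter()
    for label, text in corpus:
        target = spam_counts if label == "spam" else ham_counts
        target.update(tokenize(text))
    vocab = set(spam_counts) | set(ham_counts)
    # Add-one smoothing: every token gets one extra count in each class.
    spam_total = sum(spam_counts.values()) + len(vocab)
    ham_total = sum(ham_counts.values()) + len(vocab)
    return {
        tok: math.log((spam_counts[tok] + 1) / spam_total)
             - math.log((ham_counts[tok] + 1) / ham_total)
        for tok in vocab
    }

token_scores = compute_token_scores(toy_corpus)
print(token_scores["win"] > 0, token_scores["you"] < 0)
```

Under this assumed scheme a positive score means the token is relatively more frequent in SPAM, and a negative score means it is relatively more frequent in HAM.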

Create a score message function

Write a function score_message that takes an SMS message doc and returns a SPAM score, using the method discussed in class.

def score_message(doc):
    return
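As a rough illustration of how such a function might look, the sketch below assumes the SPAM score of a message is the sum of its tokens' scores, with unseen tokens contributing nothing. Whether that matches the method from class is not stated in the question. The values in `token_scores` here are made up for the example; in the exercise they would be computed from the corpus.

```python
import re

def tokenize(doc):
    # Assumed tokenizer: lowercase runs of letters, digits, and apostrophes.
    return re.findall(r"[a-z0-9']+", doc.lower())

# Hypothetical scores for illustration only (positive leans SPAM, negative leans HAM).
token_scores = {"free": 1.2, "win": 2.0, "prize": 1.5, "lunch": -1.8, "you": -0.9}

def score_message(doc):
    """Sum the scores of the message's tokens; tokens without a score count as 0."""
    return sum(token_scores.get(tok, 0.0) for tok in tokenize(doc))

print(score_message("WIN a free prize"))  # positive, so it leans SPAM
print(score_message("lunch with you?"))   # negative, so it leans HAM
```

With log-ratio token scores, summing corresponds to multiplying the per-token likelihood ratios, so a threshold of 0 is a natural (though not the only) decision boundary.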

- What tokens are most predictive of a message being SPAM? (coding)

- What tokens are most predictive of a message being HAM? (coding)

- How many documents are misclassified by the model? (coding)
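The three coding questions above could be approached along the following lines. This is a sketch on an invented four-message `toy_corpus`, and it assumes smoothed log-ratio token scores and a decision threshold of 0, neither of which is confirmed by the question. With the real data, `sms_corpus` would replace `toy_corpus`.

```python
import math
import re
from collections import Counter

def tokenize(doc):
    # Assumed tokenizer: lowercase runs of letters, digits, and apostrophes.
    return re.findall(r"[a-z0-9']+", doc.lower())

# Invented [label, text] rows standing in for sms_corpus.
toy_corpus = [
    ["spam", "WIN a free prize now"],
    ["spam", "free entry text WIN"],
    ["ham", "are you free for lunch"],
    ["ham", "see you at home now"],
]

# Assumed scoring: add-one-smoothed log ratio of SPAM vs. HAM token frequency.
counts = {"spam": Counter(), "ham": Counter()}
for label, text in toy_corpus:
    counts[label].update(tokenize(text))
vocab = sorted(set(counts["spam"]) | set(counts["ham"]))
totals = {lab: sum(c.values()) + len(vocab) for lab, c in counts.items()}
token_scores = {
    tok: math.log((counts["spam"][tok] + 1) / totals["spam"])
         - math.log((counts["ham"][tok] + 1) / totals["ham"])
    for tok in vocab
}

def score_message(doc):
    return sum(token_scores.get(tok, 0.0) for tok in tokenize(doc))

# Highest scores are the most SPAM-predictive, lowest the most HAM-predictive.
ranked = sorted(token_scores, key=token_scores.get, reverse=True)
top_spam = ranked[:3]
top_ham = ranked[::-1][:3]

# A message is misclassified when the predicted label differs from the true one.
misclassified = [
    (label, text) for label, text in toy_corpus
    if ("spam" if score_message(text) > 0 else "ham") != label
]
print("most SPAM-like:", top_spam)
print("most HAM-like:", top_ham)
print("misclassified:", len(misclassified))
```

Counting errors on the same messages used to compute the scores tends to understate the error rate; holding out part of the corpus for evaluation would give a more honest number.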

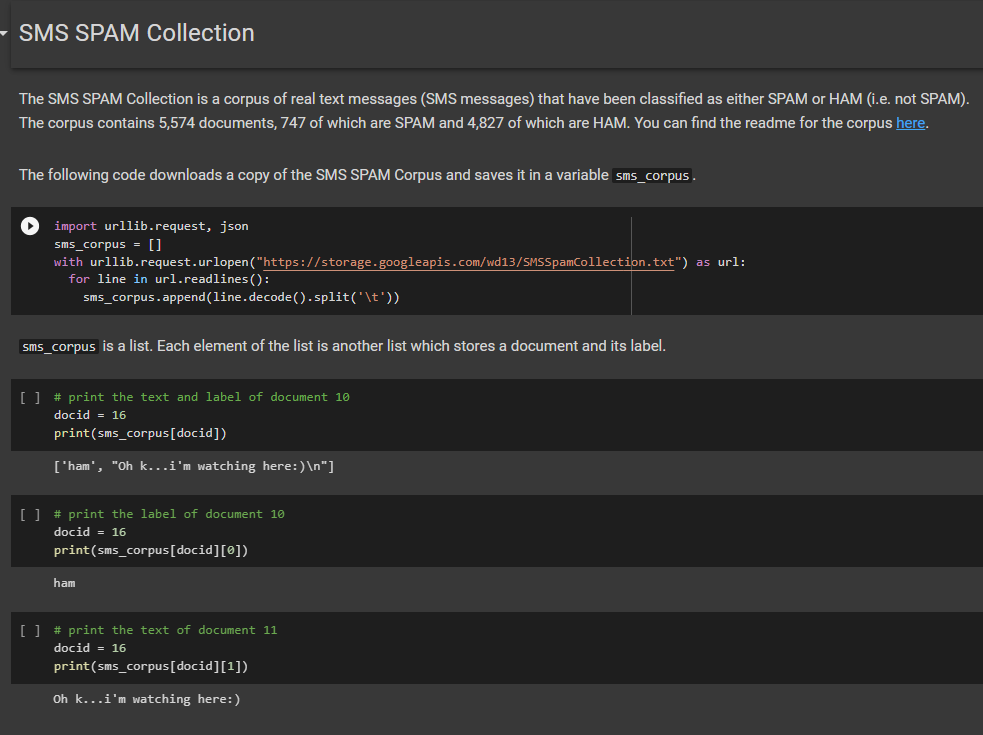

import urllib.request

sms_corpus = []
with urllib.request.urlopen("https://storage.googleapis.com/wd13/SMSSpamCollection.txt") as url:
    for line in url.readlines():
        # each line is "<label><TAB><text>"; drop the line ending, then split on the tab
        sms_corpus.append(line.decode().rstrip('\r\n').split('\t'))

# print the label and text of document 16
docid = 16
print(sms_corpus[docid])

# print the label of document 16
docid = 16
print(sms_corpus[docid][0])

# print the text of document 16
docid = 16
print(sms_corpus[docid][1])

- Can you get better results by improving your tokenizer?
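One direction worth trying, sketched here without any claim that it improves results on this corpus: collapse every number and URL into a placeholder token and keep a few symbols that often appear in promotional texts. The function name `tokenize_v2`, the placeholders `<num>` and `<url>`, and the choice of symbols are all hypothetical.

```python
import re

def tokenize_v2(doc):
    """Tokenize with numbers and URLs collapsed into placeholder tokens."""
    text = doc.lower()
    # Replace URLs first so digits inside them are not split off separately.
    text = re.sub(r"https?://\S+|www\.\S+", " <url> ", text)
    # Every run of digits becomes the same token, so phone numbers share statistics.
    text = re.sub(r"\d+", " <num> ", text)
    # Keep placeholders, words, and a few symbols assumed to be informative.
    return re.findall(r"<url>|<num>|[a-z']+|[£$!]", text)

print(tokenize_v2("Call 08001234567 now! Win £1000 at www.example.com"))
# ['call', '<num>', 'now', '!', 'win', '£', '<num>', 'at', '<url>']
```

Whether a change like this helps can only be judged by recomputing the token scores and recounting the misclassified messages with the new tokenizer.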
