Question: Gradient descent

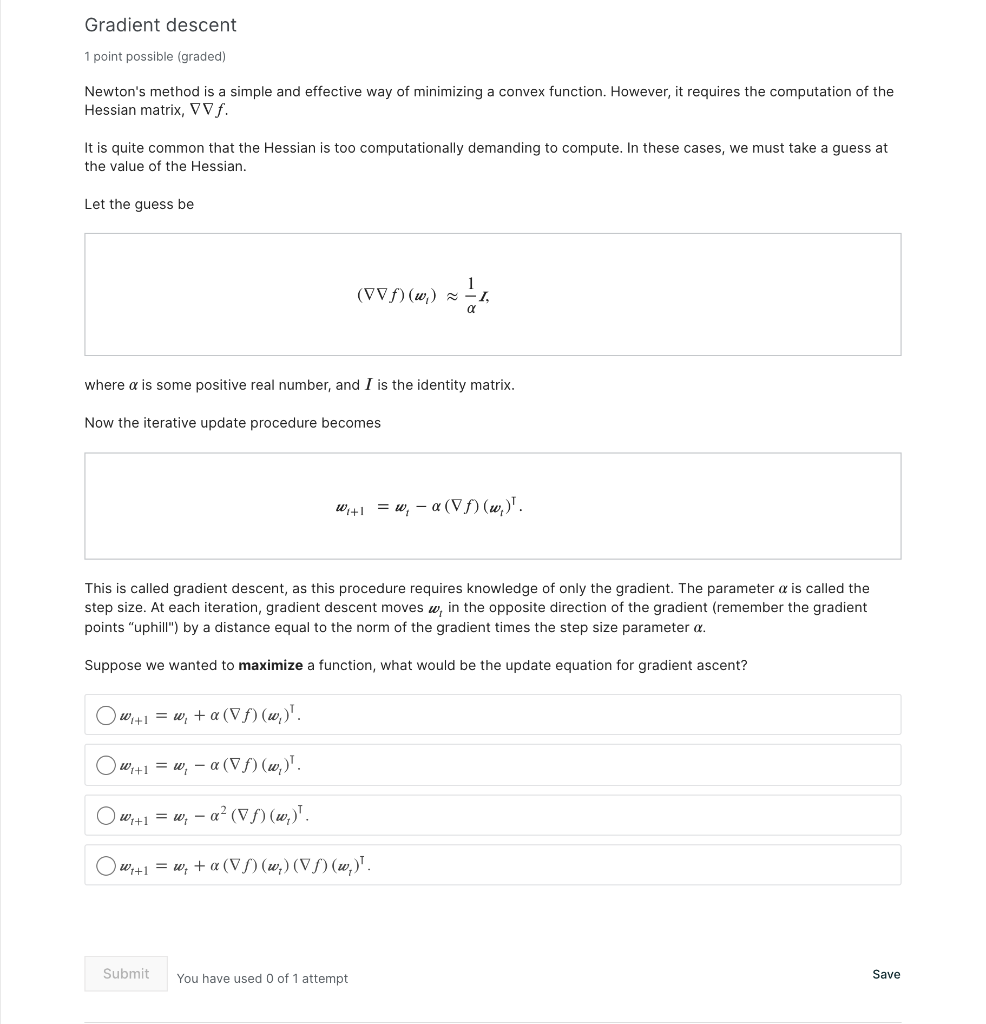

Gradient descent (1 point possible, graded)

Newton's method is a simple and effective way of minimizing a convex function. However, it requires the computation of the Hessian matrix, $\nabla\nabla f$. It is quite common that the Hessian is too computationally demanding to compute. In these cases, we must take a guess at the value of the Hessian. Let the guess be $(\nabla\nabla f)(w) \approx \frac{1}{\alpha} I$, where $\alpha$ is some positive real number and $I$ is the identity matrix. Now the iterative update procedure becomes

$$w_{i+1} = w_i - \alpha\,(\nabla f)(w_i)^{T}.$$

This is called gradient descent, as this procedure requires knowledge of only the gradient. The parameter $\alpha$ is called the step size. At each iteration, gradient descent moves $w_i$ in the opposite direction of the gradient (remember the gradient points "uphill") by a distance equal to the norm of the gradient times the step size parameter $\alpha$.

Suppose we wanted to maximize a function. What would be the update equation for gradient ascent?

- $w_{i+1} = w_i + \alpha\,(\nabla f)(w_i)^{T}$
- $w_{i+1} = w_i - \alpha\,(\nabla f)(w_i)^{T}$
- $w_{i+1} = w_i \;[\ldots]\; \alpha^{2}\,(\nabla f)(w_i)^{T}$ (operator not legible in the source)
- $w_{i+1} = w_i + \alpha\,(\nabla f)(w_i)\,(\nabla f)(w_i)^{T}$
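To make the update rule concrete, here is a minimal Python sketch of the iteration described above. The helper names (`gradient_step`, `iterate`), the quadratic test function, the step size 0.1, and the 200 iterations are all assumptions chosen for illustration and are not part of the original problem. The only difference between descent and ascent in this sketch is the sign applied to the gradient term: descent steps against the gradient, ascent steps along it.

```python
from typing import Callable, List

Vector = List[float]


def gradient_step(w: Vector, grad: Callable[[Vector], Vector],
                  alpha: float, ascent: bool = False) -> Vector:
    """One update: w - alpha * grad(w) for descent, w + alpha * grad(w) for ascent."""
    sign = 1.0 if ascent else -1.0
    g = grad(w)
    return [wi + sign * alpha * gi for wi, gi in zip(w, g)]


def iterate(w0: Vector, grad: Callable[[Vector], Vector],
            alpha: float, steps: int, ascent: bool = False) -> Vector:
    """Apply the update a fixed number of times, starting from w0."""
    w = list(w0)
    for _ in range(steps):
        w = gradient_step(w, grad, alpha, ascent)
    return w


# Hypothetical convex example: f(w) = (w0 - 1)^2 + (w1 + 2)^2, minimized at (1, -2).
def grad_f(w: Vector) -> Vector:
    return [2.0 * (w[0] - 1.0), 2.0 * (w[1] + 2.0)]


# Its negation g = -f is concave and is maximized at the same point (1, -2).
def grad_g(w: Vector) -> Vector:
    return [-gi for gi in grad_f(w)]


# Descent on f and ascent on g should both approach (1, -2).
w_min = iterate([0.0, 0.0], grad_f, alpha=0.1, steps=200)
w_max = iterate([0.0, 0.0], grad_g, alpha=0.1, steps=200, ascent=True)
```

With a fixed step size, convergence depends on the function. For this quadratic, each step shrinks the distance to the optimum by a factor of $|1 - 2\alpha|$, so any $0 < \alpha < 1$ converges; a larger step size would overshoot and diverge.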
