Question: Note that: The ReLU activation function is defined as follows: For an input x, ReLU(x) = max(0, x)

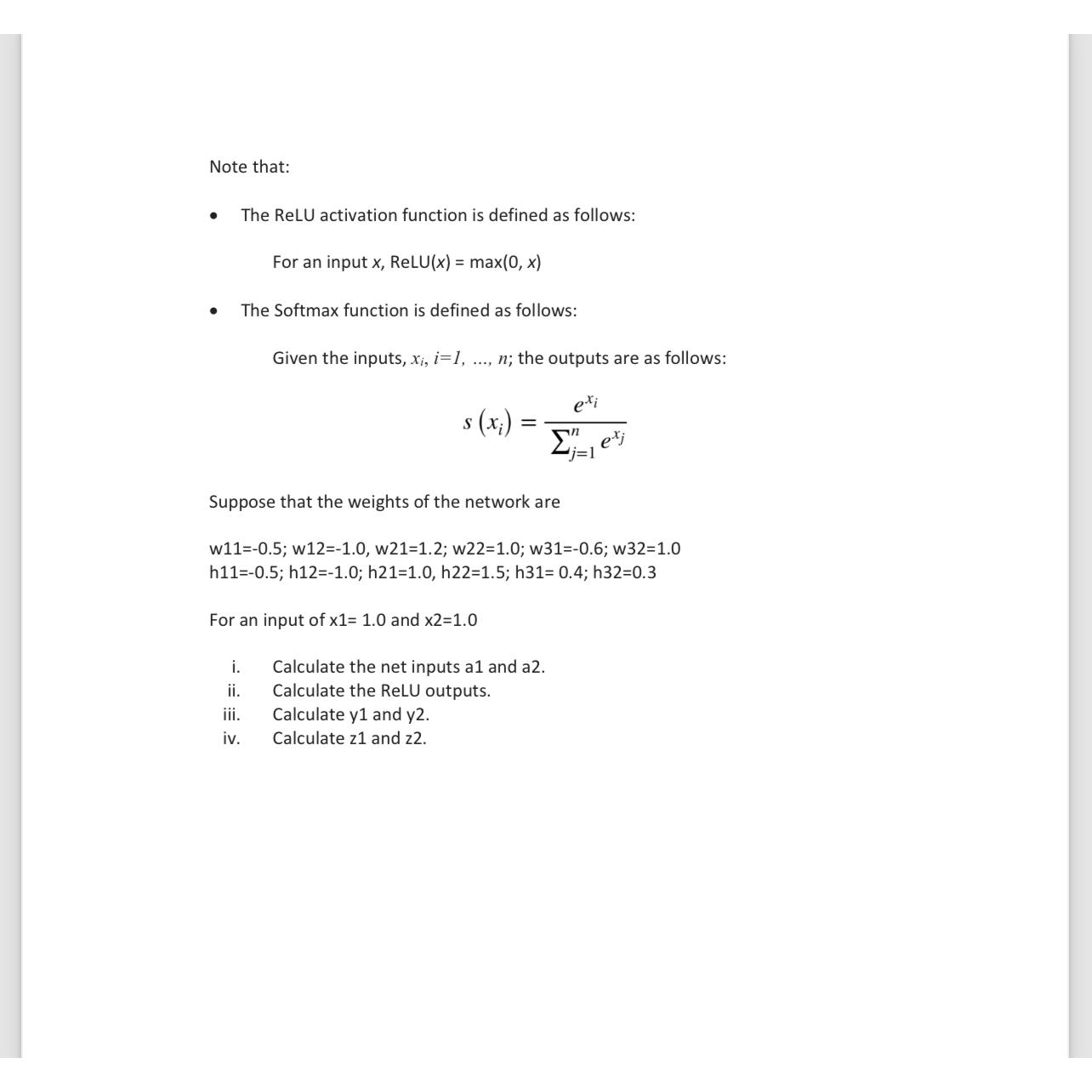

Note that:

The ReLU activation function is defined as follows:

For an input $x$, $\mathrm{ReLU}(x) = \max(0, x)$.
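As a quick illustration of the ReLU definition, here is a minimal Python sketch. The function name `relu` and the sample inputs are chosen for illustration only; they are not taken from the question.

```python
def relu(x: float) -> float:
    """Return max(0, x): negative inputs map to 0, others pass through."""
    return max(0.0, x)


# Sample inputs (illustrative, not the values from the question)
print(relu(-2.0))  # 0.0
print(relu(3.5))   # 3.5
```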

The Softmax function is defined as follows:

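Given the inputs $x_1, \dots, x_n$, the outputs are as follows:

$$\mathrm{Softmax}(x_i) = \frac{e^{x_i}}{\sum_{j=1}^{n} e^{x_j}}, \quad i = 1, \dots, n$$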
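The standard Softmax maps each input to its exponential divided by the sum of all the exponentials, so the outputs are positive and sum to 1. A minimal Python sketch follows. The name `softmax` and the sample inputs are assumptions for illustration. Subtracting the maximum before exponentiating is a common numerical-stability step and does not change the result.

```python
import math


def softmax(xs):
    """Return exp(x_i) / sum_j exp(x_j) for each x_i in xs."""
    m = max(xs)  # shift by the max for numerical stability; the result is unchanged
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


# Two equal inputs (illustrative) share the probability mass evenly
print(softmax([1.0, 1.0]))  # [0.5, 0.5]
```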

Suppose that the weights of the network are

; ; ; ;

; ; ; ;

For an input of and

i. Calculate the net inputs a and a

ii. Calculate the ReLU outputs.

iii. Calculate y and y

iv. Calculate and
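The four parts can be sketched as one forward pass in Python. This sketch rests on several assumptions, since the weights, the input, and the network diagram did not survive in the text: a network with two inputs, two ReLU hidden units, two outputs, and no bias terms, and a reading of part iv as the Softmax of the outputs. `W_HIDDEN`, `W_OUT`, and the input vector are hypothetical placeholders, not the values from the question; substitute the real ones from the figure.

```python
import math


def relu(x):
    return max(0.0, x)


def softmax(xs):
    m = max(xs)  # shift by the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def forward(x, w_hidden, w_out):
    # (i) net inputs: weighted sum of the inputs for each hidden unit
    a = [sum(w * xi for w, xi in zip(row, x)) for row in w_hidden]
    # (ii) ReLU outputs of the hidden units
    h = [relu(ai) for ai in a]
    # (iii) outputs: weighted sum of the hidden activations
    y = [sum(w * hi for w, hi in zip(row, h)) for row in w_out]
    # (iv) ASSUMPTION: the final quantities are the Softmax of the outputs
    p = softmax(y)
    return a, h, y, p


# HYPOTHETICAL placeholder weights and input, NOT the values from the question
W_HIDDEN = [[1.0, -1.0], [0.5, 0.5]]
W_OUT = [[1.0, 0.0], [0.0, 1.0]]

a, h, y, p = forward([1.0, 2.0], W_HIDDEN, W_OUT)
print("net inputs:", a)    # [-1.0, 1.5]
print("ReLU outputs:", h)  # [0.0, 1.5]
print("outputs:", y)       # [0.0, 1.5]
print("softmax:", p)
```

With these placeholder values the first hidden unit has a negative net input, so ReLU clips it to 0 and only the second unit contributes to the outputs.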
