Question:

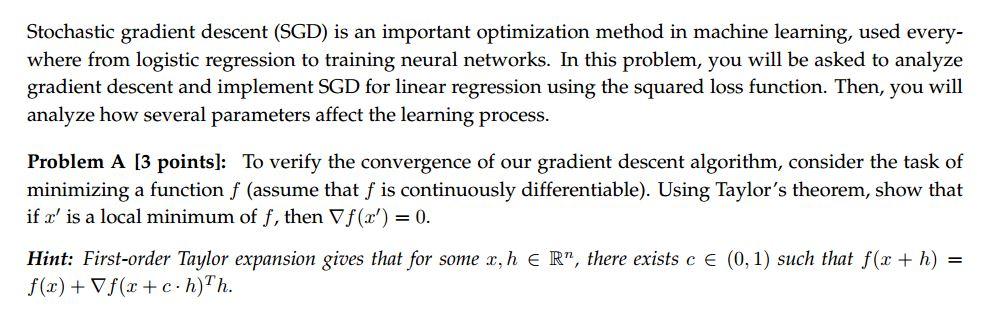

Stochastic gradient descent (SGD) is an important optimization method in machine learning, used everywhere from logistic regression to training neural networks. In this problem, you will be asked to analyze gradient descent and implement SGD for linear regression using the squared loss function. Then, you will analyze how several parameters affect the learning process.

Problem A [3 points]: To verify the convergence of our gradient descent algorithm, consider the task of minimizing a function $f$ (assume that $f$ is continuously differentiable). Using Taylor's theorem, show that if $x$ is a local minimum of $f$, then $\nabla f(x) = 0$.

Hint: First-order Taylor expansion gives that for any $x, h \in \mathbb{R}^n$, there exists $c \in (0, 1)$ such that $f(x + h) = f(x) + \nabla f(x + ch)^T h$.
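To make the setup concrete, here is a minimal sketch of SGD for linear regression with the squared loss, followed by a numerical check of the claim in Problem A (the gradient is approximately zero at the minimizer). This is not the course's reference implementation. The function names `squared_loss_gradient` and `sgd_linear_regression`, the step size `eta`, the epoch count, and the synthetic noise-free data are all assumptions chosen for illustration.

```python
import numpy as np


def squared_loss_gradient(w, X, y):
    """Gradient of the mean squared loss (1/N) * sum_i (w.x_i - y_i)^2 w.r.t. w."""
    residual = X @ w - y
    return 2.0 * X.T @ residual / len(y)


def sgd_linear_regression(X, y, eta=0.01, epochs=200, seed=0):
    """Plain SGD: one weight update per training point, reshuffled every epoch.

    eta (step size) and epochs are illustrative defaults, not values from the problem.
    """
    rng = np.random.default_rng(seed)
    w = np.zeros(X.shape[1])
    for _ in range(epochs):
        for i in rng.permutation(len(y)):
            # Gradient of the single-point loss (w.x_i - y_i)^2
            grad_i = 2.0 * (X[i] @ w - y[i]) * X[i]
            w -= eta * grad_i
    return w


# Synthetic, noise-free data (assumed for this sketch only)
data_rng = np.random.default_rng(1)
X = data_rng.normal(size=(100, 3))
true_w = np.array([1.5, -2.0, 0.5])
y = X @ true_w

w_hat = sgd_linear_regression(X, y)

# Problem A says the gradient vanishes at a local minimum; check it numerically.
grad_norm = np.linalg.norm(squared_loss_gradient(w_hat, X, y))
print("estimated weights:", w_hat)
print("gradient norm at estimate:", grad_norm)
```

Because the data here are noise-free, every single-point gradient is zero at `true_w`, so a constant step size is enough for the iterates to settle there. With noisy targets, SGD with a fixed `eta` would typically hover near the minimizer rather than converge exactly.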
