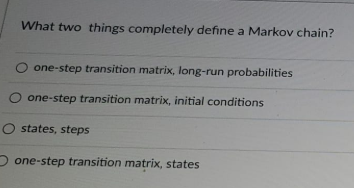

Question: What two things completely define a Markov chain?

- one-step transition matrix, long-run probabilities
- one-step transition matrix, initial conditions
- states, steps
- one-step transition matrix,
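For a time-homogeneous chain on a finite state space, the distribution at every step follows from the one-step transition matrix together with the initial distribution. The sketch below illustrates this in plain Python. The two-state matrix `P`, the starting vectors, and the helper names `step` and `distribution_after` are all assumptions made up for illustration; they do not come from the question.

```python
def step(dist, P):
    """Advance a row-vector distribution by one step: new[j] = sum_i dist[i] * P[i][j]."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]


def distribution_after(initial, P, n_steps):
    """Return the state distribution after n_steps, given the initial distribution."""
    dist = list(initial)
    for _ in range(n_steps):
        dist = step(dist, P)
    return dist


# Hypothetical one-step transition matrix; each row sums to 1.
P = [
    [0.9, 0.1],
    [0.5, 0.5],
]

# Two different (hypothetical) initial conditions for the same matrix.
start_in_0 = [1.0, 0.0]
start_in_1 = [0.0, 1.0]

after_two_a = distribution_after(start_in_0, P, 2)
after_two_b = distribution_after(start_in_1, P, 2)

print(after_two_a)
print(after_two_b)
```

The same matrix gives different two-step distributions for the two starting vectors, so the matrix alone does not pin down the chain's behavior; once the initial conditions are fixed as well, every later distribution is determined.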
