
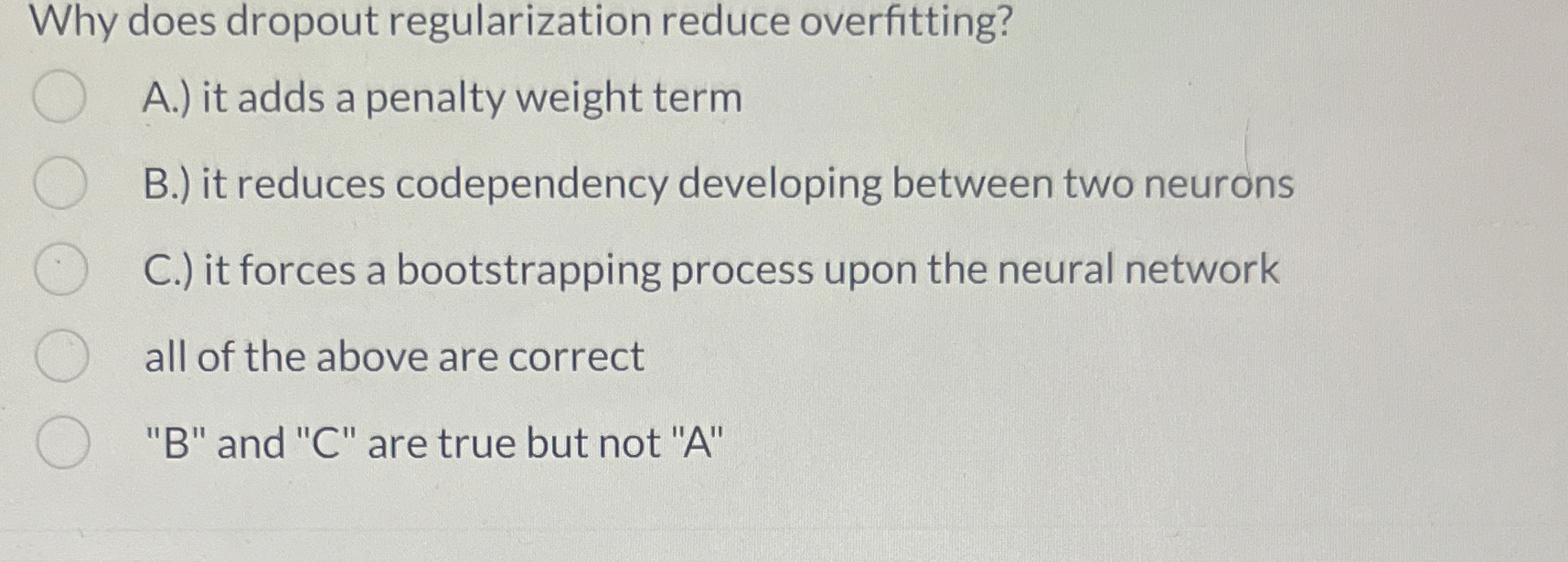

Why does dropout regularization reduce overfitting?

A. It adds a penalty weight term

B. It reduces codependency developing between two neurons

C. It forces a bootstrapping process upon the neural network

D. All of the above are correct

E. B and C are true, but not A
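For reference when weighing the options, here is a minimal sketch of standard (inverted) dropout on a single layer. It uses plain Python so it runs without a deep-learning framework. The function name `dropout_forward` and the parameter `p_drop` are hypothetical, chosen for illustration, and are not any library's API. The sketch shows the mechanism only. During training, each unit is zeroed at random with probability `p_drop` and the survivors are rescaled, so every step trains a different "thinned" sub-network and no unit can rely on a particular partner being present. At inference the layer does nothing. The change lives entirely in the forward pass, and no explicit term is added to the loss.

```python
import random
from typing import List, Optional, Tuple


def dropout_forward(
    activations: List[float],
    p_drop: float,
    training: bool = True,
    rng: Optional[random.Random] = None,
) -> Tuple[List[float], List[int]]:
    """Apply inverted dropout to one layer's activations (illustrative sketch).

    Training: each unit is zeroed independently with probability p_drop, and
    the survivors are scaled by 1 / (1 - p_drop) so each unit's expected
    output equals its input. Inference: the layer is the identity.
    Returns (outputs, keep_mask), where keep_mask[i] == 1 means unit i survived.
    """
    if not 0.0 <= p_drop < 1.0:
        raise ValueError("p_drop must be in [0, 1)")
    if not training or p_drop == 0.0:
        # Nothing is dropped outside training, so no rescaling is needed.
        return list(activations), [1] * len(activations)
    rng = rng if rng is not None else random.Random()
    scale = 1.0 / (1.0 - p_drop)
    # A fresh random mask on every call: each training step uses a different
    # thinned sub-network, so no unit can count on a specific other unit.
    mask = [1 if rng.random() >= p_drop else 0 for _ in activations]
    outputs = [a * m * scale for a, m in zip(activations, mask)]
    return outputs, mask


if __name__ == "__main__":
    rng = random.Random(0)
    hidden = [0.5, 1.0, 1.5, 2.0]
    for step in range(3):
        out, mask = dropout_forward(hidden, p_drop=0.5, rng=rng)
        # Different masks across steps mean different sub-networks are trained.
        print(f"step {step}: mask={mask} out={out}")
    # At inference time the full network is used unchanged.
    print("eval:", dropout_forward(hidden, p_drop=0.5, training=False)[0])
```

The 1 / (1 - p_drop) rescaling during training ("inverted" dropout) is what lets the layer be a plain identity at inference. PyTorch's `torch.nn.Dropout` follows the same convention and is inactive in evaluation mode.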
