Question:

You can use the following code as a framework:

import numpy as np
from numpy import genfromtxt

### Question 1 ###
# Answer Question 1 by filling in '??' below with the appropriate code

# Load data
data = genfromtxt('LRTrain.csv', delimiter=',', skip_header=1)
n = data.shape[0]      # number of training examples
d = data.shape[1]-1    # number of features (the last column is the label)

T = ??     # number of iterations
eps = ??   # tolerance
step = ??  # step size

# get feature data
x = data[:,0:d]
# get label data
y = data[:,d]

# initial weight vector
w = np.zeros(d)

## Define gradient function
def grad(w,x,y):
    g = np.zeros(d)
    for i in range(n):
        g = g + ??
    return (1)*g

## Define negative log-likelihood function
def fval(w,x,y):
    v = 0
    for i in range(n):
        v = v + ??
    return (1)*v

## Define norm of the gradient
def gradnorm(w,x,y):
    return np.linalg.norm(??)

## Perform gradient descent
for t in range(T):
    w = ??
    print("Step count: " + str(t) + ", Negative log likelihood: " + str(fval(w,x,y)))

# save final weight vector
w_hat = w

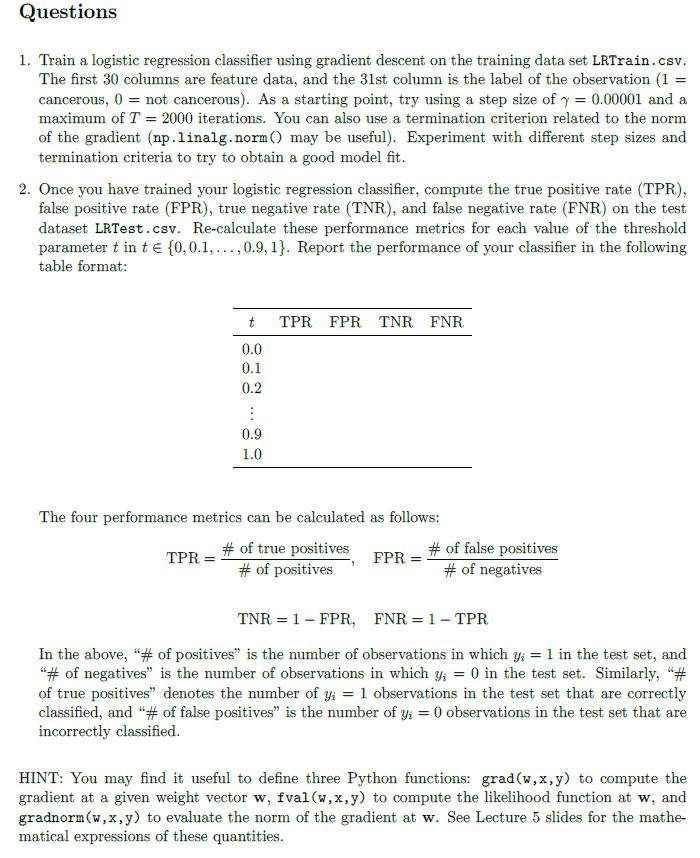
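One way the Question 1 blanks could be filled in is sketched below. It is not the official solution. It assumes labels in {0, 1} (consistent with the Question 2 code), so the per-sample negative log-likelihood term is `np.log(1+np.exp(np.dot(w,x[i,:]))) - y[i]*np.dot(w,x[i,:])` and its gradient is `(1/(1+np.exp(-np.dot(w,x[i,:]))) - y[i]) * x[i,:]`. The sketch is vectorized, averages over the n samples instead of summing (an assumption that makes the step size easier to choose), and runs on synthetic data because `LRTrain.csv` is not available here. The values of `T`, `eps`, and `step`, the helper names `sigmoid` and `gradient_descent`, and every `*_demo` variable are illustrative, not taken from the assignment.

```python
import numpy as np

def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z))
    return np.exp(-np.logaddexp(0.0, -z))

def fval(w, x, y):
    # Average negative log-likelihood for labels y in {0, 1}:
    # mean over i of log(1 + exp(w.x_i)) - y_i * (w.x_i)
    z = x @ w
    return np.mean(np.logaddexp(0.0, z) - y * z)

def grad(w, x, y):
    # Gradient of fval: mean over i of (sigmoid(w.x_i) - y_i) * x_i
    return x.T @ (sigmoid(x @ w) - y) / x.shape[0]

def gradient_descent(x, y, T=1000, eps=1e-6, step=0.5):
    # T, eps and step are illustrative defaults, not assignment values
    w = np.zeros(x.shape[1])
    for t in range(T):
        g = grad(w, x, y)
        if np.linalg.norm(g) < eps:   # stop once the gradient is small
            break
        w = w - step * g              # gradient descent update
    return w

# Synthetic stand-in for LRTrain.csv (assumption: 200 rows, 3 features)
rng = np.random.default_rng(0)
x_demo = rng.normal(size=(200, 3))
w_true_demo = np.array([1.5, -2.0, 0.5])
y_demo = (rng.random(200) < sigmoid(x_demo @ w_true_demo)).astype(float)

w_hat_demo = gradient_descent(x_demo, y_demo)
print("NLL at w = 0:   ", fval(np.zeros(3), x_demo, y_demo))
print("NLL at w_hat:   ", fval(w_hat_demo, x_demo, y_demo))
```

On the real data the 30 features have very different scales (area versus smoothness, for example), so a step size that works on this synthetic data will likely need to be much smaller, or the features rescaled first.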

### Question 2 ###

# Use the (incomplete) code below as a starting point

# Load test data
data = genfromtxt('LRTest.csv', delimiter=',', skip_header=1)
n = data.shape[0]
d = data.shape[1]-1

# get feature data
x = data[:,0:d]
# get label data
y = data[:,d]

# set threshold
t = ??

pred = np.zeros(n)

for i in range(n):
    if 1/(1+np.exp(-np.dot(w_hat,x[i,:])))>t:
        pred[i]=1
    elif 1/(1+np.exp(-np.dot(w_hat,x[i,:])))<=t:
        pred[i]=0

TP = np.zeros(n) # indicators of true positives
FP = np.zeros(n) # indicators of false positives
TN = np.zeros(n) # indicators of true negatives
FN = np.zeros(n) # indicators of false negatives

for i in range(n):
    if pred[i]==1 and y[i] == 0:
        FP[i]=1
    elif pred[i]==0 and y[i] == 1:
        FN[i]=1
    elif pred[i]==1 and y[i] == 1:
        TP[i]=1
    elif pred[i]==0 and y[i] == 0:
        TN[i]=1

# Now use TP, FP, TN, and FN to calculate TPR, FPR, TNR, and FNR.

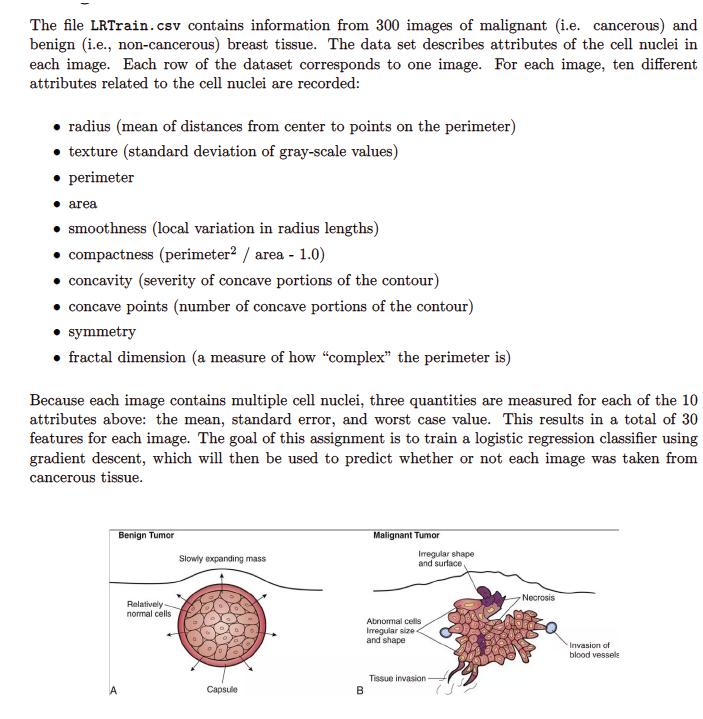
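The final step of Question 2, turning the four counts into rates, can be sketched as follows. This assumes the standard definitions TPR = TP/(TP+FN), FPR = FP/(FP+TN), TNR = TN/(TN+FP), and FNR = FN/(FN+TP), and that both classes appear in the test labels so no denominator is zero. The function name `rates` and the small `*_demo` arrays are hypothetical and only serve to make the example checkable by hand.

```python
import numpy as np

def rates(pred, y):
    # Count each outcome; equivalent to summing the indicator arrays
    # TP, FP, TN, FN built in the framework code (e.g. TP.sum()).
    tp = np.sum((pred == 1) & (y == 1))
    fp = np.sum((pred == 1) & (y == 0))
    tn = np.sum((pred == 0) & (y == 0))
    fn = np.sum((pred == 0) & (y == 1))
    tpr = tp / (tp + fn)  # true positive rate (sensitivity)
    fpr = fp / (fp + tn)  # false positive rate
    tnr = tn / (tn + fp)  # true negative rate (specificity)
    fnr = fn / (fn + tp)  # false negative rate
    return tpr, fpr, tnr, fnr

# Hand-made example: 4 positives and 4 negatives
y_demo = np.array([1, 1, 1, 0, 0, 0, 0, 1])
pred_demo = np.array([1, 0, 1, 0, 1, 0, 0, 1])

tpr, fpr, tnr, fnr = rates(pred_demo, y_demo)
print(tpr, fpr, tnr, fnr)  # 0.75 0.25 0.75 0.25
```

Note that TPR + FNR = 1 and FPR + TNR = 1, which gives a quick sanity check on the computed values.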

The file LRTrain.csv contains information from 300 images of malignant (i.e., cancerous) and benign (i.e., non-cancerous) breast tissue. The data set describes attributes of the cell nuclei in each image. Each row of the dataset corresponds to one image. For each image, ten different attributes related to the cell nuclei are recorded:

- radius (mean of distances from center to points on the perimeter)
- texture (standard deviation of gray-scale values)
- perimeter
- area
- smoothness (local variation in radius lengths)
- compactness (perimeter^2 / area - 1.0)
- concavity (severity of concave portions of the contour)
- concave points (number of concave portions of the contour)
- symmetry
- fractal dimension (a measure of how "complex" the perimeter is)

Because each image contains multiple cell nuclei, three quantities are measured for each of the 10 attributes above: the mean, standard error, and worst case value. This results in a total of 30 features for each image.

The goal of this assignment is to train a logistic regression classifier using gradient descent, which will then be used to predict whether or not each image was taken from cancerous tissue.

[Figure: benign tumor (relatively normal cells, slowly expanding mass, capsule) versus malignant tumor (irregular shape and surface, abnormal cells of irregular size and shape, tissue invasion, necrosis, invasion of blood vessels).]

Step by Step Solution


Answer: You need to fill in the blanks in the provided code for gradient descent optimization. Here's t...
