Question:

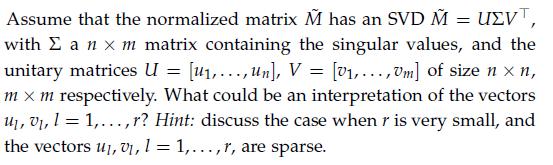

Latent semantic indexing is an SVD-based technique that can be used to discover text documents similar to each other. Assume that we are given a set of $m$ documents $D_1, \ldots, D_m$. Using a “bag-of-words” technique described in Example 2.1, we can represent each document $D_j$ by an $n$-vector $d_j$, where $n$ is the total number of distinct words appearing in the whole corpus. In this exercise, we assume that the vectors $d_j$ are constructed as follows: $d_j(i) = 1$ if word $i$ appears in document $D_j$, and $0$ otherwise. We refer to the $n \times m$ matrix $M = [d_1, \ldots, d_m]$ as the “raw” term-by-document matrix. We will also use a normalized version of that matrix:

$$\tilde M = [\tilde d_1, \ldots, \tilde d_m], \qquad \tilde d_j = \frac{d_j}{\|d_j\|_2}, \quad j = 1, \ldots, m.$$
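A minimal pure-Python sketch of how $M$ and $\tilde M$ could be built. It assumes that lowercasing plus whitespace splitting stands in for the bag-of-words step of Example 2.1 (not reproduced here). The toy corpus and the function names `term_document_matrix` and `normalize_columns` are hypothetical. Rows of `M` are words (sorted alphabetically) and columns are documents, matching the $n \times m$ layout. Every document is assumed non-empty so that $\|d_j\|_2 > 0$.

```python
import math

def term_document_matrix(docs):
    """Binary bag-of-words: M[i][j] = 1 if word i appears in document j, else 0."""
    # Assumption: lowercase + whitespace split stands in for the tokenizer of Example 2.1.
    vocab = sorted({w for doc in docs for w in doc.lower().split()})
    index = {w: i for i, w in enumerate(vocab)}
    n, m = len(vocab), len(docs)
    M = [[0] * m for _ in range(n)]
    for j, doc in enumerate(docs):
        for w in doc.lower().split():
            M[index[w]][j] = 1  # repeated words still give 1 (presence, not counts)
    return vocab, M

def normalize_columns(M):
    """Divide each column d_j by its Euclidean norm ||d_j||_2 (assumes no empty column)."""
    n, m = len(M), len(M[0])
    norms = [math.sqrt(sum(M[i][j] ** 2 for i in range(n))) for j in range(m)]
    return [[M[i][j] / norms[j] for j in range(m)] for i in range(n)]

# Hypothetical toy corpus with m = 3 documents.
docs = ["the cat sat", "the dog sat down", "a cat and a dog"]
vocab, M = term_document_matrix(docs)
M_tilde = normalize_columns(M)
```

Here $n = 7$ distinct words; the second document has four words, so each of its nonzero entries in $\tilde M$ equals $1/2$.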

Assume we are given another document, referred to as the “query document,” which is not part of the collection. We describe that query document as an $n$-dimensional vector $q$, with zeros everywhere except a 1 at the indices corresponding to the terms that appear in the query. We seek to retrieve documents that are “most similar” to the query, in some sense. We denote by $\tilde q = q/\|q\|_2$ the normalized vector.
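The query vector can be built the same way, over the corpus vocabulary. This sketch assumes a hypothetical seven-word vocabulary and simply drops query words that never occur in the corpus, since they have no index in the $n$-vector. The names `query_vector` and `normalize` are illustrative, and the query is assumed to share at least one word with the corpus so that $\|q\|_2 > 0$.

```python
import math

def query_vector(query, vocab):
    """q(i) = 1 if vocabulary word i appears in the query, 0 otherwise."""
    words = set(query.lower().split())
    # Query words outside the corpus vocabulary have no index, so they are ignored.
    return [1 if w in words else 0 for w in vocab]

def normalize(v):
    """Return v / ||v||_2 (assumes v is not the zero vector)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

vocab = ['a', 'and', 'cat', 'dog', 'down', 'sat', 'the']  # hypothetical vocabulary
q = query_vector("cat sat quietly", vocab)
q_tilde = normalize(q)
```

Since `quietly` is not in the vocabulary, $q$ has ones only at `cat` and `sat`, and each of those entries of $\tilde q$ equals $1/\sqrt{2}$.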

1. A first approach is to select the documents that contain the largest number of terms in common with the query document. Explain how to implement this approach, based on a certain matrix-vector product, which you will determine.

2. Another approach is to find the closest document by selecting the index j such that ![]() is the smallest. This approach can introduce some biases, if for example the query document is much shorter than the other documents. Hence a measure of similarity based on the normalized vectors,

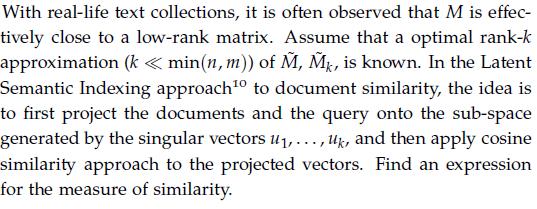

is the smallest. This approach can introduce some biases, if for example the query document is much shorter than the other documents. Hence a measure of similarity based on the normalized vectors,![]() has been proposed, under the name of “cosine similarity”. Justify the use of this name for that method, and provide a formulation based on a certain matrix-vector product, which you will determine.

has been proposed, under the name of “cosine similarity”. Justify the use of this name for that method, and provide a formulation based on a certain matrix-vector product, which you will determine.

3.

4.

$M = [d_1, \ldots, d_m]$
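One possible line of reasoning for parts 1 and 2, offered as a sketch rather than as the site's (paywalled) solution. Since $d_j$ and $q$ are 0/1 vectors, $d_j(i)\,q(i) = 1$ exactly when word $i$ appears in both, so $d_j^\top q$ counts the common terms and the vector of all counts is $M^\top q \in \mathbb{R}^m$; one selects the largest entries. For part 2, $\tilde d_j^\top \tilde q = \frac{d_j^\top q}{\|d_j\|_2 \|q\|_2} = \cos\theta_j$, where $\theta_j$ is the angle between $d_j$ and $q$, and because $\|\tilde q\|_2 = \|\tilde d_j\|_2 = 1$,

$$\|\tilde q - \tilde d_j\|_2^2 = \|\tilde q\|_2^2 + \|\tilde d_j\|_2^2 - 2\,\tilde d_j^\top \tilde q = 2\,(1 - \cos\theta_j).$$

Minimizing the distance is therefore the same as maximizing the cosine, i.e., picking the largest entry of $\tilde M^\top \tilde q$. The Python below checks both formulations on a hypothetical three-document corpus; the helper names `mat_T_vec`, `normalize`, and `normalize_columns` are illustrative.

```python
import math

def mat_T_vec(M, v):
    """Compute M^T v for an n x m matrix M stored as a list of n rows."""
    n, m = len(M), len(M[0])
    return [sum(M[i][j] * v[i] for i in range(n)) for j in range(m)]

def normalize(v):
    """Return v / ||v||_2 (assumes v is not the zero vector)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def normalize_columns(M):
    """Normalize every column of M to unit Euclidean norm."""
    cols = [normalize(list(col)) for col in zip(*M)]
    return [list(row) for row in zip(*cols)]

# Hypothetical toy data: vocabulary ['a','and','cat','dog','down','sat','the'],
# documents "the cat sat", "the dog sat down", "a cat and a dog".
M = [
    [0, 0, 1],  # a
    [0, 0, 1],  # and
    [1, 0, 1],  # cat
    [0, 1, 1],  # dog
    [0, 1, 0],  # down
    [1, 1, 0],  # sat
    [1, 1, 0],  # the
]
q = [0, 0, 1, 0, 0, 1, 0]  # query "cat sat"

# Part 1: common-term counts c = M^T q; pick the largest entry.
counts = mat_T_vec(M, q)
best_by_count = max(range(len(counts)), key=counts.__getitem__)

# Part 2: cosine similarities M~^T q~; the largest cosine gives the smallest ||q~ - d~_j||_2.
M_tilde, q_tilde = normalize_columns(M), normalize(q)
cosines = mat_T_vec(M_tilde, q_tilde)
best_by_cosine = max(range(len(cosines)), key=cosines.__getitem__)

# Squared distances, to compare against the identity 2 - 2 cos(theta_j).
sq_dists = [sum((q_tilde[i] - M_tilde[i][j]) ** 2 for i in range(len(q)))
            for j in range(len(cosines))]
```

On this toy data both approaches pick the first document: the counts are $[2, 1, 1]$ and the cosines are $2/\sqrt{6} \approx 0.816$ for the first document and $1/(2\sqrt{2}) \approx 0.354$ for the other two.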
