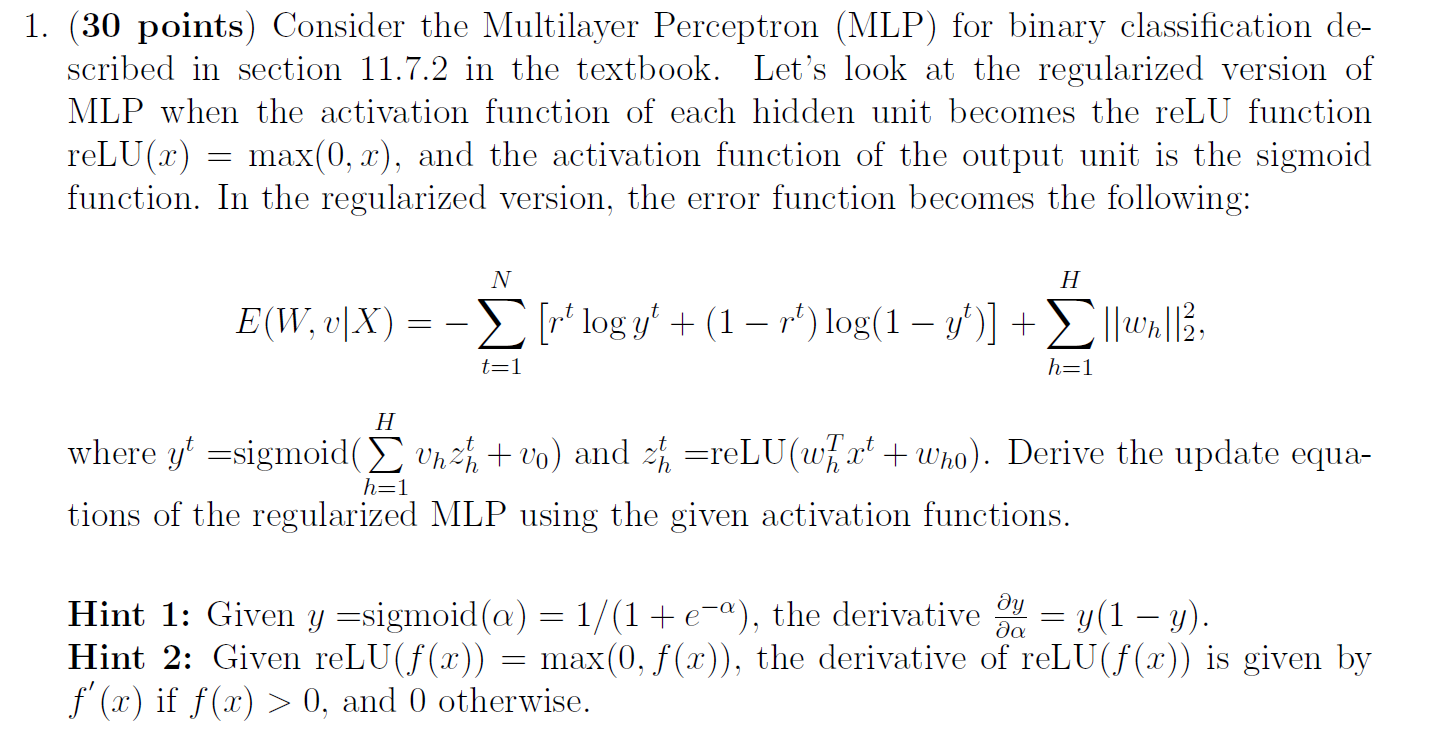

Question: 1. (30 points) Consider the Multilayer Perceptron (MLP) for binary classification described in Section 11.7.2 of the textbook. Let's look at the regularized version
