Question: A data centre design typically comprises 3 security tiers with the application functionality split between servers in each tier as shown in following diagram.

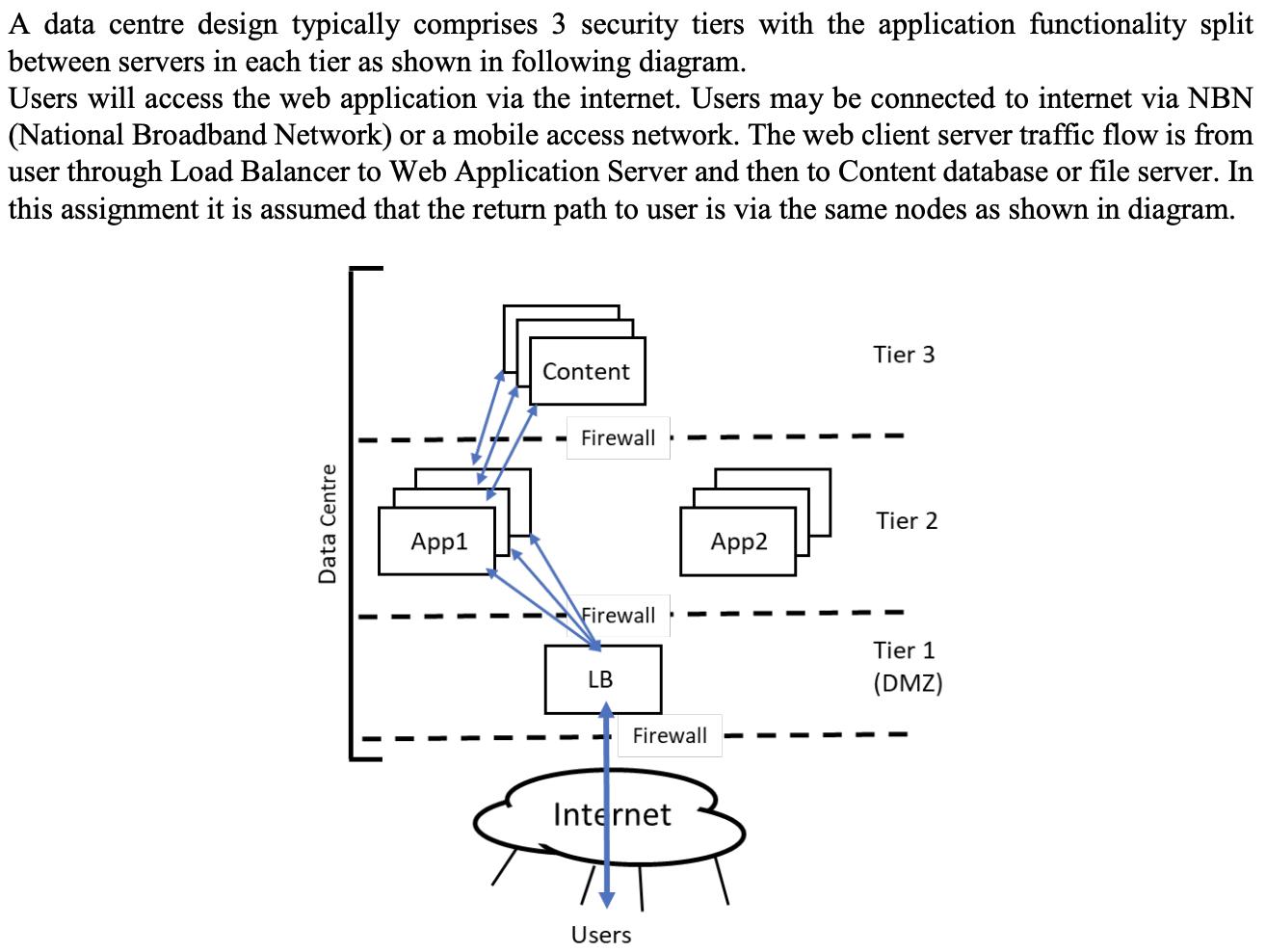

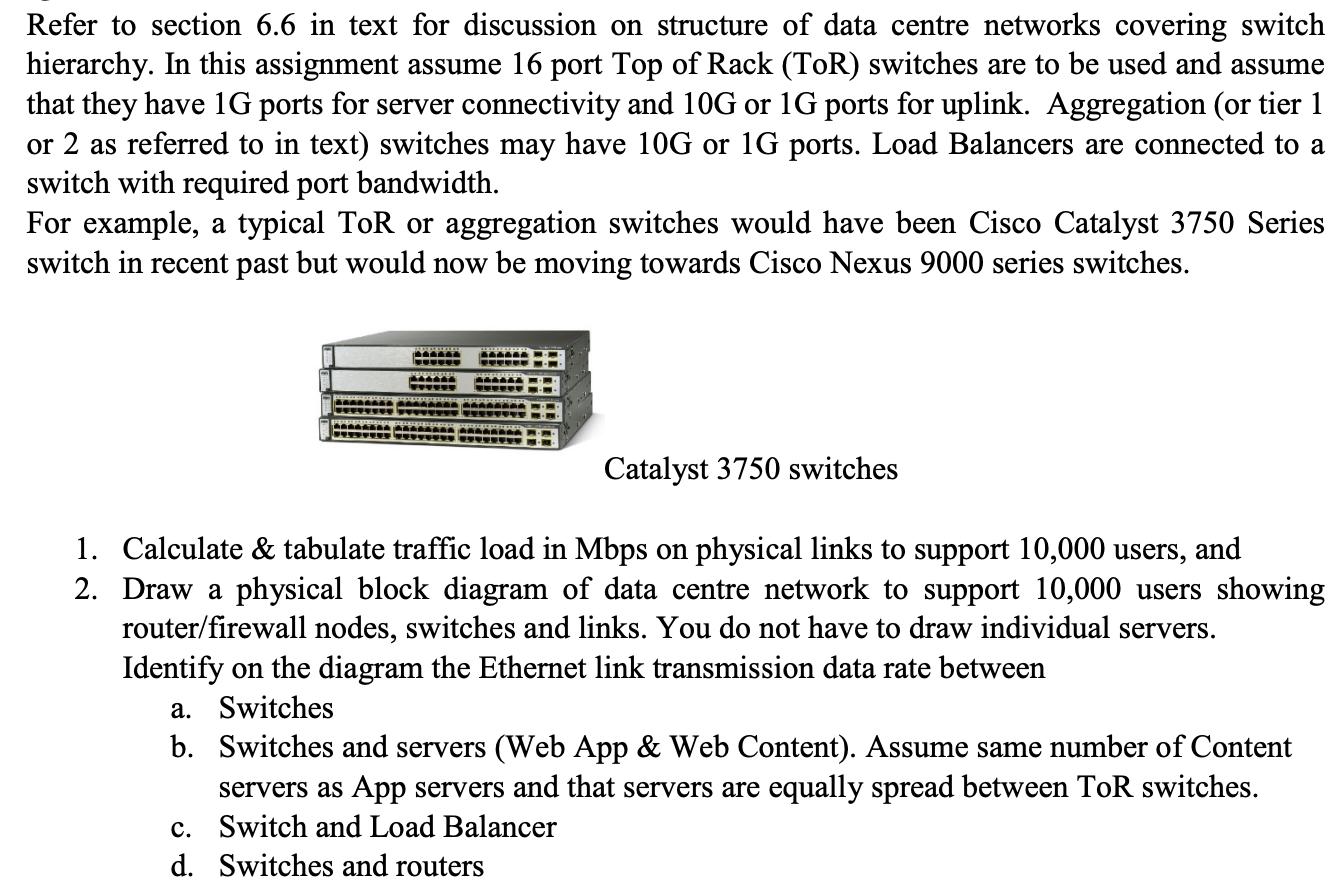

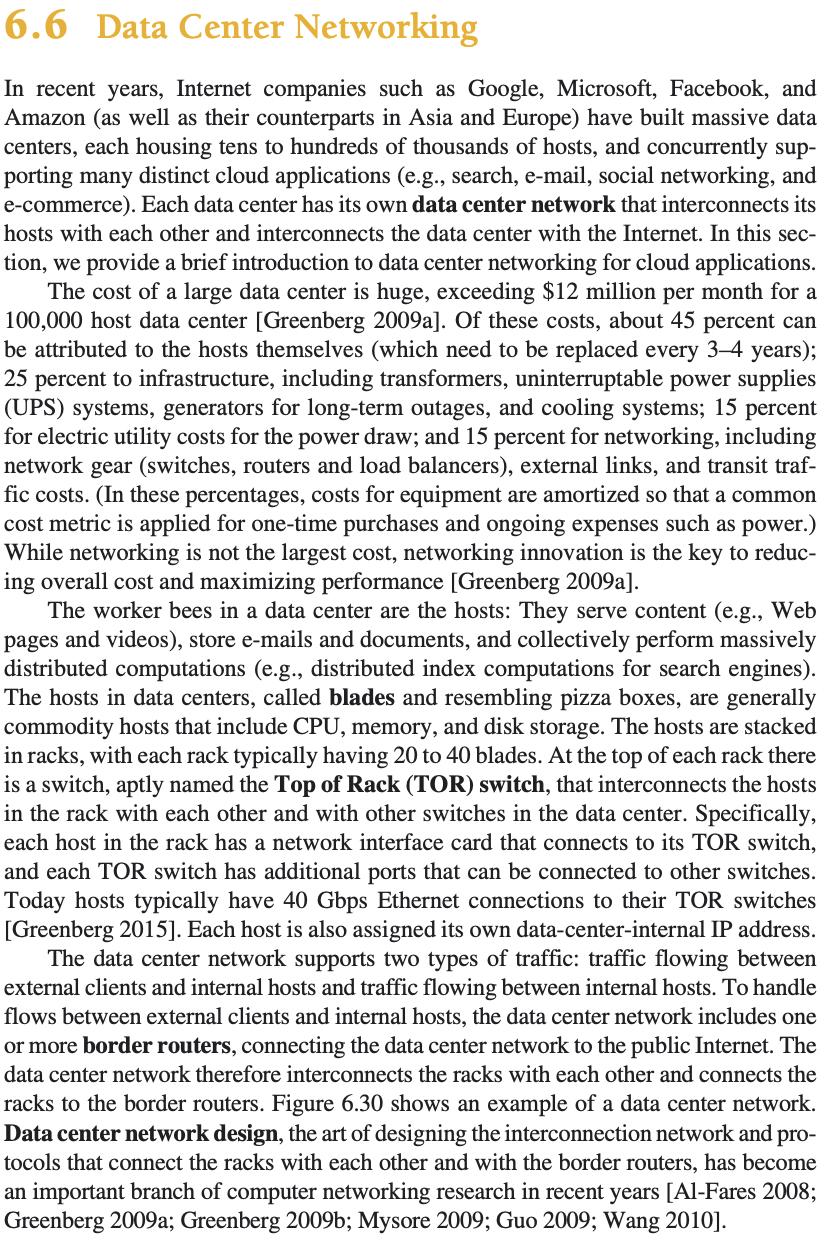

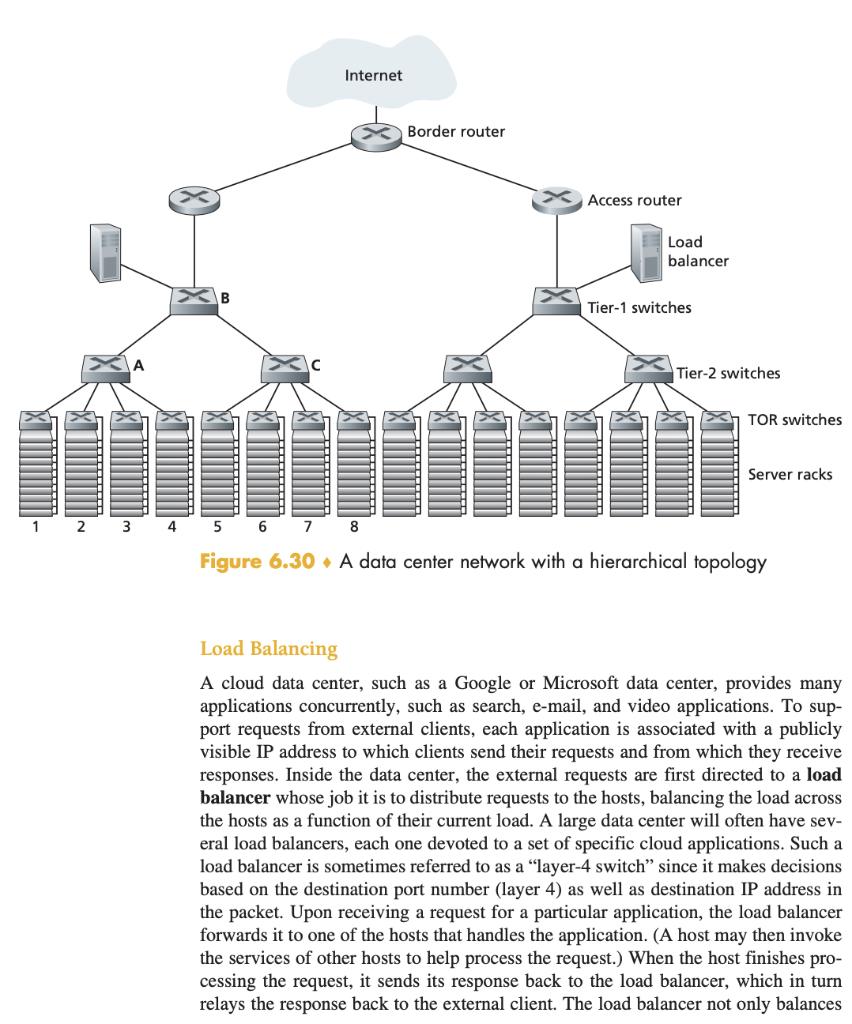

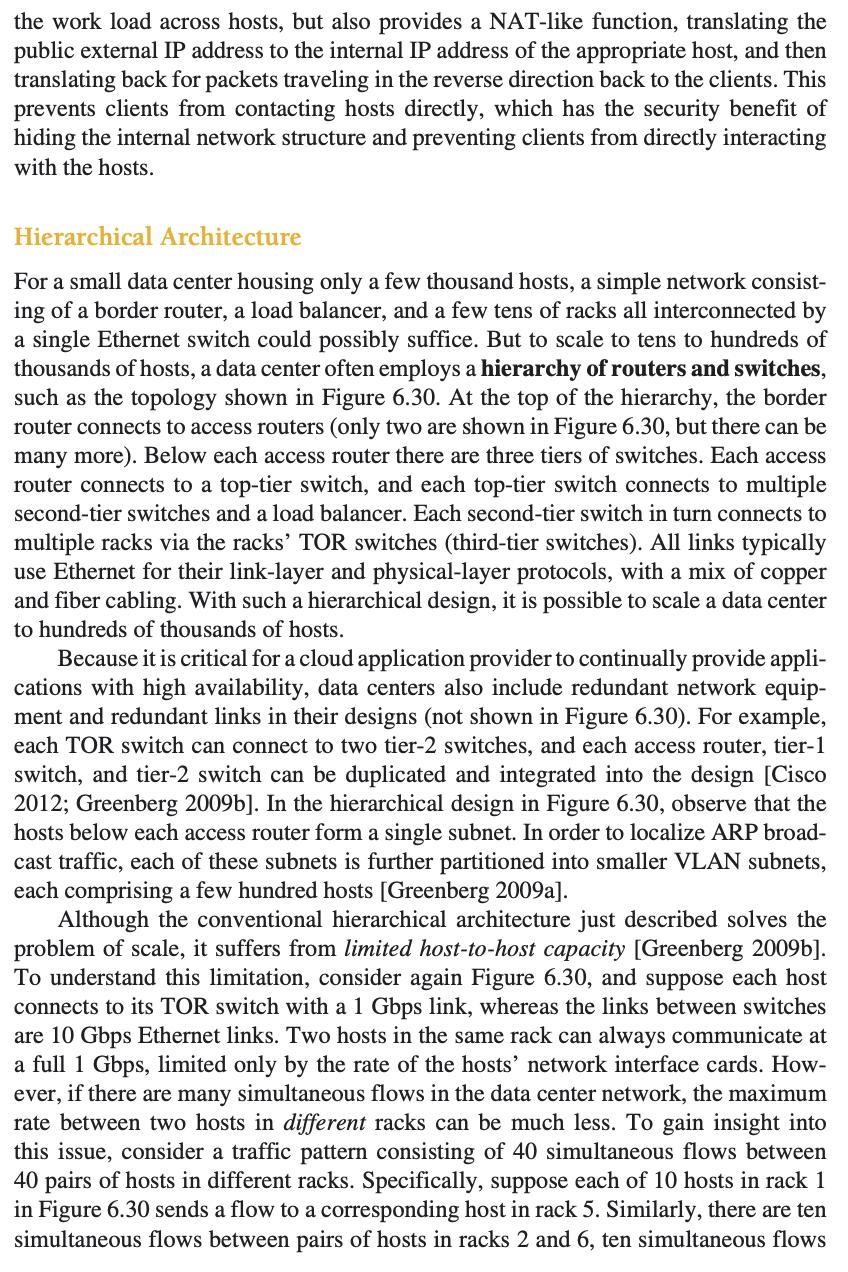

A data centre design typically comprises 3 security tiers with the application functionality split between servers in each tier as shown in following diagram. Users will access the web application via the internet. Users may be connected to internet via NBN (National Broadband Network) or a mobile access network. The web client server traffic flow is from user through Load Balancer to Web Application Server and then to Content database or file server. In this assignment it is assumed that the return path to user is via the same nodes as shown in diagram. Data Centre App1 Content Firewall Firewall LB Firewall Internet Users App2 Tier 3 Tier 2 Tier 1 (DMZ) In this network design VLANs (Virtual Local Area Network) will be used on ethernet switch nodes and connected to combined router/firewall nodes. As shown in the diagram the web application deployment comprises: 1. Load Balancers 2. Web Application Servers 3. Web Content Database or File Servers While user traffic load varies with time of day, the network design needs to support traffic load at peak time of day. At peak traffic time of day, it is assumed that 1. Each user makes 1 web request per minute (i.e. an average per user request rate of 0.0167 requests per second) and that each request comprises 80 KBytes of data. User requests are assumed to be uniformly distributed. 2. Web servers are able to handle 15 requests per second. 3. Web server sends 1.0 MByte of data (including http protocol overhead) in response to each user request and that Web Content Server likewise responds with 1.0 MByte (including protocol overhead) to Web Application Server. Refer to section 6.6 in text for discussion on structure of data centre networks covering switch hierarchy. In this assignment assume 16 port Top of Rack (ToR) switches are to be used and assume that they have 1G ports for server connectivity and 10G or 1G ports for uplink. Aggregation (or tier 1 or 2 as referred to in text) switches may have 10G or 1G ports. Load Balancers are connected to a switch with required port bandwidth. For example, a typical ToR or aggregation switches would have been Cisco Catalyst 3750 Series switch in recent past but would now be moving towards Cisco Nexus 9000 series switches. AAAAA BEARGAA BAAAAA AAA AAAAAAA THENTIT T Catalyst 3750 switches 1. Calculate & tabulate traffic load in Mbps on physical links to support 10,000 users, and 2. Draw a physical block diagram of data centre network to support 10,000 users showing router/firewall nodes, switches and links. You do not have to draw individual servers. Identify on the diagram the Ethernet link transmission data rate between a. Switches b. Switches and servers (Web App & Web Content). Assume same number of Content servers as App servers and that servers are equally spread between ToR switches. c. Switch and Load Balancer d. Switches and routers 6.6 Data Center Networking In recent years, Internet companies such as Google, Microsoft, Facebook, and Amazon (as well as their counterparts in Asia and Europe) have built massive data centers, each housing tens to hundreds of thousands of hosts, and concurrently sup- porting many distinct cloud applications (e.g., search, e-mail, social networking, and e-commerce). Each data center has its own data center network that interconnects its hosts with each other and interconnects the data center with the Internet. In this sec- tion, we provide a brief introduction to data center networking for cloud applications. The cost of a large data center is huge, exceeding $12 million per month for a 100,000 host data center [Greenberg 2009a]. Of these costs, about 45 percent can be attributed to the hosts themselves (which need to be replaced every 3-4 years); 25 percent to infrastructure, including transformers, uninterruptable power supplies (UPS) systems, generators for long-term outages, and cooling systems; 15 percent for electric utility costs for the power draw; and 15 percent for networking, including network gear (switches, routers and load balancers), external links, and transit traf- fic costs. (In these percentages, costs for equipment are amortized so that a common cost metric is applied for one-time purchases and ongoing expenses such as power.) While networking is not the largest cost, networking innovation is the key to reduc- ing overall cost and maximizing performance [Greenberg 2009a]. The worker bees in a data center are the hosts: They serve content (e.g., Web pages and videos), store e-mails and documents, and collectively perform massively distributed computations (e.g., distributed index computations for search engines). The hosts in data centers, called blades and resembling pizza boxes, are generally commodity hosts that include CPU, memory, and disk storage. The hosts are stacked in racks, with each rack typically having 20 to 40 blades. At the top of each rack there is a switch, aptly named the Top of Rack (TOR) switch, that interconnects the hosts in the rack with each other and with other switches in the data center. Specifically, each host in the rack has a network interface card that connects to its TOR switch, and each TOR switch has additional ports that can be connected to other switches. Today hosts typically have 40 Gbps Ethernet connections to their TOR switches [Greenberg 2015]. Each host is also assigned its own data-center-internal IP address. The data center network supports two types of traffic: traffic flowing between external clients and internal hosts and traffic flowing between internal hosts. To handle flows between external clients and internal hosts, the data center network includes one or more border routers, connecting the data center network to the public Internet. The data center network therefore interconnects the racks with each other and connects the racks to the border routers. Figure 6.30 shows an example of a data center network. Data center network design, the art of designing the interconnection network and pro- tocols that connect the racks with each other and with the border routers, has become an important branch of computer networking research in recent years [Al-Fares 2008; Greenberg 2009a; Greenberg 2009b; Mysore 2009; Guo 2009; Wang 2010]. 1 5 6 7 Internet Border router Access router Load balancer Tier-1 switches Tier-2 switches TOR switches Server racks 8 Figure 6.30 A data center network with a hierarchical topology Load Balancing A cloud data center, such as a Google or Microsoft data center, provides many applications concurrently, such as search, e-mail, and video applications. To sup- port requests from external clients, each application is associated with a publicly visible IP address to which clients send their requests and from which they receive responses. Inside the data center, the external requests are first directed to a load balancer whose job it is to distribute requests to the hosts, balancing the load across the hosts as a function of their current load. A large data center will often have sev- eral load balancers, each one devoted to a set of specific cloud applications. Such a load balancer is sometimes referred to as a "layer-4 switch" since it makes decisions based on the destination port number (layer 4) as well as destination IP address in the packet. Upon receiving a request for a particular application, the load balancer forwards it to one of the hosts that handles the application. (A host may then invoke the services of other hosts to help process the request.) When the host finishes pro- cessing the request, it sends its response back to the load balancer, which in turn relays the response back to the external client. The load balancer not only balances the work load across hosts, but also provides a NAT-like function, translating the public external IP address to the internal IP address of the appropriate host, and then translating back for packets traveling in the reverse direction back to the clients. This prevents clients from contacting hosts directly, which has the security benefit of hiding the internal network structure and preventing clients from directly interacting with the hosts. Hierarchical Architecture For a small data center housing only a few thousand hosts, a simple network consist- ing of a border router, a load balancer, and a few tens of racks all interconnected by a single Ethernet switch could possibly suffice. But to scale to tens to hundreds of thousands of hosts, a data center often employs a hierarchy of routers and switches, such as the topology shown in Figure 6.30. At the top of the hierarchy, the border router connects to access routers (only two are shown in Figure 6.30, but there can be many more). Below each access router there are three tiers of switches. Each access router connects to a top-tier switch, and each top-tier switch connects to multiple second-tier switches and a load balancer. Each second-tier switch in turn connects to multiple racks via the racks' TOR switches (third-tier switches). All links typically use Ethernet for their link-layer and physical-layer protocols, with a mix of copper and fiber cabling. With such a hierarchical design, it is possible to scale a data center to hundreds of thousands of hosts. Because it is critical for a cloud application provider to continually provide appli- cations with high availability, data centers also include redundant network equip- ment and redundant links in their designs (not shown in Figure 6.30). For example, each TOR switch can connect to two tier-2 switches, and each access router, tier-1 switch, and tier-2 switch can be duplicated and integrated into the design [Cisco 2012; Greenberg 2009b]. In the hierarchical design in Figure 6.30, observe that the hosts below each access router form a single subnet. In order to localize ARP broad- cast traffic, each of these subnets is further partitioned into smaller VLAN subnets, each comprising a few hundred hosts [Greenberg 2009a]. Although the conventional hierarchical architecture just described solves the problem of scale, it suffers from limited host-to-host capacity [Greenberg 2009b]. To understand this limitation, consider again Figure 6.30, and suppose each host connects to its TOR switch with a 1 Gbps link, whereas the links between switches are 10 Gbps Ethernet links. Two hosts in the same rack can always communicate at a full 1 Gbps, limited only by the rate of the hosts' network interface cards. How- ever, if there are many simultaneous flows in the data center network, the maximum rate between two hosts in different racks can be much less. To gain insight into this issue, consider a traffic pattern consisting of 40 simultaneous flows between 40 pairs of hosts in different racks. Specifically, suppose each of 10 hosts in rack 1 in Figure 6.30 sends a flow to a corresponding host in rack 5. Similarly, there are ten simultaneous flows between pairs of hosts in racks 2 and 6, ten simultaneous flows between racks 3 and 7, and ten simultaneous flows between racks 4 and 8. If each flow evenly shares a link's capacity with other flows traversing that link, then the 40 flows crossing the 10 Gbps A-to-B link (as well as the 10 Gbps B-to-C link) will each only receive 10 Gbps / 40 = 250 Mbps, which is significantly less than the 1 Gbps network interface card rate. The problem becomes even more acute for flows between hosts that need to travel higher up the hierarchy. One possible solution to this limitation is to deploy higher-rate switches and routers. But this would signifi- cantly increase the cost of the data center, because switches and routers with high port speeds are very expensive. Supporting high-bandwidth host-to-host communication is important because a key requirement in data centers is flexibility in placement of computation and ser- vices [Greenberg 2009b; Farrington 2010]. For example, a large-scale Internet search engine may run on thousands of hosts spread across multiple racks with significant bandwidth requirements between all pairs of hosts. Similarly, a cloud computing service such as EC2 may wish to place the multiple virtual machines comprising a customer's service on the physical hosts with the most capacity irrespective of their location in the data center. If these physical hosts are spread across multiple racks, network bottlenecks as described above may result in poor performance. Trends in Data Center Networking In order to reduce the cost of data centers, and at the same time improve their delay and throughput performance, Internet cloud giants such as Google, Facebook, Amazon, and Microsoft are continually deploying new data center network designs. Although these designs are proprietary, many important trends can nevertheless be identified. One such trend is to deploy new interconnection architectures and network protocols that overcome the drawbacks of the traditional hierarchical designs. One such approach is to replace the hierarchy of switches and routers with a fully con- nected topology [Facebook 2014; Al-Fares 2008; Greenberg 2009b; Guo 2009], such as the topology shown in Figure 6.31. In this design, each tier-1 switch connects to all of the tier-2 switches so that (1) host-to-host traffic never has to rise above the switch tiers, and (2) with n tier-1 switches, between any two tier-2 switches there are n disjoint paths. Such a design can significantly improve the host-to-host capacity. To see this, consider again our example of 40 flows. The topology in Figure 6.31 can handle such a flow pattern since there are four distinct paths between the first tier-2 switch and the second tier-2 switch, together providing an aggregate capacity of 40 Gbps between the first two tier-2 switches. Such a design not only alleviates the host-to-host capacity limitation, but also creates a more flexible computation and ser- vice environment in which communication between any two racks not connected to the same switch is logically equivalent, irrespective of their locations in the data center. Another major trend is to employ shipping container-based modular data cent- ers (MDCs) [YouTube 2009; Waldrop 2007]. In an MDC, a factory builds, within a 2 3 4 8 Figure 6.31 Highly interconnected data network topology 6 7 standard 12-meter shipping container, a "mini data center" and ships the container to the data center location. Each container has up to a few thousand hosts, stacked in tens of racks, which are packed closely together. At the data center location, mul- tiple containers are interconnected with each other and also with the Internet. Once a prefabricated container is deployed at a data center, it is often difficult to service. Thus, each container is designed for graceful performance degradation: as compo- nents (servers and switches) fail over time, the container continues to operate but with degraded performance. When many components have failed and performance has dropped below a threshold, the entire container is removed and replaced with a fresh one. Building a data center out of containers creates new networking challenges. With an MDC, there are two types of networks: the container-internal networks within each of the containers and the core network connecting each container [Guo 2009; Farrington 2010]. Within each container, at the scale of up to a few thousand hosts, it is possible to build a fully connected network (as described above) using inexpensive commodity Gigabit Ethernet switches. However, the design of the core network, interconnecting hundreds to thousands of containers while providing high host-to-host bandwidth across containers for typical workloads, remains a chal- lenging problem. A hybrid electrical/optical switch architecture for interconnecting the containers is proposed in [Farrington 2010]. When using highly interconnected topologies, one of the major issues is design- ing routing algorithms among the switch One possibility [Greenberg 2009b] is to use a form of random routing. Another possibility [Guo 2009] is to deploy mul- tiple network interface cards in each host, connect each host to multiple low-cost commodity switches, and allow the hosts themselves to intelligently route traffic among the switches. Variations and extensions of these approaches are currently being deployed in contemporary data centers. Tier-1 switches Tier-2 switches TOR switches Server racks Another important trend is that large cloud providers are increasingly building or customizing just about everything that is in their data centers, including network adapters, switches routers, TORS, software, and networking protocols [Greenberg 2015, Singh 2015]. Another trend, pioneered by Amazon, is to improve reliability with "availability zones," which essentially replicate distinct data centers in different nearby buildings. By having the buildings nearby (a few kilometers apart), trans- actional data can be synchronized across the data centers in the same availability zone while providing fault tolerance [Amazon 2014]. Many more innovations in data center design are likely to continue to come; interested readers are encouraged to see the recent papers and videos on data center network design.

Step by Step Solution

3.31 Rating (151 Votes )

There are 3 Steps involved in it

To calculate and tabulate the traffic load in Mbps on physical links to support 10000 users in the data center network we need to consider various fac... View full answer

Get step-by-step solutions from verified subject matter experts