Question:

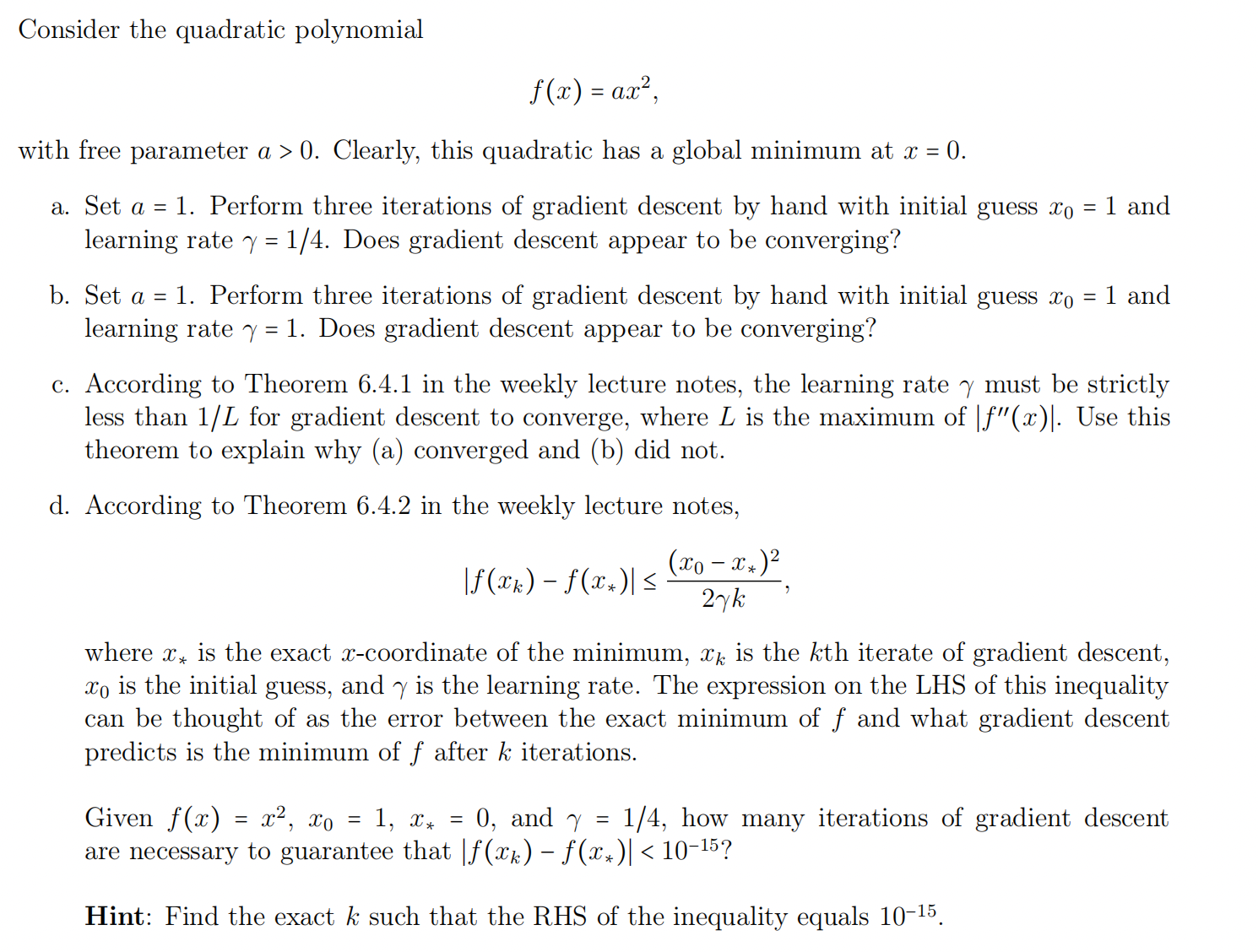

Consider the quadratic polynomial $f(x) = ax^2$, with free parameter $a > 0$. Clearly, this quadratic has a global minimum at $x^* = 0$.

a. Set $a = 1$. Perform three iterations of gradient descent by hand with initial guess $x_0 = 1$ and learning rate $\gamma = 1/4$. Does gradient descent appear to be converging?

b. Set $a = 1$. Perform three iterations of gradient descent by hand with initial guess $x_0 = 1$ and learning rate $\gamma = 1$. Does gradient descent appear to be converging?

c. According to Theorem 6.4.1 in the weekly lecture notes, the learning rate $\gamma$ must be strictly less than $1/L$ for gradient descent to converge, where $L$ is the maximum of $|f''(x)|$. Use this theorem to explain why (a) converged and (b) did not.

d. According to Theorem 6.4.2 in the weekly lecture notes,

$$|f(x_k) - f(x^*)| \le \frac{(x_0 - x^*)^2}{2\gamma k},$$

where $x^*$ is the exact x-coordinate of the minimum, $x_k$ is the $k$th iterate of gradient descent, $x_0$ is the initial guess, and $\gamma$ is the learning rate. The expression on the LHS of this inequality can be thought of as the error between the exact minimum of $f$ and what gradient descent predicts is the minimum of $f$ after $k$ iterations. Given $f(x) = x^2$, $x_0 = 1$, $x^* = 0$, and $\gamma = 1/4$, how many iterations of gradient descent are necessary to guarantee that $|f(x_k) - f(x^*)| < 10^{-15}$? Hint: Find the exact $k$ such that the RHS of the inequality equals $10^{-15}$.
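The hand computations in parts (a), (b), and (d) can be checked with a short script. What follows is a minimal sketch, not a reference solution. It assumes the usual update rule $x_{k+1} = x_k - \gamma f'(x_k)$ with $f'(x) = 2ax$, and it assumes $x_0 = 1$ in parts (a) and (b), since that value is garbled in the question text and is taken here from part (d). The function names `gradient_descent` and `iterations_for_bound` are hypothetical helpers introduced for illustration.

```python
from fractions import Fraction


def gradient_descent(a, x0, gamma, steps):
    """Return the iterates [x_0, ..., x_steps] for f(x) = a*x**2.

    Uses the update x_{k+1} = x_k - gamma * f'(x_k), with f'(x) = 2*a*x.
    """
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x - gamma * 2 * a * x)
    return xs


def iterations_for_bound(x0, x_star, gamma, tol):
    """Solve (x0 - x_star)**2 / (2*gamma*k) = tol for k (the hint in part d)."""
    return (x0 - x_star) ** 2 / (2 * gamma * tol)


# Exact rational arithmetic keeps the iterates free of rounding error.
# Assumption: x0 = 1 for both parts (a) and (b).
part_a = gradient_descent(a=1, x0=Fraction(1), gamma=Fraction(1, 4), steps=3)
part_b = gradient_descent(a=1, x0=Fraction(1), gamma=Fraction(1), steps=3)

# Part (d): f(x) = x**2, x0 = 1, x* = 0, gamma = 1/4, tolerance 10**-15.
k = iterations_for_bound(Fraction(1), Fraction(0), Fraction(1, 4),
                         Fraction(1, 10**15))

print("part (a):", [str(x) for x in part_a])
print("part (b):", [str(x) for x in part_b])
print("part (d): k =", k)
```

Under these assumptions, the iterates in part (a) halve at every step, while those in part (b) flip sign without shrinking. With $a = 1$ we have $f''(x) = 2$, so $L = 2$ and $1/L = 1/2$, which is the threshold part (c) asks about: $\gamma = 1/4$ lies below it and $\gamma = 1$ does not. For part (d) the RHS reduces to $2/k$, so the script solves $2/k = 10^{-15}$.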
