Question: Please answer a and b. A Markov chain has two states, A and B, with transitions as follows. If the MC is at state A

Please answer a and b.

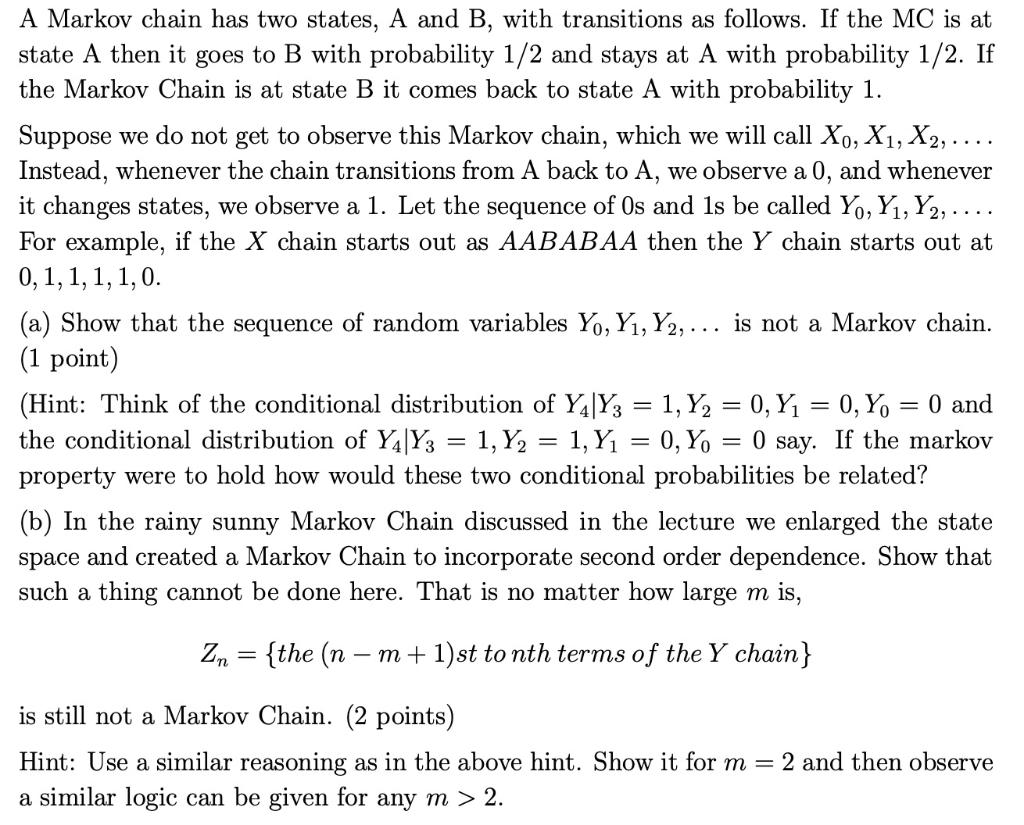

A Markov chain has two states, A and B, with transitions as follows. If the chain is at state A, it goes to B with probability 1/2 and stays at A with probability 1/2. If the chain is at state B, it returns to state A with probability 1. Suppose we do not get to observe this Markov chain, which we will call $X_0, X_1, X_2, \ldots$. Instead, whenever the chain transitions from A back to A, we observe a 0, and whenever it changes states, we observe a 1. Let the sequence of 0s and 1s be called $Y_0, Y_1, Y_2, \ldots$. For example, if the X chain starts out as AABABAA, then the Y chain starts out as 0, 1, 1, 1, 1, 0.

(a) Show that the sequence of random variables $Y_0, Y_1, Y_2, \ldots$ is not a Markov chain. (1 point)

Hint: Think of the conditional distribution of $Y_4 \mid Y_3 = 1, Y_2 = 0, Y_1 = 0, Y_0 = 0$ and the conditional distribution of $Y_4 \mid Y_3 = 1, Y_2 = 1, Y_1 = 0, Y_0 = 0$, say. If the Markov property were to hold, how would these two conditional probabilities be related?

(b) In the rainy-sunny Markov chain discussed in the lecture, we enlarged the state space and created a Markov chain to incorporate second-order dependence. Show that such a thing cannot be done here. That is, no matter how large $m$ is, $Z_n$ = {the $(n - m + 1)$st to $n$th terms of the Y chain} is still not a Markov chain. (2 points)

Hint: Use reasoning similar to the hint above. Show it for $m = 2$ and then observe that similar logic can be given for any $m > 2$.
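The two conditional distributions named in the hint for part (a) can be checked by brute force before writing the argument. The following is a minimal Python sketch, not a proof: it enumerates every path of the hidden X chain, maps it to its Y sequence, and computes the conditional probability exactly. The function names (`observe`, `path_prob`, `next_one_prob`) are hypothetical helpers introduced for illustration, and the starting distribution (X_0 = A with probability 1) is an assumption, since the problem does not specify one. The assumption does not affect the histories used here, because observing Y_0 = 0 already forces X_0 = A.

```python
from fractions import Fraction
from itertools import product

# Transition probabilities of the hidden chain X, as given in the problem.
P = {
    ("A", "A"): Fraction(1, 2),
    ("A", "B"): Fraction(1, 2),
    ("B", "A"): Fraction(1),
    ("B", "B"): Fraction(0),
}

# Assumption (not stated in the problem): X_0 = A with probability 1.
INITIAL = {"A": Fraction(1), "B": Fraction(0)}


def observe(path):
    """Map an X path to its Y sequence: 0 when the state repeats
    (only A -> A has positive probability), 1 when the state changes."""
    return tuple(0 if a == b else 1 for a, b in zip(path, path[1:]))


def path_prob(path):
    """Probability of one X path under INITIAL and P."""
    prob = INITIAL[path[0]]
    for a, b in zip(path, path[1:]):
        prob *= P[(a, b)]
    return prob


def next_one_prob(history):
    """Exact P(Y_n = 1 | Y_0, ..., Y_{n-1} = history) by enumerating X paths."""
    n = len(history)
    joint = {0: Fraction(0), 1: Fraction(0)}
    # Y_0..Y_n is determined by X_0..X_{n+1}, i.e. n + 2 hidden states.
    for path in product("AB", repeat=n + 2):
        ys = observe(path)
        if ys[:n] == tuple(history):
            joint[ys[n]] += path_prob(path)
    total = joint[0] + joint[1]
    if total == 0:
        raise ValueError("history has probability zero")
    return joint[1] / total


# Part (a): both histories end with Y_3 = 1, listed as (Y_0, Y_1, Y_2, Y_3).
print(next_one_prob([0, 0, 0, 1]))  # prints 1
print(next_one_prob([0, 0, 1, 1]))  # prints 1/2

# Part (b): two histories whose last m terms are all 1s.
for m in range(1, 5):
    print(m, next_one_prob([0, 0] + [1] * m), next_one_prob([0] + [1] * (m + 1)))
```

Both histories in part (a) agree on Y_3 = 1 yet give different probabilities for Y_4 = 1, which is what the hint asks you to explain. In the loop for part (b), the two histories share the same last m observations but differ in how many 1s follow the most recent 0, so the hidden chain sits in a different state in each case. A written solution still needs to justify this from the transition rules rather than from enumeration.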
