Question:

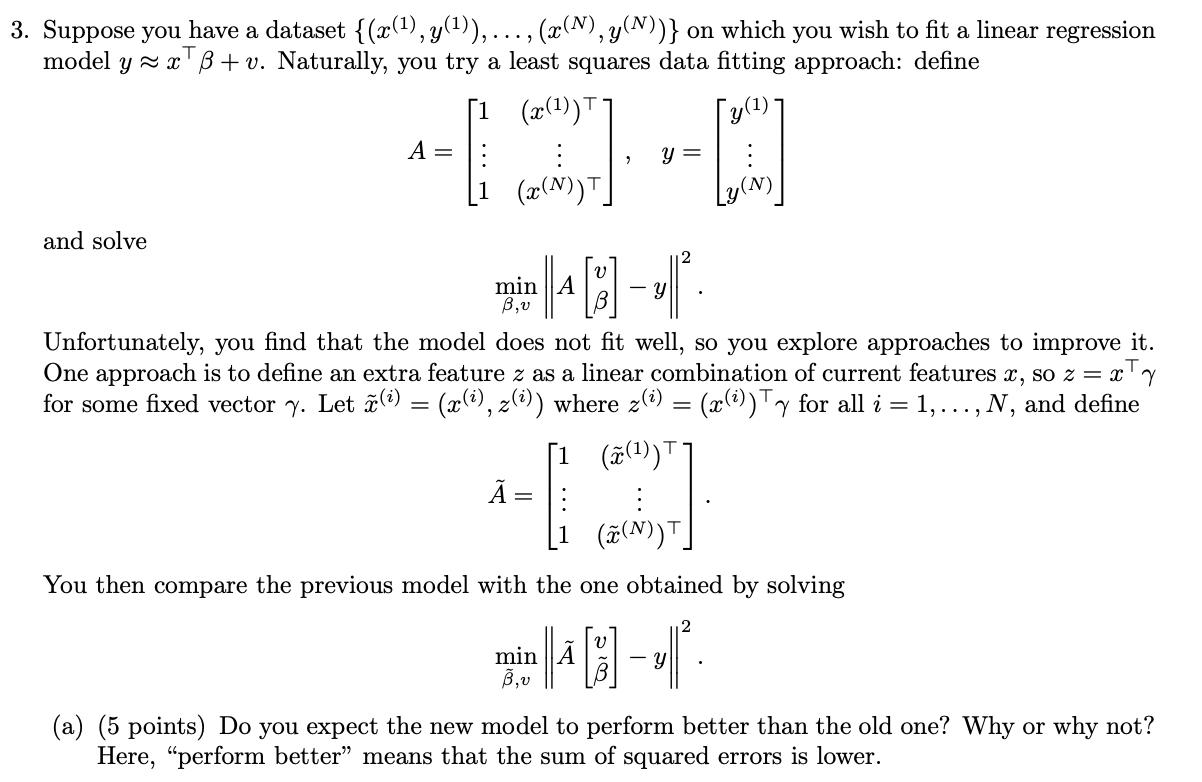

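3. Suppose you have a dataset $\{(x^{(1)}, y^{(1)}), \ldots, (x^{(N)}, y^{(N)})\}$ on which you wish to fit a linear regression model $y \approx x^T\beta + v$. Naturally, you try a least squares data fitting approach: define

$$
A = \begin{bmatrix} (x^{(1)})^T \\ \vdots \\ (x^{(N)})^T \end{bmatrix}, \qquad
y = \begin{bmatrix} y^{(1)} \\ \vdots \\ y^{(N)} \end{bmatrix},
$$

and solve

$$
\min_{\beta,\, v} \; \left\| A\beta + v\mathbf{1} - y \right\|^2 .
$$

Unfortunately, you find that the model does not fit well, so you explore approaches to improve it. One approach is to define an extra feature $z$ as a linear combination of the current features $x$, so $z = x^T\gamma$ for some fixed vector $\gamma$. Let $\tilde{x}^{(i)} = (x^{(i)}, z^{(i)})$, where $z^{(i)} = (x^{(i)})^T\gamma$ for all $i = 1, \ldots, N$, and define

$$
\tilde{A} = \begin{bmatrix} (\tilde{x}^{(1)})^T \\ \vdots \\ (\tilde{x}^{(N)})^T \end{bmatrix} .
$$

You then compare the previous model with the one obtained by solving

$$
\min_{\tilde{\beta},\, \tilde{v}} \; \left\| \tilde{A}\tilde{\beta} + \tilde{v}\mathbf{1} - y \right\|^2 .
$$

(a) (5 points) Do you expect the new model to perform better than the old one? Why or why not? Here, "perform better" means that the sum of squared errors is lower.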
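One way to build intuition for part (a) is a small numerical check. The extra feature is the original feature matrix times a fixed vector, so the new column already lies in the span of the existing columns. Any prediction the augmented model can produce can therefore also be produced by the original model, and the minimum sum of squared errors should not change. The sketch below is only an illustration under assumed conditions: the data are synthetic, the sizes `N` and `n` are arbitrary, and the names `sse`, `gamma`, and `X_tilde` are hypothetical and not part of the original problem.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed; all data here is synthetic

N, n = 50, 3                    # assumed sizes, chosen only for illustration
X = rng.normal(size=(N, n))     # rows play the role of (x^(i))^T
y = rng.normal(size=N)          # arbitrary targets
gamma = rng.normal(size=n)      # fixed vector defining the extra feature


def sse(features, targets):
    """Minimum sum of squared errors for targets ~ features @ beta + v."""
    # Append a column of ones so the offset v is fitted along with beta.
    design = np.column_stack([features, np.ones(len(targets))])
    # lstsq returns a least squares solution even if columns are dependent.
    coef, *_ = np.linalg.lstsq(design, targets, rcond=None)
    residual = design @ coef - targets
    return float(residual @ residual)


z = X @ gamma                        # extra feature: linear combination of x
X_tilde = np.column_stack([X, z])    # augmented feature matrix

sse_old = sse(X, y)
sse_new = sse(X_tilde, y)
print(sse_old, sse_new)              # the two values agree up to rounding
```

Note that the augmented design matrix has linearly dependent columns, so the minimizing coefficients are no longer unique even though the minimum error is the same; `np.linalg.lstsq` is used here because it tolerates rank deficiency.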
